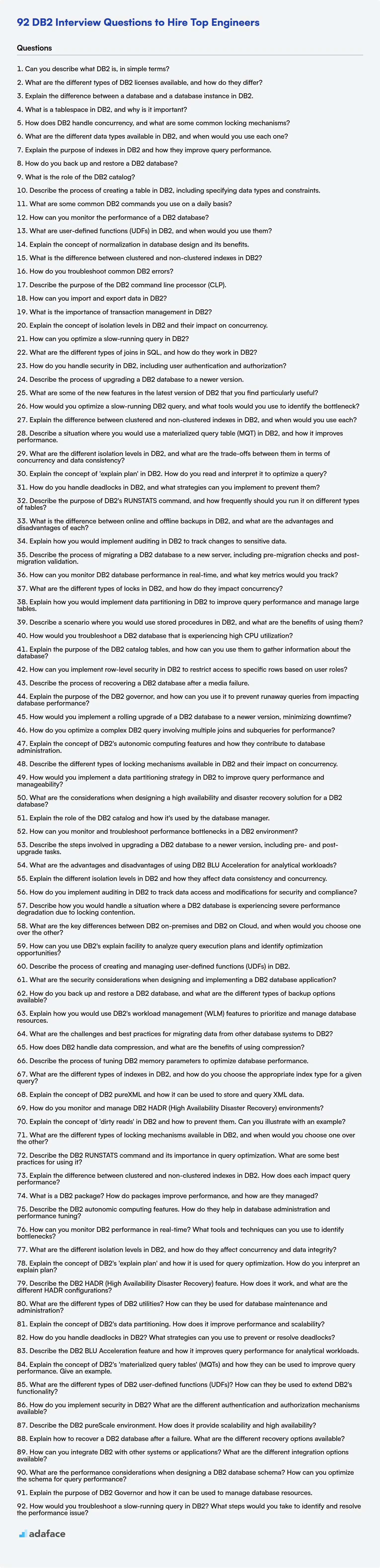

When evaluating candidates for database roles, particularly those involving IBM DB2, having a structured set of questions is key. Like SQL developers, Database experts also need to know their stuff.

This ensures you can fairly assess their knowledge and skills related to database management, query optimization, and system administration. This blog post provides a curated list of interview questions categorized by difficulty, ranging from basic to expert levels, including multiple-choice questions.

By using these questions, you will be able to identify candidates who not only understand IBM DB2 but can also apply their knowledge to solve real-world problems, or you could use an IBM DB2 online test before the interview.

Table of contents

Basic IBM DB2 interview questions

1. Can you describe what DB2 is, in simple terms?

DB2 is a relational database management system (RDBMS) from IBM. Think of it as a structured way to store and manage large amounts of data, like a digital filing cabinet but much more powerful. It organizes data into tables with rows and columns, allowing for efficient querying, updating, and managing of information.

It's designed for enterprise-level applications, meaning it can handle a high volume of transactions and users while ensuring data integrity and security. DB2 is used in various industries for tasks like managing customer information, tracking inventory, and supporting financial transactions.

2. What are the different types of DB2 licenses available, and how do they differ?

DB2 offers various license types catering to different needs and environments. Some common ones include:

- DB2 Community Edition: This is a free version, suitable for development, testing, and small-scale deployments. It has limitations on CPU cores and memory usage.

- DB2 Standard Edition: Designed for mid-sized businesses. It offers more features and scalability than the Community Edition, but still has resource restrictions.

- DB2 Advanced Edition: The most comprehensive edition, intended for large enterprises. It provides the full range of DB2 features, including advanced analytics, high availability, and disaster recovery capabilities, and typically has fewer restrictions.

- DB2 Developer Edition: Available for development and testing purposes. It contains the full functionality of DB2 Advanced Edition but is not for production use and has specific license terms regarding commercial deployment.

The primary differences lie in the functionality offered (e.g., advanced features), the scalability limits (e.g., maximum memory and CPU cores), and the licensing costs. Each license is tailored to a specific use case and user profile.

3. Explain the difference between a database and a database instance in DB2.

In DB2, a database is a container for data objects like tables, views, and indexes. It's the logical grouping of related data. Think of it as the blueprint or schema for your data.

A database instance, on the other hand, is the running environment of the database. It's the actual set of processes and memory structures that manage the database's data on the server. You can have multiple database instances running on a single server, each managing its own separate database(s). Each instance uses its own memory and processes to operate independently. So, the database is the what, and the database instance is the how and where the database exists and is managed.

4. What is a tablespace in DB2, and why is it important?

A tablespace in DB2 is a logical storage container for database objects, primarily tables and indexes. It acts as an intermediary between the database and the physical storage (disk). Tablespaces help manage storage allocation, performance, and availability of data.

Tablespaces are important because they allow you to:

- Control data placement: Specify where data is physically stored, enabling optimization for performance or separating data based on usage.

- Manage storage: Easily allocate, extend, and backup storage for tables and indexes.

- Improve performance: Use different tablespace types (e.g., SMS, DMS, Automatic Storage) to optimize I/O operations.

- Enhance availability: Tablespaces can be backed up and restored independently, improving recovery time.

5. How does DB2 handle concurrency, and what are some common locking mechanisms?

DB2 handles concurrency using a locking mechanism to ensure data integrity when multiple users or applications access and modify the same data simultaneously. It employs various lock types to manage concurrent access to data.

Common locking mechanisms in DB2 include:

- Shared (S) locks: Allow multiple transactions to read the same data concurrently.

- Exclusive (X) locks: Prevent other transactions from accessing the data while one transaction is modifying it.

- Update (U) locks: Used before modifying data, allowing conversion to an exclusive lock later.

- Intent locks (IS, IX, SIX): Indicate a transaction's intention to acquire shared or exclusive locks at a finer granularity, improving concurrency. DB2 also uses lock escalation (escalating row locks to table locks) to manage lock resources. Isolation levels, such as Read Uncommitted, Read Committed, Repeatable Read, and Serializable, determine the degree to which transactions are isolated from each other, thus affecting concurrency.

6. What are the different data types available in DB2, and when would you use each one?

DB2 offers a variety of data types to suit different data storage needs. Some common types include: INTEGER (for whole numbers, use when you need to store counts or IDs), SMALLINT (smaller range integer, useful for space optimization), BIGINT (larger range integer), DECIMAL or NUMERIC (for precise decimal values, useful for financial data), REAL and DOUBLE (for floating-point numbers, useful when storing scientific values where precision isn't critical), CHAR (fixed-length character strings, use when you need to store fixed-length codes, states, etc.), VARCHAR (variable-length character strings, use for names, addresses, or other text of variable length), DATE (for storing dates), TIME (for storing times), TIMESTAMP (for storing date and time together, often used to timestamp events or transactions), CLOB (Character Large Object, for storing large amounts of text data like documents), and BLOB (Binary Large Object, for storing binary data like images or audio).

The choice of data type depends on the nature of the data you are storing. Consider range, precision, and storage requirements when selecting the appropriate type. For example, if you are storing employee salaries, DECIMAL is a good choice due to its precision. If you are storing a flag that represents a boolean state, you can use SMALLINT to store 0 or 1. Use VARCHAR only when you don't know the exact length of the string beforehand. Prefer CLOB or BLOB to store large amount of string or binary data that may be more than 4000 bytes. If it's just date, time or date and time, it's better to use DATE, TIME or TIMESTAMP respectively.

7. Explain the purpose of indexes in DB2 and how they improve query performance.

Indexes in DB2 are data structures that improve the speed of data retrieval operations on a database table. They contain a subset of the table's columns and pointers to the full rows in the table. By creating an index on one or more columns, DB2 can quickly locate the rows that match a query's WHERE clause without having to scan the entire table, which is known as a full table scan. This significantly reduces the I/O operations required and accelerates query execution, especially for large tables.

Indexes improve query performance by:

- Reducing I/O: Indexes enable the database to retrieve only the necessary data pages.

- Faster Lookups: Provide a quick lookup mechanism based on indexed columns.

- Optimized Sorting: Can be used to satisfy

ORDER BYclauses more efficiently, avoiding separate sorting operations. - Ensuring Uniqueness: Can enforce uniqueness constraints on columns.

8. How do you back up and restore a DB2 database?

Backing up a DB2 database can be achieved using the BACKUP DATABASE command. For example, BACKUP DATABASE <dbname> TO <backup_location>. This command creates a backup image containing the database's data and metadata. Restoring involves using the RESTORE DATABASE command. For instance, RESTORE DATABASE <dbname> FROM <backup_location> REPLACE. The REPLACE option is often necessary to overwrite the existing database. Other options and parameters can be used to tailor the backup and restore processes, like specifying incremental backups, online backups, or redirecting restore operations to a different database name or location. It's important to consider the desired recovery point objective (RPO) and recovery time objective (RTO) when choosing backup strategies.

9. What is the role of the DB2 catalog?

The DB2 catalog is a set of system tables that contain metadata about the database objects within a DB2 database system. It's essentially a data dictionary that stores information about tables, views, indexes, users, privileges, packages, and other database elements.

The catalog is crucial for DB2's operation. DB2 uses the catalog to validate SQL statements, optimize query execution plans, enforce security, and manage dependencies between database objects. Without an accurate and up-to-date catalog, DB2 would be unable to function correctly.

10. Describe the process of creating a table in DB2, including specifying data types and constraints.

To create a table in DB2, you use the CREATE TABLE statement. The basic syntax involves specifying the table name, column names, data types for each column, and optionally, constraints.

For example:

CREATE TABLE employees (

employee_id INTEGER NOT NULL,

first_name VARCHAR(50),

last_name VARCHAR(50) NOT NULL,

salary DECIMAL(10, 2),

hire_date DATE,

PRIMARY KEY (employee_id),

CONSTRAINT check_salary CHECK (salary > 0)

);

In this example:

employeesis the table name.employee_id,first_name,last_name,salary, andhire_dateare column names.INTEGER,VARCHAR,DECIMAL, andDATEare data types.NOT NULLandPRIMARY KEYare constraints.CHECKis another constraint that enforces a condition on the salary column. Data types available includeINTEGER,VARCHAR,DATE,TIMESTAMP,DECIMAL, andCLOBamongst many others. Constraints such asUNIQUE,FOREIGN KEY, andDEFAULTcan also be defined.

11. What are some common DB2 commands you use on a daily basis?

On a daily basis, I frequently use DB2 commands for tasks like connecting to databases, querying data, and managing database objects. Some of the most common commands include:

db2 connect to <database_name> user <user_id> using <password>: To connect to a specific database.db2 "SELECT * FROM <table_name> WHERE <condition>": For querying data from tables.db2 list tables: To list all the tables in the current database.db2 describe table <table_name>: To view the structure of a table.db2 get db config show detail: To retrieve the database configuration settings.db2 terminate: To terminate a database connection.

12. How can you monitor the performance of a DB2 database?

To monitor DB2 database performance, you can use several methods. The DB2 command line processor (CLP) lets you run commands like db2top for real-time monitoring of key metrics such as CPU usage, buffer pool activity, and lock waits. Additionally, you can use the DB2 Performance Expert tool, which provides in-depth analysis and reporting capabilities. Finally, health monitors trigger alerts based on predefined thresholds for various performance indicators. Regularly reviewing these metrics helps identify bottlenecks and optimize database performance.

13. What are user-defined functions (UDFs) in DB2, and when would you use them?

User-defined functions (UDFs) in DB2 are routines written by users (often in languages like SQL, C, C++, Java, or .NET) and registered with the database server. They extend the built-in functionality of DB2, allowing you to encapsulate complex logic and reuse it in SQL statements as if it were a native function.

You would use UDFs when:

- You need to perform calculations or operations that are not supported by built-in DB2 functions.

- You want to encapsulate complex business logic within the database for better maintainability and reusability.

- You need to improve performance by moving processing closer to the data, especially for computationally intensive tasks.

- You need to access external data sources or services from within SQL.

For example, you could create a UDF to calculate a complex tax rate, format a phone number, or validate an email address. Here's an example of a simple SQL UDF:

CREATE FUNCTION calculate_discount (price DECIMAL, discount_percent DECIMAL)

RETURNS DECIMAL

LANGUAGE SQL

DETERMINISTIC

NO EXTERNAL ACTION

RETURN price * (1 - discount_percent/100);

14. Explain the concept of normalization in database design and its benefits.

Normalization in database design is the process of organizing data to reduce redundancy and improve data integrity. It involves dividing databases into tables and defining relationships between the tables. The primary goal is to isolate data so that changes to an attribute are made in only one table.

Benefits of normalization include: Reduced data redundancy and storage space, improved data consistency, easier data modification, and better query performance. It simplifies database maintenance and improves overall data quality.

15. What is the difference between clustered and non-clustered indexes in DB2?

In DB2, a clustered index determines the physical order of data on disk. There can only be one clustered index per table because data can only be physically sorted in one way. If a table has a clustered index, the data rows are stored on disk in the order defined by the index's key columns.

Non-clustered indexes, on the other hand, are separate structures that contain the index key and a pointer to the actual data row. A table can have multiple non-clustered indexes. They provide a way to locate data quickly without having to scan the entire table. Think of it like an index in a book, pointing to the pages where specific topics are discussed. When a non-clustered index is used, DB2 uses the index to find the pointer and then retrieves the data from that specific location.

16. How do you troubleshoot common DB2 errors?

Troubleshooting DB2 errors involves a systematic approach. First, identify the error message and SQLCODE. Use the DB2 documentation (IBM Knowledge Center) to look up the SQLCODE, which provides a description of the error and possible causes. Check the DB2 diagnostic logs (db2diag.log) for more detailed information about the error, including timestamps, affected objects, and related events. This log often contains valuable clues not present in the initial error message. For connection issues, check dbm cfg to confirm the instance name and port are correct. Validate network connectivity using ping and telnet.

Common errors and troubleshooting steps:

- SQL0805N (Package not found): Verify the application is bound to the database correctly using the

db2 bindcommand. Check package names. - SQL1031N (Database is in rollforward pending state): Issue the

db2 rollforward db <dbname> completecommand. - Lock timeouts: Examine the lock timeout setting (

locktimeoutdatabase configuration parameter). Monitor lock activity withdb2pd -locks. Increase the lock timeout or optimize queries to reduce lock contention. - SQL0904N (Unsuccessful execution because of an unavailable resource): This is a generic error. The db2diag.log will have more information about the specific resource problem, such as table space full or a missing index. Resolve the specific underlying issue.

17. Describe the purpose of the DB2 command line processor (CLP).

The DB2 command line processor (CLP) provides a command-line interface to interact with DB2 databases. It allows users to execute SQL statements, DB2 commands, and operating system commands directly against a DB2 database system.

Specifically, the CLP is used for tasks like creating databases, managing tables, running queries, loading data, administering users and permissions, and performing other database administration tasks. It's a vital tool for both developers and database administrators when scripting, automating tasks, or performing ad-hoc database operations.

18. How can you import and export data in DB2?

DB2 offers several utilities for importing and exporting data. The primary utilities are IMPORT and EXPORT.

- EXPORT: Extracts data from a DB2 table or view and saves it to a file in various formats like DEL (Delimited ASCII), IXF (Integration Exchange Format), or WSF (WebSphere Studio Format).

EXPORT TO 'data.del' OF DEL SELECT * FROM employees; - IMPORT: Loads data from a file into a DB2 table. It supports the same file formats as EXPORT (DEL, IXF, WSF). You can choose to insert, replace, replace create, or insert update the existing table data.

IMPORT FROM 'data.del' OF DEL INSERT INTO employees;

Besides IMPORT and EXPORT, LOAD utility is also available, which is generally faster than IMPORT, especially for large datasets. LOAD offers more advanced options, such as data validation and error handling, but is also less flexible than IMPORT for simple data transfers. The LOAD utility requires exclusive access to the table.

19. What is the importance of transaction management in DB2?

Transaction management in DB2 is crucial for maintaining data integrity and consistency. It ensures that a series of operations (a transaction) are treated as a single logical unit of work. This means either all operations within the transaction are successfully completed (committed), or none are (rolled back), even in the face of system failures, concurrent access, or errors.

Without proper transaction management, DB2 databases could suffer from data corruption, inconsistencies, and loss of data integrity. Specifically, features like atomicity (all or nothing), consistency (maintaining data integrity rules), isolation (preventing interference between concurrent transactions), and durability (guaranteeing changes are permanent after commit) are vital for reliable data processing. Using COMMIT and ROLLBACK statements are fundamental for implementing transaction control in DB2.

20. Explain the concept of isolation levels in DB2 and their impact on concurrency.

Isolation levels in DB2 control the degree to which concurrent transactions are isolated from each other. They define the extent to which one transaction's modifications are visible to other transactions running simultaneously. Higher isolation levels provide greater data consistency but can reduce concurrency, while lower levels improve concurrency at the expense of some data integrity.

DB2 supports four standard isolation levels: Uncommitted Read (UR), Cursor Stability (CS), Read Stability (RS), and Repeatable Read (RR). UR offers the highest concurrency but allows 'dirty reads' (reading uncommitted data). CS prevents dirty reads by only reading committed data. RS adds protection against non-repeatable reads (where a transaction reads the same row twice and gets different values due to another transaction's commit). RR provides the highest level of isolation by preventing both non-repeatable reads and phantom reads (where a transaction executes the same query twice and gets different sets of rows).

21. How can you optimize a slow-running query in DB2?

To optimize a slow-running query in DB2, several strategies can be employed. First, analyze the query's execution plan using db2expln or the Visual Explain tool in Data Studio to identify bottlenecks like full table scans or inefficient index usage. Ensure appropriate indexes exist on columns used in WHERE clauses, JOIN conditions, and ORDER BY clauses; create new indexes or modify existing ones if necessary. Update table statistics regularly using RUNSTATS to help the optimizer choose the best execution plan. Review the query's SQL syntax; rewrite complex subqueries or joins if possible, using techniques like common table expressions (CTEs) or alternative join algorithms.

Other optimization techniques include adjusting DB2 configuration parameters related to buffer pools, sort heap size, and statement caching. Consider using materialized query tables (MQTs) for frequently executed, complex queries. Also, examine the application code to ensure that it's using proper connection pooling and not issuing excessive numbers of small queries. Consider implementing query hints if necessary, but use them judiciously as they can prevent the optimizer from adapting to data changes.

22. What are the different types of joins in SQL, and how do they work in DB2?

SQL joins are used to combine rows from two or more tables based on a related column. In DB2 (and standard SQL), there are several types of joins:

- INNER JOIN: Returns rows only when there is a match in both tables.

- LEFT (OUTER) JOIN: Returns all rows from the left table and matched rows from the right table. If there is no match in the right table, it returns

NULLvalues for the right table's columns. - RIGHT (OUTER) JOIN: Returns all rows from the right table and matched rows from the left table. If there is no match in the left table, it returns

NULLvalues for the left table's columns. - FULL (OUTER) JOIN: Returns all rows when there is a match in either the left or right table. If there is no match, the side lacking data will contain

NULLvalues. - CROSS JOIN: Returns the Cartesian product of the two tables. Every row from the first table is combined with every row from the second table. No

ONclause is needed for this join.

In DB2, these joins are implemented as described above using the JOIN keyword along with the ON clause to specify the join condition (except in the case of CROSS JOIN where no ON clause is used). For example: SELECT * FROM table1 INNER JOIN table2 ON table1.columnA = table2.columnB

23. How do you handle security in DB2, including user authentication and authorization?

DB2 security focuses on authentication and authorization. Authentication verifies user identity, often via passwords or LDAP. Authorization determines what actions a user can perform on database objects.

DB2 employs several mechanisms:

- GRANT/REVOKE: Used to assign/remove privileges to users or groups (e.g.,

GRANT SELECT ON TABLE employee TO USER 'john'). - Roles: Define sets of privileges that can be assigned to users.

- Database Privileges: Control access to database level operations, such as creating tables.

- Table Privileges: Control actions on tables (SELECT, INSERT, UPDATE, DELETE).

- Auditing: Track database activity for security monitoring and compliance.

24. Describe the process of upgrading a DB2 database to a newer version.

Upgrading a DB2 database involves several key steps. First, assess the compatibility of your current environment (OS, hardware, applications) with the target DB2 version. Consult the official IBM documentation for upgrade paths and system requirements. Next, perform a full database backup. Install the new DB2 version in a separate location, not overwriting the existing installation. Catalog the existing database in the new DB2 instance. Use the db2ckupgrade command to check database compatibility and address any reported issues. Then, run the db2 upgrade database <dbname> command. Finally, perform thorough testing to ensure data integrity and application functionality, revert to the backup if necessary.

25. What are some of the new features in the latest version of DB2 that you find particularly useful?

Some of the features in the latest version of DB2 that I find particularly useful include enhanced JSON support and improved data skipping capabilities. The improved JSON support makes it easier to work with NoSQL-style data directly within DB2, including new functions for querying and manipulating JSON documents. This simplifies development when dealing with semi-structured data. The advanced data skipping can improve query performance by efficiently avoiding irrelevant data pages, especially beneficial for large tables with sparse data.

I also appreciate the advancements in security, particularly around data masking and encryption enhancements. These features help ensure compliance with data privacy regulations and protect sensitive information. The improved integration with cloud platforms and containerization technologies, such as Kubernetes, streamlines deployment and management of DB2 in modern cloud environments.

Intermediate IBM DB2 interview questions

1. How would you optimize a slow-running DB2 query, and what tools would you use to identify the bottleneck?

To optimize a slow-running DB2 query, I would first identify the bottleneck using tools like DB2 Performance Expert, DB2 Explain, and the DB2 event monitor. These tools help pinpoint whether the issue is due to poor index usage, inefficient query plans, or resource constraints.

Based on the bottleneck, optimization steps could involve:

- Creating or modifying indexes to improve data access.

- Rewriting the query to use more efficient SQL constructs.

- Updating statistics on the tables involved (

RUNSTATS) to help the optimizer generate better plans. - Analyzing the query execution plan (using

EXPLAIN PLAN) and identifying costly operations. - Adjusting DB2 configuration parameters related to memory allocation or buffer pools, if resource constraints are the issue.

2. Explain the difference between clustered and non-clustered indexes in DB2, and when would you use each?

Clustered indexes determine the physical order of data in a table. A table can only have one clustered index because data can only be physically sorted in one way. When you retrieve data using a clustered index, DB2 can efficiently read a range of contiguous data pages. Non-clustered indexes, on the other hand, are separate structures that store a pointer to the actual data row. A table can have multiple non-clustered indexes.

Use a clustered index when you frequently retrieve data in a specific order or need to retrieve ranges of data efficiently. This is beneficial for range queries. Use non-clustered indexes when you need to speed up queries based on columns that are not used for clustering or when you need to search for individual rows based on specific criteria without affecting the physical data order. Non-clustered indexes are particularly useful for columns frequently used in WHERE clauses.

3. Describe a situation where you would use a materialized query table (MQT) in DB2, and how it improves performance.

Imagine a data warehouse scenario where we frequently need to run complex aggregate queries on a large sales transaction table to generate daily sales reports. These queries, joining the transaction table with dimension tables like products and customers, can be slow. We could use a materialized query table (MQT) to pre-calculate and store the results of these aggregate queries. The MQT would contain the summarized sales data, like total sales per product category per day.

When a user requests the daily sales report, DB2 can rewrite the query to use the MQT instead of the base tables. This significantly improves performance because the database retrieves pre-calculated results from the MQT, rather than performing the expensive joins and aggregations on the large transaction table every time. The MQT can be periodically refreshed (e.g., nightly) to keep the report data current. The query rewrite functionality is key; the application code doesn't need to change to benefit from the MQT.

4. What are the different isolation levels in DB2, and what are the trade-offs between them in terms of concurrency and data consistency?

DB2 supports four isolation levels: Uncommitted Read (UR), Cursor Stability (CS), Read Stability (RS), and Repeatable Read (RR). These levels control the degree to which concurrent transactions are isolated from each other.

- Uncommitted Read (UR): Offers the highest concurrency but the lowest consistency. Transactions can read uncommitted changes made by other transactions, leading to dirty reads, non-repeatable reads, and phantom reads. It's useful for read-only operations where absolute data accuracy isn't critical. It avoids locks, so concurrency is very high.

- Cursor Stability (CS): Prevents dirty reads. A transaction holds a lock on the row it is currently accessing, preventing other transactions from modifying that row until the lock is released. However, non-repeatable reads and phantom reads are still possible. It is the default isolation level in DB2.

- Read Stability (RS): Prevents dirty reads and non-repeatable reads. A transaction holds a lock on all rows it has read within the transaction, preventing other transactions from updating them. Phantom reads are still possible. This improves data consistency but reduces concurrency.

- Repeatable Read (RR): Provides the highest level of consistency. A transaction holds locks on all rows it has read or modified within the transaction, preventing dirty reads, non-repeatable reads, and phantom reads. This guarantees that a transaction will see the same data throughout its execution. However, it also results in the lowest concurrency due to the extensive locking.

5. Explain the concept of 'explain plan' in DB2. How do you read and interpret it to optimize a query?

An 'explain plan' in DB2 shows the execution path the database manager will take to execute a SQL query. It details the operations (like table scans, index lookups, sorts, joins) the database will perform, the order of operations, and the estimated cost associated with each operation. You generate it using the EXPLAIN statement before running the query.

To interpret an explain plan, focus on the cost estimates, access methods, and join types. High cost estimates suggest inefficient operations. Look for full table scans where indexes might be more appropriate. Nested loop joins are often less efficient than hash or merge joins, especially for large datasets. Understanding the plan allows you to rewrite the query, create or modify indexes, or adjust database configuration parameters to improve query performance. Use db2exfmt to format the explain tables for better readability.

6. How do you handle deadlocks in DB2, and what strategies can you implement to prevent them?

DB2 handles deadlocks by detecting them and automatically rolling back one of the transactions involved, choosing a victim based on factors like transaction cost and lock wait time. The victim transaction receives a SQLCODE -911 or -913 error, signaling the rollback. To prevent deadlocks, several strategies can be employed:

- Lock Timeout: Configure a lock timeout parameter to automatically roll back transactions waiting too long for a lock.

- Consistent Locking Order: Ensure applications acquire locks in a consistent order to avoid circular dependencies.

- Short Transactions: Keep transactions short to minimize the duration locks are held.

- Avoid User Interaction Within Transactions: Prevent users from interacting with the application during transactions, as pauses can prolong lock hold times.

- Use

LOCK TABLEStatement Carefully: While it can improve performance, it can also lead to deadlocks if not used judiciously. Consider row-level locking instead when possible.

7. Describe the purpose of DB2's RUNSTATS command, and how frequently should you run it on different types of tables?

The RUNSTATS command in DB2 is used to update the database manager's statistics about the characteristics of tables and indexes. These statistics are crucial for the DB2 optimizer to choose the most efficient access paths for SQL queries. Outdated or missing statistics can lead to suboptimal query execution plans, resulting in poor performance. RUNSTATS collects information such as the number of rows in a table, the number of pages used, and the distribution of values in columns.

The frequency of running RUNSTATS depends on how frequently the data in your tables changes. For volatile tables (tables with frequent inserts, updates, and deletes), it's recommended to run RUNSTATS more often, such as daily or even hourly. For relatively static tables, running RUNSTATS weekly or monthly might be sufficient. It's also a good practice to run RUNSTATS after significant data loads or after rebuilding indexes. Automated scheduling using the DB2 Task Scheduler is often used to manage RUNSTATS execution.

8. What is the difference between online and offline backups in DB2, and what are the advantages and disadvantages of each?

Online backups (also known as hot backups) are performed while the database is active and accessible to users. Offline backups (also known as cold backups) require the database to be shut down completely before the backup process can begin.

- Online Backup Advantages: Minimal downtime, continuous availability, can be scheduled during peak hours.

- Online Backup Disadvantages: More complex setup and recovery procedures, can be slower than offline backups, may require more disk space.

- Offline Backup Advantages: Simpler setup and recovery procedures, faster backup speed, ensures data consistency as no transactions are occurring.

- Offline Backup Disadvantages: Significant downtime required, impacts user accessibility, must be scheduled during off-peak hours.

9. Explain how you would implement auditing in DB2 to track changes to sensitive data.

To implement auditing in DB2 to track changes to sensitive data, I would leverage the built-in audit facility. First, I would define an audit policy specifying the events to be audited, such as UPDATE, DELETE, and INSERT operations on the tables containing sensitive data. I would also specify which users or roles to audit. For example:

CREATE AUDIT POLICY SensitiveDataAudit POLICY CATEGORIES EXECUTE, DML, DDL STATUS BOTH;

ALTER TABLE MySchema.SensitiveTable AUDIT POLICY SensitiveDataAudit;

Next, I'd configure the audit settings to store audit records. This typically involves specifying an audit log path and enabling the audit facility. The audit data can then be extracted and analyzed to track changes, identify unauthorized access, and ensure compliance. Reports can be generated from the audit logs to satisfy compliance requirements and aid in security investigations. Remember to rotate and archive audit logs regularly to manage storage and performance.

10. Describe the process of migrating a DB2 database to a new server, including pre-migration checks and post-migration validation.

Migrating a DB2 database involves several key steps. Before migration, perform thorough pre-migration checks. This includes assessing hardware and software compatibility on the target server, verifying database consistency with db2ckdbs, backing up the existing database, and estimating the required storage space on the new server. Also, document the current database configuration, including user permissions and database parameters.

During the migration, you can use methods like backup and restore, or HADR (High Availability Disaster Recovery) takeover. After the migration, perform validation. Run integrity checks again, test application connectivity, and compare performance metrics between the old and new servers. Verify data accuracy by running sample queries and comparing results. Monitor the database for any errors or performance issues.

11. How can you monitor DB2 database performance in real-time, and what key metrics would you track?

Real-time DB2 database performance monitoring can be achieved using several tools, including the DB2 command line processor (CLP), IBM Data Studio, and third-party monitoring solutions. Key metrics to track include CPU utilization, buffer pool hit ratio, lock wait time, deadlocks, transaction rate, and disk I/O. For example, you could use the db2top command in CLP for a real-time view.

Specifically, low buffer pool hit ratios indicate inefficient memory usage requiring adjustment of buffer pool sizes. High lock wait times or frequent deadlocks point to concurrency issues needing investigation and potentially schema or query optimization. Monitoring the transaction rate allows insights into database workload and capacity planning. Regular checks on these metrics, ideally with automated alerts for threshold breaches, are essential for proactive performance management.

12. What are the different types of locks in DB2, and how do they impact concurrency?

DB2 employs several lock types to manage concurrent access to data, impacting performance. Key lock types include: Share (S) locks which allow multiple transactions to read the same data concurrently, but prevent any exclusive locks. Exclusive (X) locks are used when a transaction needs to modify data, preventing other transactions from reading or writing it. Update (U) locks which are held when a transaction intends to update a row; it's compatible with share locks but prevents other update or exclusive locks, allowing potential upgrade to an exclusive lock. Intent locks (IS, IX, SIX) indicate a transaction's intention to acquire share or exclusive locks at a finer granularity (e.g., row level within a table).

The impact on concurrency stems from the level of restrictiveness each lock imposes. Share locks maximize read concurrency. Exclusive locks minimize concurrency for the locked resource. Intent locks balance concurrency and data integrity by signaling potential lock escalations. Lock contention (where transactions wait for locks held by others) can reduce concurrency, leading to performance degradation. Choosing the correct isolation level and understanding application access patterns helps minimize lock contention and maximize throughput.

13. Explain how you would implement data partitioning in DB2 to improve query performance and manage large tables.

In DB2, data partitioning (also known as table partitioning) can significantly improve query performance and manageability of large tables. I would implement it by defining a partitioning key and specifying ranges or lists of values for each partition. This allows DB2 to split the table's data into multiple storage objects, enabling parallel processing and reducing the amount of data scanned for a query. For example, using PARTITION BY RANGE (column_name) to divide data based on date ranges or numerical intervals.

To implement partitioning, I'd first analyze query patterns to choose an appropriate partitioning key. Then, I'd create the partitioned table using the CREATE TABLE statement with the PARTITION BY clause. Finally, I'd use techniques like partition elimination, where the database only accesses relevant partitions based on the query's WHERE clause, to optimize query execution. Regular maintenance, like rolling out or archiving old partitions, would also be part of the strategy.

14. Describe a scenario where you would use stored procedures in DB2, and what are the benefits of using them?

I would use stored procedures in DB2 when needing to perform complex data validation and manipulation as part of an order processing system. Imagine an order entry system where, upon receiving an order, several checks must be performed. These checks involve validating customer information, verifying product availability, applying discounts based on customer tier, and generating order IDs. This whole process also involves multiple SQL statements.

Using a stored procedure for this scenario offers several benefits: * Improved Performance: Stored procedures are precompiled and stored on the database server, reducing network traffic and execution time compared to sending multiple SQL statements from the application. * Enhanced Security: Access to underlying tables can be restricted, and users can be granted execute permissions only on the stored procedure, preventing direct access to sensitive data. * Code Reusability: The order processing logic is encapsulated within the stored procedure and can be reused by multiple applications, promoting consistency. * Reduced Network Traffic: Instead of sending multiple SQL queries from the application to the database server, the application only needs to call the stored procedure.

For example, consider a DB2 stored procedure that validates a customer's address using a geocoding service and updates the customer's record. The application only needs to call the stored procedure with the customer ID and address. The stored procedure handles all the complex operations.

15. How would you troubleshoot a DB2 database that is experiencing high CPU utilization?

To troubleshoot high CPU utilization in a DB2 database, I would start by identifying the most CPU-intensive SQL statements. I'd use DB2's monitoring tools such as the db2top utility or the MONREPORT.DBSUMMARY and MONREPORT.PKGCACHE routines to pinpoint the queries consuming the most CPU. Analyzing the execution plans of those high-CPU queries using db2expln will help reveal areas for optimization, such as missing indexes, full table scans, or inefficient join strategies.

Next, I would examine the database configuration parameters to ensure optimal resource allocation. This includes checking buffer pool sizes, sort heap sizes, and other memory-related settings. Monitoring the system's overall resource usage, including memory, I/O, and network activity, is also crucial to identify any bottlenecks that might be indirectly contributing to high CPU. Reviewing the DB2 diagnostic logs for any errors or warnings can also provide valuable clues.

16. Explain the purpose of the DB2 catalog tables, and how can you use them to gather information about the database?

The DB2 catalog tables (also known as system catalog views) store metadata about the database itself. They contain information about tables, views, indexes, columns, users, privileges, data types, and other database objects. The DB2 engine uses these tables to manage and optimize database operations. Essentially, the catalog provides a dictionary or directory of all the objects within the database.

You can query these catalog tables using standard SQL SELECT statements to gather information about the database structure and properties. For example:

- To list all tables:

SELECT TABNAME FROM SYSIBM.SYSTABLES WHERE TYPE = 'T' - To get column information for a specific table:

SELECT NAME, COLTYPE, LENGTH FROM SYSIBM.SYSCOLUMNS WHERE TBNAME = 'your_table_name' - To find indexes on a table:

SELECT INDEXNAME FROM SYSIBM.SYSINDEXES WHERE TBNAME = 'your_table_name'

17. How can you implement row-level security in DB2 to restrict access to specific rows based on user roles?

Row-level security in DB2 can be implemented using row permissions and column masks. First, define roles that represent different user groups. Then, create row permissions that specify which rows are visible to each role based on a defined condition. For example:

CREATE PERMISSION OrdersPermission ON Orders FOR ROWS

WHERE CURRENT_ROLE = 'Manager' OR CustomerID = SESSION_USER;

ALTER TABLE Orders ACTIVATE ROW ACCESS CONTROL;

This example grants access to all rows for users with the 'Manager' role or only the rows with a 'CustomerID' matching the current user's session ID. Remember to activate row access control for the table after creating the permission. You can create multiple permissions for different roles to implement a fine-grained security model. Column masks can similarly restrict visibility of specific columns.

18. Describe the process of recovering a DB2 database after a media failure.

Recovering a DB2 database after a media failure involves restoring the database from a backup and then applying transaction logs to bring the database to a consistent state. The process generally includes:

- Restore the database: Use the

RESTORE DATABASEcommand to restore from the most recent full or incremental backup. Ensure the target location has sufficient space. - Rollforward recovery: Execute the

ROLLFORWARD DATABASEcommand to apply transaction logs from the time of the backup until a specific point in time, the end of the logs, or a consistent point. You can useROLLFORWARD DATABASE TO END OF LOGSorROLLFORWARD DATABASE TO a specific timestamp. - Complete the recovery: Once rollforward is complete, issue the

db2 rollforward database <dbname> stopcommand. This will bring the database online and make it available for use.

19. Explain the purpose of the DB2 governor, and how can you use it to prevent runaway queries from impacting database performance?

The DB2 governor is a mechanism to control and limit the resources consumed by individual SQL queries. Its primary purpose is to prevent 'runaway queries' – those that take an excessively long time or consume disproportionate resources (CPU, memory, I/O) – from negatively impacting overall database performance and stability. By setting up rules and thresholds, the governor automatically terminates or prevents queries from executing if they exceed pre-defined limits.

You can use the DB2 governor to prevent runaway queries by defining governor rules based on several criteria: Estimated query cost, elapsed time, CPU usage, number of rows read/written, and temporary table space usage. When a query violates these thresholds, the governor can take actions such as: Preventing the query from running, terminating the query, or reducing the query's priority. For example, you might set a rule to terminate any query with an estimated cost exceeding a certain threshold or that has been running for more than a specific amount of time. This helps maintain overall system performance and prevent resource exhaustion caused by poorly written or overly complex queries.

20. How would you implement a rolling upgrade of a DB2 database to a newer version, minimizing downtime?

To implement a rolling upgrade of a DB2 database to minimize downtime, I'd use the online upgrade procedure. This involves upgrading instance members/partitions one at a time while the database remains available. The process typically involves these steps:

- Backup the database before beginning. Prepare the new DB2 version on all servers. Initially upgrade only one member (e.g., the one with fewer connections) using the

db2iupgradecommand. After upgrading the initial member, bring the database online in restricted access mode (or quiesce user connections) and perform necessary data migrations. Gradually upgrade the remaining members/partitions. Reconnect user connections as members become available. Regularly monitor the upgrade progress and system health. Finally, after upgrading all members, perform any final post-upgrade tasks and fully enable all database features. During the upgrade process, implement a database proxy or load balancer to direct connections to upgraded members and to reroute traffic away from members being upgraded.

Advanced IBM DB2 interview questions

1. How do you optimize a complex DB2 query involving multiple joins and subqueries for performance?

To optimize a complex DB2 query with multiple joins and subqueries, focus on query rewrite, index usage, and statistics. Start by examining the query plan using EXPLAIN to identify bottlenecks, such as full table scans or inefficient join orders. Rewrite the query to use joins instead of subqueries where possible, and ensure appropriate indexes exist on join columns and WHERE clause columns. Use RUNSTATS to keep table and index statistics up-to-date, allowing the DB2 optimizer to make informed decisions.

Specifically, consider these points:

- Indexing: Verify indexes exist on join columns and columns used in

WHEREclauses. - Query Rewrite: Convert subqueries to joins where feasible.

- Join Order: Force a specific join order using hints (use judiciously).

- Statistics: Ensure statistics are current using

RUNSTATS. - Materialized Query Tables (MQTs): Consider using MQTs for frequently accessed pre-aggregated data.

EXPLAIN: UseEXPLAINto understand the query execution plan and identify areas for improvement.- Data Types: ensure matching data types for comparison predicates and join conditions.

- Avoid functions in WHERE clauses: avoid using functions on columns in

WHEREclauses, as this can prevent index usage.

2. Explain the concept of DB2's autonomic computing features and how they contribute to database administration.

DB2's autonomic computing features aim to reduce the need for manual database administration tasks. These features allow the database to self-manage certain aspects of its operation, improving efficiency and reducing administrative overhead.

Key autonomic features in DB2 include:

- Self-tuning memory: Automatically adjusts memory allocation for different database components based on workload demands.

- Automatic storage management: Simplifies storage administration by automatically managing storage paths and data placement.

- Self-maintaining utilities: Features like automatic backup, recovery, and reorganization which automatically schedule and execute these tasks based on database activity and health. Also includes automatic statistics collection.

- Health monitoring: Continuously monitors the database for potential problems and alerts administrators or takes corrective actions.

These features contribute to database administration by automating routine tasks, optimizing resource utilization, and proactively addressing potential issues, freeing up administrators to focus on more strategic initiatives.

3. Describe the different types of locking mechanisms available in DB2 and their impact on concurrency.

DB2 offers several locking mechanisms to manage concurrent access to data. These include row-level locking, which locks individual rows, maximizing concurrency but increasing overhead. Table-level locking locks the entire table, offering simplicity but severely limiting concurrency. Page-level locking locks data pages (a fixed-size block of data), balancing concurrency and overhead. Finally, lock escalation automatically converts fine-grained locks (e.g., row-level) to coarser-grained locks (e.g., table-level) when a transaction acquires too many locks, reducing overhead but potentially decreasing concurrency.

The choice of locking mechanism impacts concurrency. Fine-grained locking (row, page) allows multiple transactions to access different parts of the same table simultaneously, increasing concurrency. However, it also increases the overhead of managing locks. Coarse-grained locking (table) reduces overhead but limits concurrency as only one transaction can access the table at a time. Understanding the trade-offs is crucial for optimizing database performance. DB2 automatically manages much of this, but understanding the underlying principles helps with performance tuning.

4. How would you implement a data partitioning strategy in DB2 to improve query performance and manageability?

In DB2, data partitioning can significantly improve query performance and manageability. I would use the CREATE TABLE statement with the PARTITION BY clause to define the partitioning strategy. For example, range partitioning is common: CREATE TABLE my_table (column1 INT, column2 DATE) PARTITION BY RANGE (column2) (PARTITION STARTING '2023-01-01' ENDING '2023-06-30', PARTITION STARTING '2023-07-01' ENDING '2023-12-31');. This divides the table based on date ranges.

For manageability, I would regularly monitor partition sizes and consider reorganizing or redistributing data if partitions become unbalanced. Additionally, utilizing partition-level operations such as DETACH and ATTACH can simplify maintenance tasks like archiving older data.

5. What are the considerations when designing a high availability and disaster recovery solution for a DB2 database?

Designing a high availability (HA) and disaster recovery (DR) solution for a DB2 database involves several key considerations. For HA, focus on minimizing downtime within the primary data center. This typically involves techniques like: DB2 HADR (High Availability Disaster Recovery) for near real-time replication to a standby database, clustering to automatically failover to a secondary server in case of primary server failure, and online maintenance to perform tasks like index rebuilds without interrupting service. Regularly monitor database health and performance.

For DR, the goal is to recover the database in a separate geographical location in case of a major disaster. Considerations include: Implementing HADR to a remote site, regular backups (both online and offline) stored offsite, thorough testing of the DR plan to ensure it works as expected, and clearly defined recovery point objective (RPO) and recovery time objective (RTO) to guide the recovery process. Also, consider using IBM GDPS (Geographically Dispersed Parallel Sysplex) for automatic failover to a remote site.

6. Explain the role of the DB2 catalog and how it's used by the database manager.

The DB2 catalog is a set of system tables that contain metadata about all the objects in a DB2 database system. It describes the structure of the database, including tables, views, indexes, packages, users, privileges, and other database objects. Essentially, it's a dictionary or repository of information about the database itself.

The DB2 database manager uses the catalog extensively. When a SQL statement is executed, the database manager first consults the catalog to verify the existence and properties of the objects referenced in the statement (e.g., table names, column names). It also uses the catalog for authorization checking (to determine if the user has the required privileges) and for query optimization (to choose the best access path based on index information and table statistics stored in the catalog). Changes to the database structure are reflected in updates to the DB2 catalog tables. The catalog is crucial for the database manager's correct functioning.

7. How can you monitor and troubleshoot performance bottlenecks in a DB2 environment?

Monitoring and troubleshooting DB2 performance bottlenecks involves several key techniques. We can use DB2's built-in tools like the db2top utility for real-time monitoring of database activity, CPU usage, and buffer pool performance. The db2pd command provides detailed information about database manager processes, memory usage, and lock contention. Additionally, DB2's event monitors can be configured to capture specific performance-related events for later analysis. The health monitor automatically tracks key metrics and provides alerts when thresholds are breached. These alerts should be investigated.

Troubleshooting steps include identifying slow-running queries using query snapshots and the EXPLAIN statement to understand query access paths. Check buffer pool hit ratios, index usage, and I/O wait times. Analyze lock waits and deadlocks using lock event monitors. Consider using the AUTOCONFIGURE command to automatically adjust database configuration parameters. Regular database maintenance, including running RUNSTATS on tables and indexes, is crucial for optimal performance.

8. Describe the steps involved in upgrading a DB2 database to a newer version, including pre- and post-upgrade tasks.

Upgrading a DB2 database involves several key steps. Before starting, a thorough assessment is critical. This includes reviewing the release notes of the target version to identify deprecated features or compatibility issues, running the DB2 pre-upgrade advisor (db2ckupgrade) to highlight potential problems, and backing up the existing database. You should also freeze application changes to prevent data inconsistencies during the upgrade.

The upgrade process itself generally involves stopping the database instance, installing the new DB2 version, upgrading the instance, and then upgrading the databases. The db2iupgrade command upgrades the instance. The db2 upgrade database command upgrades each database. Post-upgrade tasks include running db2ckupgrade again to confirm a successful upgrade and addressing any issues, updating application connection strings if needed, rebinding packages, and thoroughly testing all applications to ensure they function correctly with the upgraded database. Finally, update statistics on the upgraded database.

9. What are the advantages and disadvantages of using DB2 BLU Acceleration for analytical workloads?

DB2 BLU Acceleration offers significant advantages for analytical workloads. Its columnar storage and in-memory processing drastically improve query performance, especially for complex queries involving aggregations and large datasets. Data compression minimizes storage footprint and further speeds up I/O operations. Adaptive compression tailors the compression algorithm to the data type, maximizing compression ratios.

However, BLU Acceleration also has disadvantages. It is optimized for read-heavy workloads, and write performance can be slower compared to traditional row-based storage. The initial data load can be resource-intensive. BLU Acceleration also has limitations regarding the types of indexes it supports. As such, it is most applicable to workloads where analytical queries are far more frequent than write operations and requires careful planning and consideration of data characteristics.

10. Explain the different isolation levels in DB2 and how they affect data consistency and concurrency.

DB2 supports four isolation levels: Uncommitted Read (UR), Cursor Stability (CS), Read Stability (RS), and Repeatable Read (RR). These levels control the degree to which concurrent transactions are isolated from each other, impacting data consistency and concurrency.

- Uncommitted Read (UR): Allows a transaction to read uncommitted changes made by other transactions. Offers the highest concurrency but lowest data consistency as "dirty reads" are possible. That means it reads data that might later be rolled back.

- Cursor Stability (CS): Prevents a transaction from reading uncommitted changes but only releases shared locks row by row. It avoids dirty reads. However, non-repeatable reads are possible, meaning the same query might return different results if another transaction modifies and commits the data in between.

- Read Stability (RS): Ensures that a transaction reads only committed data and prevents non-repeatable reads within the same transaction by holding shared locks until the end of the transaction. Phantom reads can still occur, where new rows matching the query criteria are inserted by other transactions.

- Repeatable Read (RR): Provides the highest level of isolation. It prevents dirty reads, non-repeatable reads, and phantom reads. Transactions hold locks on all rows read until the end of the transaction. This level offers the highest data consistency but lowest concurrency.

11. How do you implement auditing in DB2 to track data access and modifications for security and compliance?

DB2 provides a built-in auditing facility to track data access and modifications. To implement auditing, you'll typically use the CREATE AUDIT POLICY and AUDIT statements. CREATE AUDIT POLICY defines what events to audit (e.g., EXECUTE, SELECT, UPDATE) and where to store the audit records (e.g., a specific table). The AUDIT statement then associates the policy with specific database objects like tables, views, or even user actions. For example:

CREATE AUDIT POLICY my_audit_policy CATEGORIES EXECUTE, SELECT, UPDATE STATUS BOTH;

AUDIT TABLE my_table USING POLICY my_audit_policy;

After enabling auditing, you can query the audit tables to review data access and modifications. Regularly review and archive audit logs to comply with security and compliance requirements. You can use FLUSH EVENT MONITOR to force write of the current audit records to ensure integrity. Finally, consider implementing role based access control (RBAC) in conjunction with audit policies for better overall security.

12. Describe how you would handle a situation where a DB2 database is experiencing severe performance degradation due to locking contention.

To address severe DB2 performance degradation due to locking contention, my approach would involve several steps. First, I'd monitor the database using tools like DB2 Performance Expert or built-in monitoring utilities to identify the specific locks causing the contention, the tables/indexes involved, and the applications or users holding or waiting on those locks. I'd analyze the lock wait times and the number of applications affected.

Next, I would attempt to alleviate the immediate pressure by addressing the most significant contention points. This could involve killing idle or long-running transactions holding locks (as a last resort), adjusting application logic to commit more frequently, optimizing SQL queries to reduce lock duration (e.g., using WITH UR if appropriate and acceptable), or tuning DB2 parameters related to locking (e.g., lock timeout values, although this requires careful consideration). Furthermore, I would analyze the application code to identify and correct any coding practices contributing to excessive locking, such as holding locks for longer than necessary or accessing tables in a non-optimal order. Creating indexes for common queries can also prevent full table locks. Implementing row-level locking can also be a good strategy. Finally, I would investigate whether the LOCK TABLE command is being used excessively by the application.

13. What are the key differences between DB2 on-premises and DB2 on Cloud, and when would you choose one over the other?

DB2 on-premises refers to the traditional deployment of DB2 on your own hardware and infrastructure, offering full control over the environment. DB2 on Cloud, on the other hand, is a DB2 deployment on a cloud provider's infrastructure (like IBM Cloud, AWS, or Azure), managed either by you or the cloud provider, offering scalability and reduced operational overhead. The primary difference lies in infrastructure management: you handle it all on-premises, while the cloud provider handles much of it in DB2 on Cloud.

Choose DB2 on-premises when you need maximum control over security, compliance, and hardware configurations, especially if you have strict regulatory requirements or existing infrastructure investments. Opt for DB2 on Cloud when you prioritize scalability, faster deployment, reduced IT burden, and cost-effectiveness, allowing you to focus on application development and data analysis rather than database administration. Cost modelling also plays a crucial role in deciding where to deploy.

14. How can you use DB2's explain facility to analyze query execution plans and identify optimization opportunities?

DB2's explain facility allows you to analyze query execution plans, helping identify optimization opportunities. First, enable explain by setting the CURRENT EXPLAIN MODE special register to EXPLAIN. Then, execute your SQL query. DB2 will populate explain tables with information about the query execution plan, such as access paths, join methods, and estimated costs, without actually executing the query.

To analyze the plan, use the db2exfmt command or query the explain tables directly. Look for high estimated costs, full table scans where indexes could be used, inefficient join methods (e.g., nested loop joins on large tables), and missing or ineffective indexes. Based on the analysis, you can optimize the query by creating or modifying indexes, rewriting the query, updating statistics on tables and indexes, or adjusting DB2 configuration parameters. db2expln command shows the query execution plan in a hierarchical format. db2advis command can be used to get index recommendations.

15. Describe the process of creating and managing user-defined functions (UDFs) in DB2.

Creating and managing UDFs in DB2 involves defining the function's logic (often in SQL, external languages like Java or C), registering the function with DB2, and then managing its lifecycle. The basic steps include:

- Define the function logic: This involves writing the code that the UDF will execute. For SQL UDFs, this is SQL code. For external UDFs, it's code in the specified language (e.g., Java). For example, a SQL UDF to calculate the square of a number would look like:

CREATE FUNCTION SQUARE (IN num INTEGER) RETURNS INTEGER BEGIN ATOMIC RETURN num * num; END - Register the function: Use the

CREATE FUNCTIONstatement to register the UDF with DB2. Specify the function name, input parameters, return type, language, and the path to the external routine (if applicable). The SQL example above demonstrates registration. - Manage the UDF: You can alter UDFs using the

ALTER FUNCTIONstatement to modify their properties (e.g., parameter list, external routine path). Use theDROP FUNCTIONstatement to remove a UDF from the database. Also, managing security, permissions and dependencies.

16. What are the security considerations when designing and implementing a DB2 database application?

When designing a DB2 database application, security should be a primary concern. Some key considerations include: Authentication and Authorization: Ensure robust user authentication mechanisms and implement granular authorization controls using roles and privileges to restrict access to sensitive data. Data Encryption: Encrypt sensitive data both in transit (using TLS/SSL) and at rest (using DB2's built-in encryption features) to protect against unauthorized access. Auditing: Enable auditing to track user activity and database changes, providing a record for security analysis and compliance. Network Security: Secure the network connection between the application and the database server using firewalls and other network security measures. Input Validation: Implement thorough input validation to prevent SQL injection attacks. Use parameterized queries or stored procedures to sanitize user input.

Regular security assessments and penetration testing should be performed to identify and address potential vulnerabilities. Keep the DB2 server and related software patched with the latest security updates. Finally, properly handle database connection strings and credentials - avoid hardcoding them in the application and use secure storage mechanisms.

17. How do you back up and restore a DB2 database, and what are the different types of backup options available?

To back up a DB2 database, you use the BACKUP DATABASE command. For example: BACKUP DATABASE <dbname> TO <location>. To restore, you use the RESTORE DATABASE command: RESTORE DATABASE <dbname> FROM <location>. After restoring, you typically need to roll forward the logs to a consistent point using ROLLFORWARD DATABASE.

Different backup types include: Full backups (backs up the entire database), Incremental backups (backs up changes since the last full backup), and Delta backups (backs up changes since the last any backup). You can also perform online backups while the database is running, or offline backups that require exclusive access.

18. Explain how you would use DB2's workload management (WLM) features to prioritize and manage database resources.

DB2's Workload Management (WLM) allows prioritizing database resources based on workload characteristics. I would define workloads based on application type (e.g., OLTP, reporting), user group, or connection attributes. Each workload is then assigned to a service class, which represents a priority level. Higher priority service classes receive preferential access to CPU, memory, and I/O resources. For example, I could create a 'critical_oltp' service class with high priority for transaction processing and a 'reporting' service class with lower priority to prevent reporting queries from impacting transaction performance. This ensures critical applications receive necessary resources even under heavy load.

To configure WLM, I would use DB2 commands or the DB2 Control Center. This involves defining workloads, service classes, and assigning them appropriately. CREATE WORKLOAD, CREATE SERVICE CLASS, and ALTER WORKLOAD are crucial commands. Regular monitoring of WLM performance metrics using tools like db2top is important to fine-tune configurations and ensure optimal resource allocation and efficient resource use.

19. What are the challenges and best practices for migrating data from other database systems to DB2?

Migrating data to DB2 presents challenges such as schema differences, data type incompatibilities, character set variations, and potential data loss or corruption during the transfer. Performance issues can arise from inefficient extraction, transformation, and loading (ETL) processes. Ensuring data integrity and security throughout the migration is crucial. Best practices include thorough planning and assessment of source and target databases. Develop a detailed migration plan including schema mapping, data transformation rules, and validation procedures. Perform a pilot migration with a subset of data to identify and resolve potential issues before the full migration. Use appropriate ETL tools and techniques for data extraction, transformation, and loading, and implement robust error handling and auditing mechanisms. Finally, perform comprehensive data validation and reconciliation after the migration to ensure data accuracy and completeness.

Specific considerations include: Character Set Conversion: Ensuring proper conversion to avoid data corruption. Data type Mapping: Choosing the most appropriate DB2 data types for source data. Lob Data: Efficiently handling large objects to avoid performance bottlenecks. Security: Apply appropriate security settings after migration. Use appropriate tools such as db2move, LOAD, or IMPORT commands based on the data volume and complexity.

20. How does DB2 handle data compression, and what are the benefits of using compression?

DB2 supports data compression at the table and index level. It uses various compression techniques, including row compression and page compression. Row compression identifies and replaces repeating patterns within a row. Page compression identifies repeating values across multiple rows on a page and stores them in a symbol table. This reduces the amount of storage space required.

The benefits of using compression include reduced storage costs, improved I/O performance because less data is read and written, and potentially improved query performance as more data can fit in memory. Furthermore, compression can reduce network bandwidth consumption when transferring data.

21. Describe the process of tuning DB2 memory parameters to optimize database performance.

Tuning DB2 memory parameters involves adjusting settings to efficiently use available memory for caching data and executing queries. Key parameters include database_memory, which controls the overall memory allocated to the database, and bufferpool size, which determines the amount of memory used for caching data pages. To optimize, start by monitoring bufferpool hit ratios using DB2 monitoring tools and snapshots. If the hit ratio is low, increase bufferpool size. Similarly, monitor the overall database memory usage and adjust database_memory to avoid excessive paging or swapping by the operating system. Automatic memory tuning (STMM) can also be enabled to dynamically adjust memory parameters based on workload.

22. What are the different types of indexes in DB2, and how do you choose the appropriate index type for a given query?

DB2 supports several index types, primarily focusing on performance optimization: B-tree indexes are the most common and suitable for equality and range queries. They provide balanced search trees for efficient data retrieval. Index on Expression lets you create an index based on the result of an expression, allowing for optimized querying based on transformed data.

Choosing the right index depends on the query patterns. For equality lookups (e.g., WHERE column = value), a standard B-tree index on that column is ideal. For range queries (WHERE column BETWEEN value1 AND value2), B-tree indexes also work well. If your queries involve functions or calculations, consider Index on Expression to pre-compute the results and index the computed value.

23. Explain the concept of DB2 pureXML and how it can be used to store and query XML data.

DB2 pureXML is a feature that allows you to natively store and manage XML data within a DB2 database. Instead of storing XML as CLOBs or breaking it down into relational tables, pureXML stores the XML data in its hierarchical structure. This provides several advantages for querying and manipulating XML.

With pureXML, you can use SQL/XML queries to directly access elements and attributes within the XML documents. This avoids the complexities of parsing and transforming XML data outside the database. You can use XQuery expressions directly in SQL statements. For example, SELECT XMLQUERY('//book/title' PASSING doc) FROM books. DB2 also provides indexes on XML data to improve query performance.

24. How do you monitor and manage DB2 HADR (High Availability Disaster Recovery) environments?

Monitoring and managing DB2 HADR involves several key aspects. I would regularly monitor HADR status using command-line tools such as db2pd -hadr -db <dbname> to check the connection state, role (primary/standby), and log shipping status. Key metrics include log gap, connection latency, and overall synchronization health. I'd also configure alerts based on these metrics to proactively address potential issues. For example, if the log gap exceeds a threshold, it might indicate network problems or slow log shipping, prompting investigation.

Management tasks include performing planned failovers using db2 takeover, ensuring seamless switchover to the standby database with minimal downtime. After a failover, I verify the new primary database is functioning correctly and that the old primary is now the standby. I'd also schedule regular HADR testing, like forced failovers, to validate the recovery process and identify potential weaknesses in the configuration. Furthermore, log archiving and retention policies are reviewed to ensure data recoverability in case of a disaster.

Expert IBM DB2 interview questions

1. Explain the concept of 'dirty reads' in DB2 and how to prevent them. Can you illustrate with an example?

A dirty read occurs when a transaction reads data that has been modified by another transaction but not yet committed. If the modifying transaction rolls back, the reading transaction will have read incorrect data. To prevent dirty reads in DB2, you should use appropriate isolation levels. The READ UNCOMMITTED isolation level allows dirty reads. READ COMMITTED, REPEATABLE READ, and SERIALIZABLE do not. For example, setting the isolation level to READ COMMITTED avoids dirty reads, as it only allows a transaction to read data that has been committed. You can set isolation level via the WITH RR clause in SQL.

2. What are the different types of locking mechanisms available in DB2, and when would you choose one over the other?

DB2 offers several locking mechanisms to manage concurrent access to data:

- Share (S) locks: Allow multiple transactions to read the same data concurrently.

- Exclusive (X) locks: Only one transaction can hold an exclusive lock on a resource, preventing other transactions from reading or writing.

- Update (U) locks: A 'soft' exclusive lock. It allows reading but signals intent to update. Can be converted to an X lock. Useful to avoid deadlocks when reading for update.

- Intent locks (IS, IX, SIX): Indicate a transaction's intention to acquire S or X locks on finer-grained resources (e.g., rows within a table). Prevents incompatible locks at higher levels (e.g., table-level).

The choice depends on the operation and concurrency requirements. S locks are suitable for read-only operations needing high concurrency. X locks are necessary for write operations ensuring data integrity. U locks are used for read-modify-write operations. Intent locks improve concurrency by allowing multiple transactions to access different parts of the same table simultaneously without blocking each other until a lock escalation happens.

3. Describe the DB2 RUNSTATS command and its importance in query optimization. What are some best practices for using it?

The RUNSTATS command in DB2 collects statistics about the data in tables and indexes. This information is crucial for the DB2 optimizer to choose the most efficient access path for queries. Without up-to-date statistics, the optimizer might make suboptimal decisions, leading to poor query performance. These statistics include cardinality (number of rows), distribution of data values, and size of tables and indexes. RUNSTATS helps to ensure the optimizer has an accurate picture of the data.

Best practices include running RUNSTATS after significant data changes (inserts, updates, deletes), focusing on tables frequently accessed by performance-critical queries, using sampling options to balance accuracy and performance (e.g., RUNSTATS ON TABLE table_name WITH DISTRIBUTION ON COLUMNS (column1, column2) AND INDEXES ALL), and scheduling RUNSTATS jobs during off-peak hours to minimize impact on system performance.

4. Explain the difference between clustered and non-clustered indexes in DB2. How does each impact query performance?

Clustered indexes physically sort the data in a table based on the index key. There can only be one clustered index per table because the data can only be physically sorted in one way. This makes retrieval of rows in the index order very fast. Non-clustered indexes, on the other hand, are separate structures that store the index key and a pointer to the actual data row. A table can have multiple non-clustered indexes.