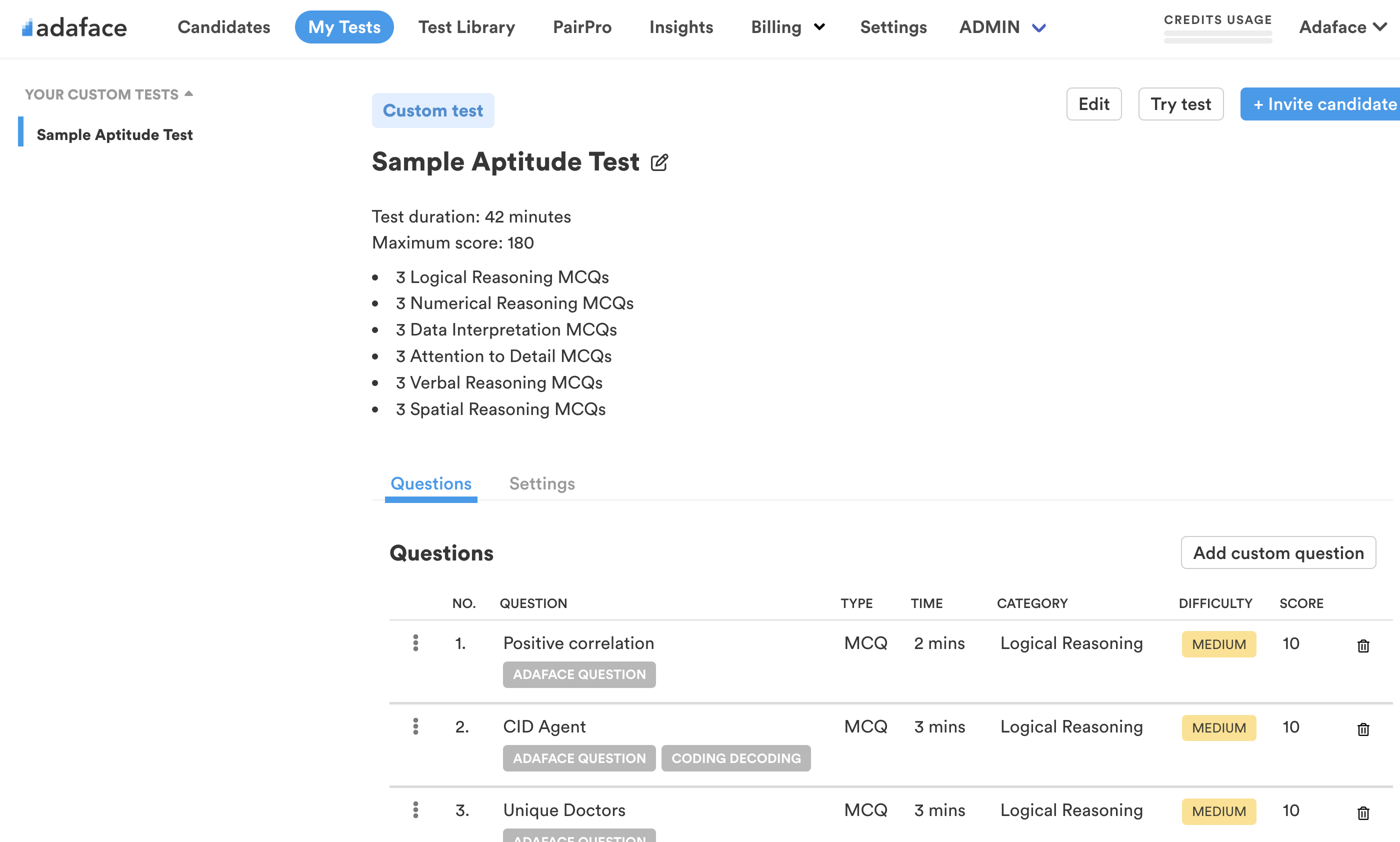

Incredibly accurate screening tests

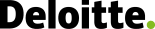

Evaluate coding, aptitude, psychometric and 500+ skills using conversational assessments.

Screen candidates for all your roles with the Adaface online assessment platform


Screen candidates for all your roles with our ready-to-use tests.

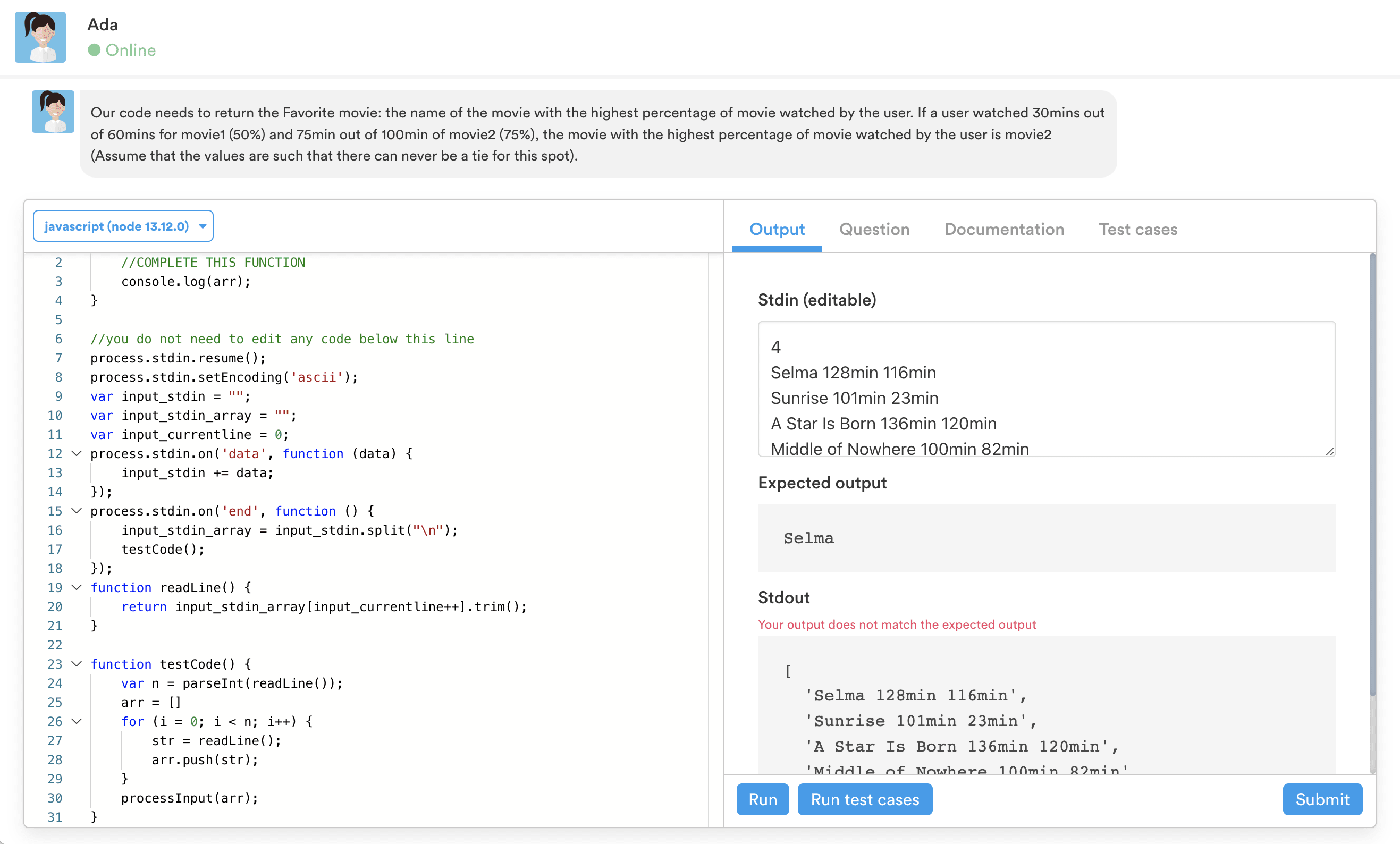

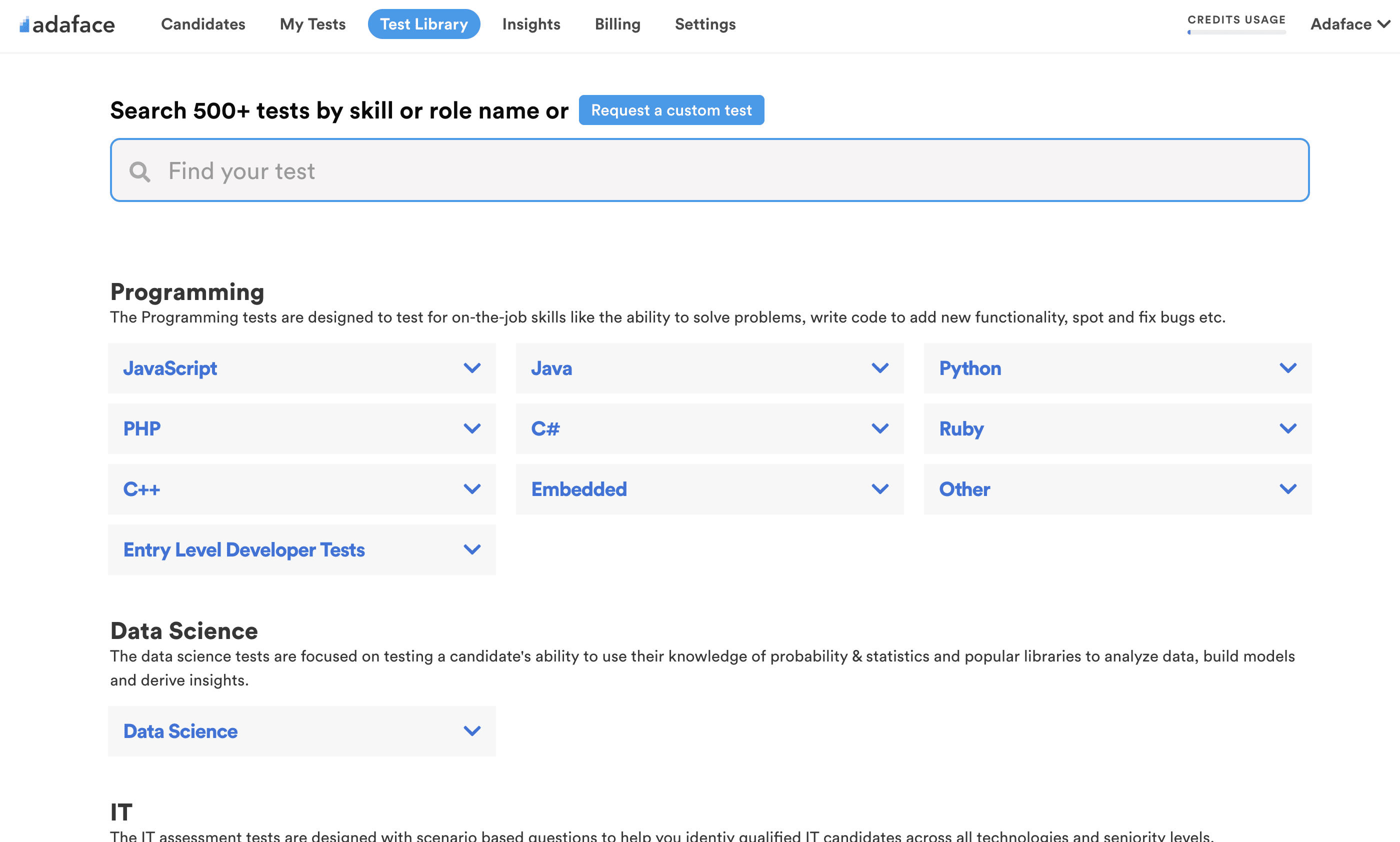

Our friendly chatbot guides your candidates through the assessment.

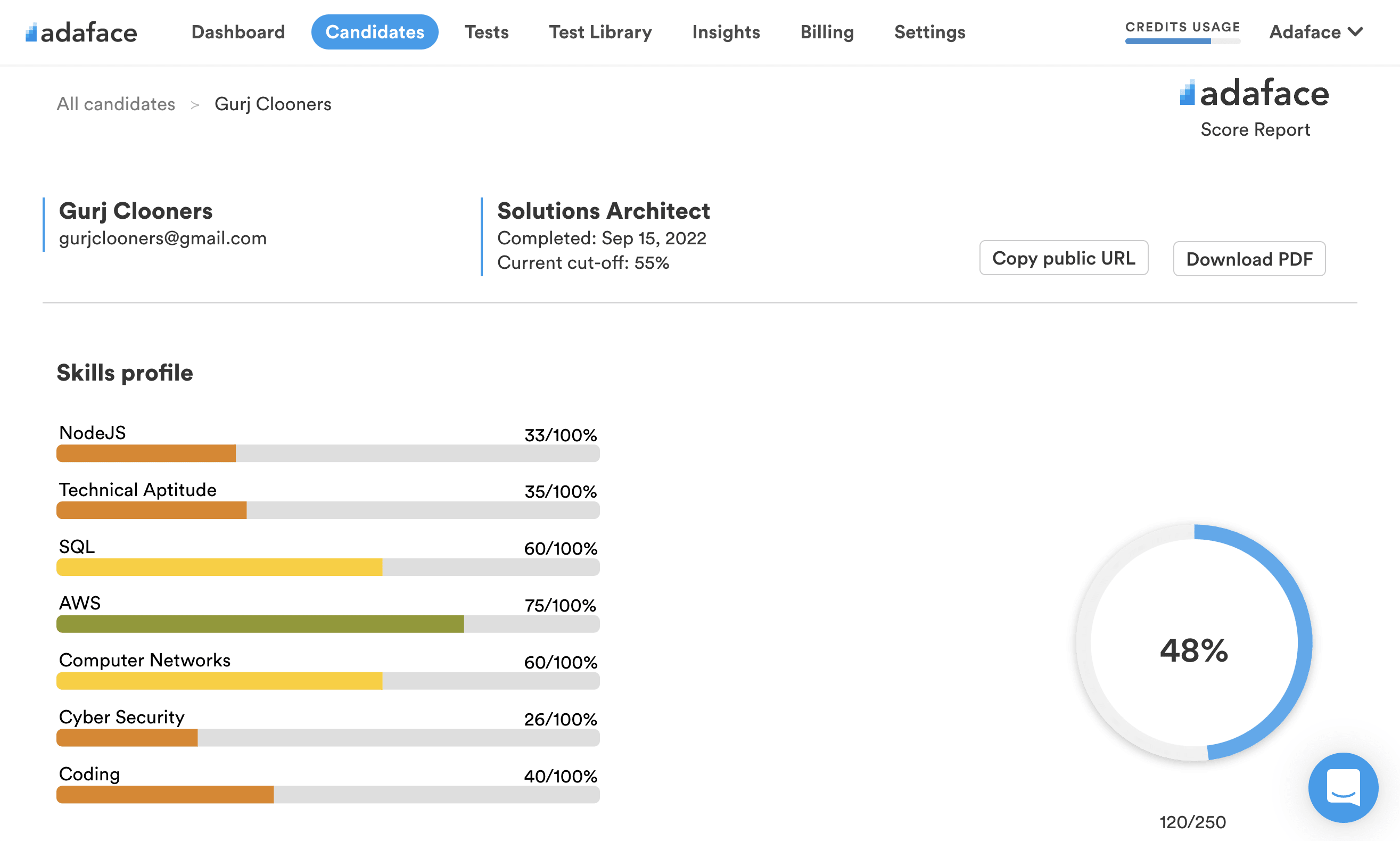

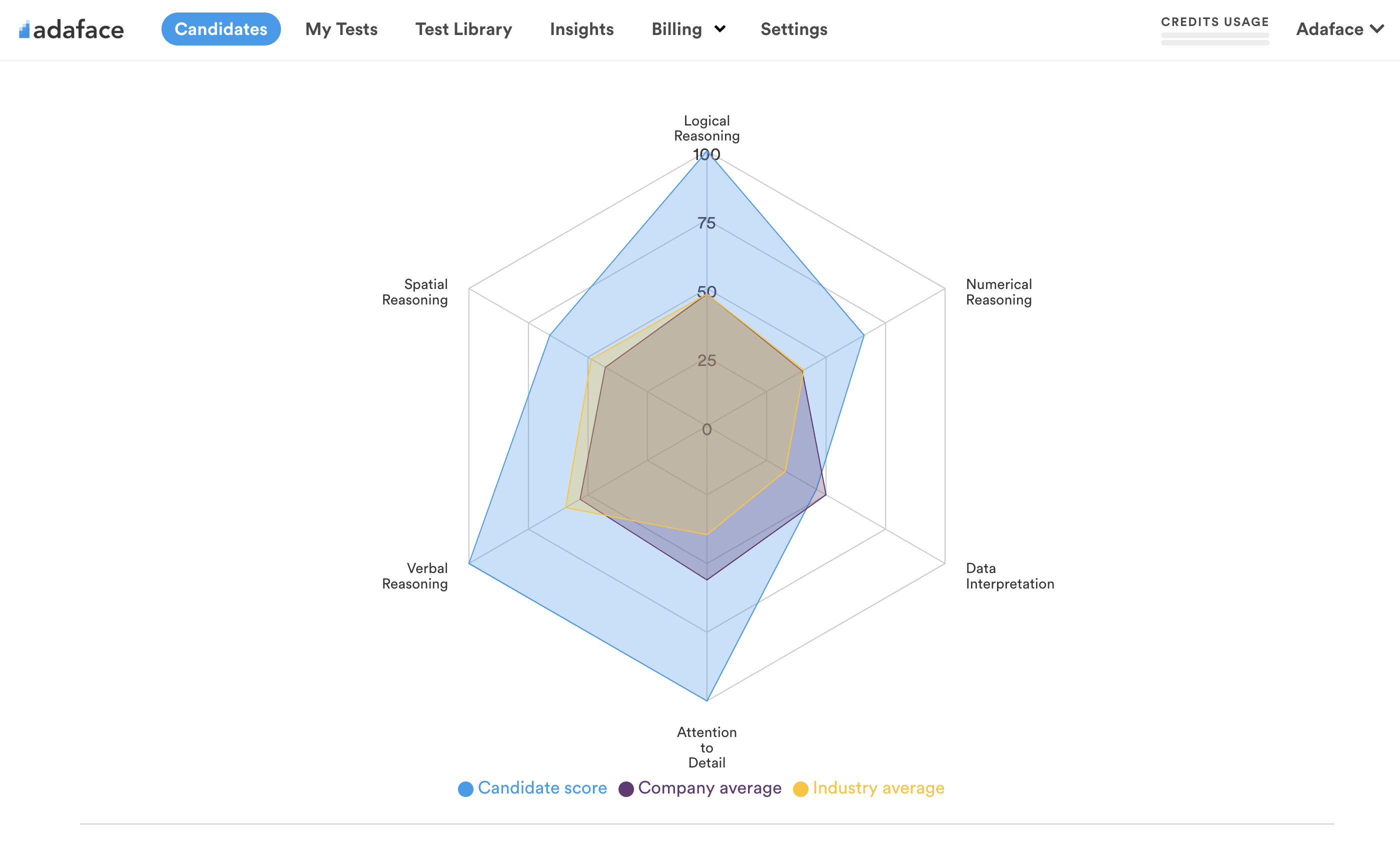

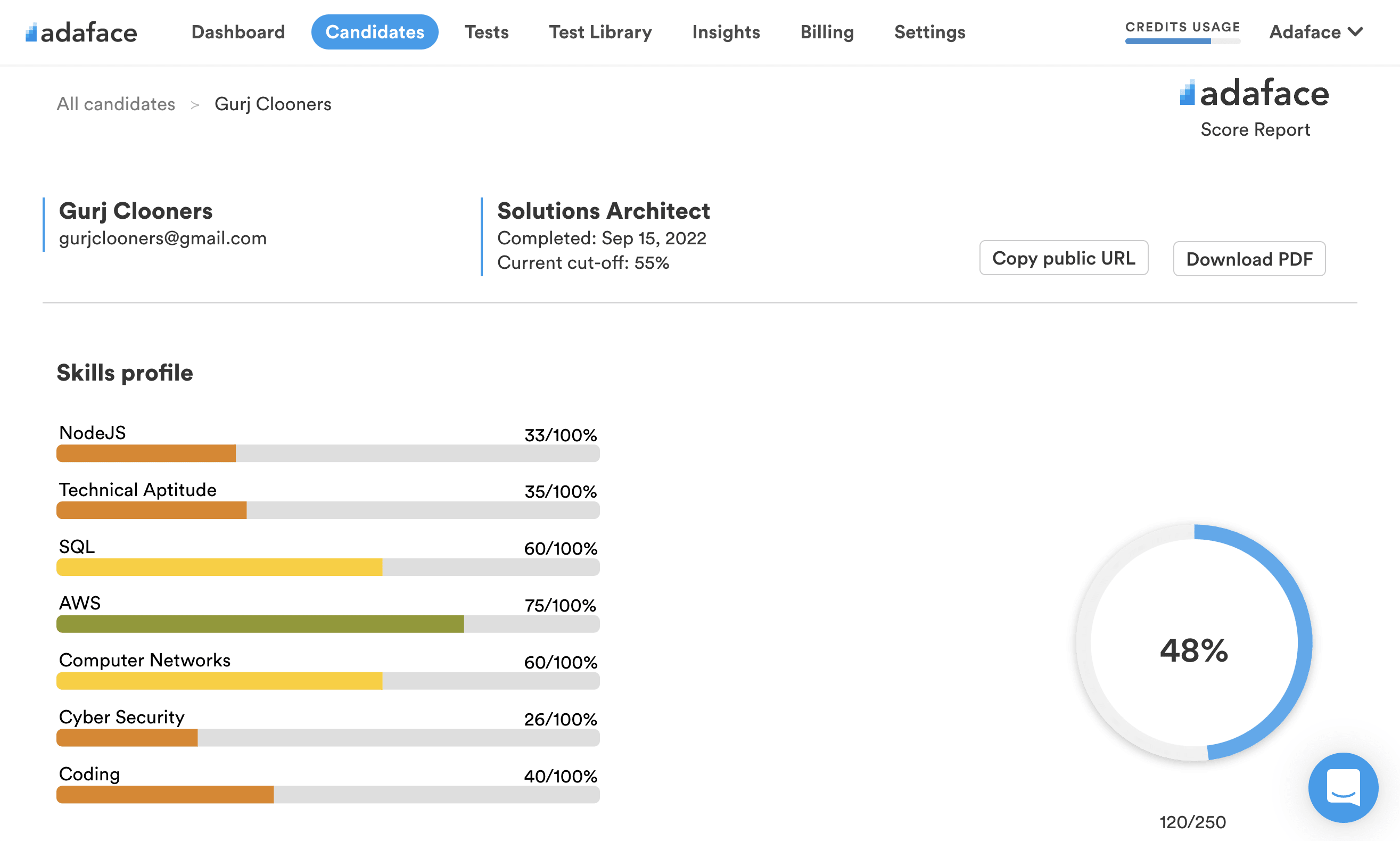

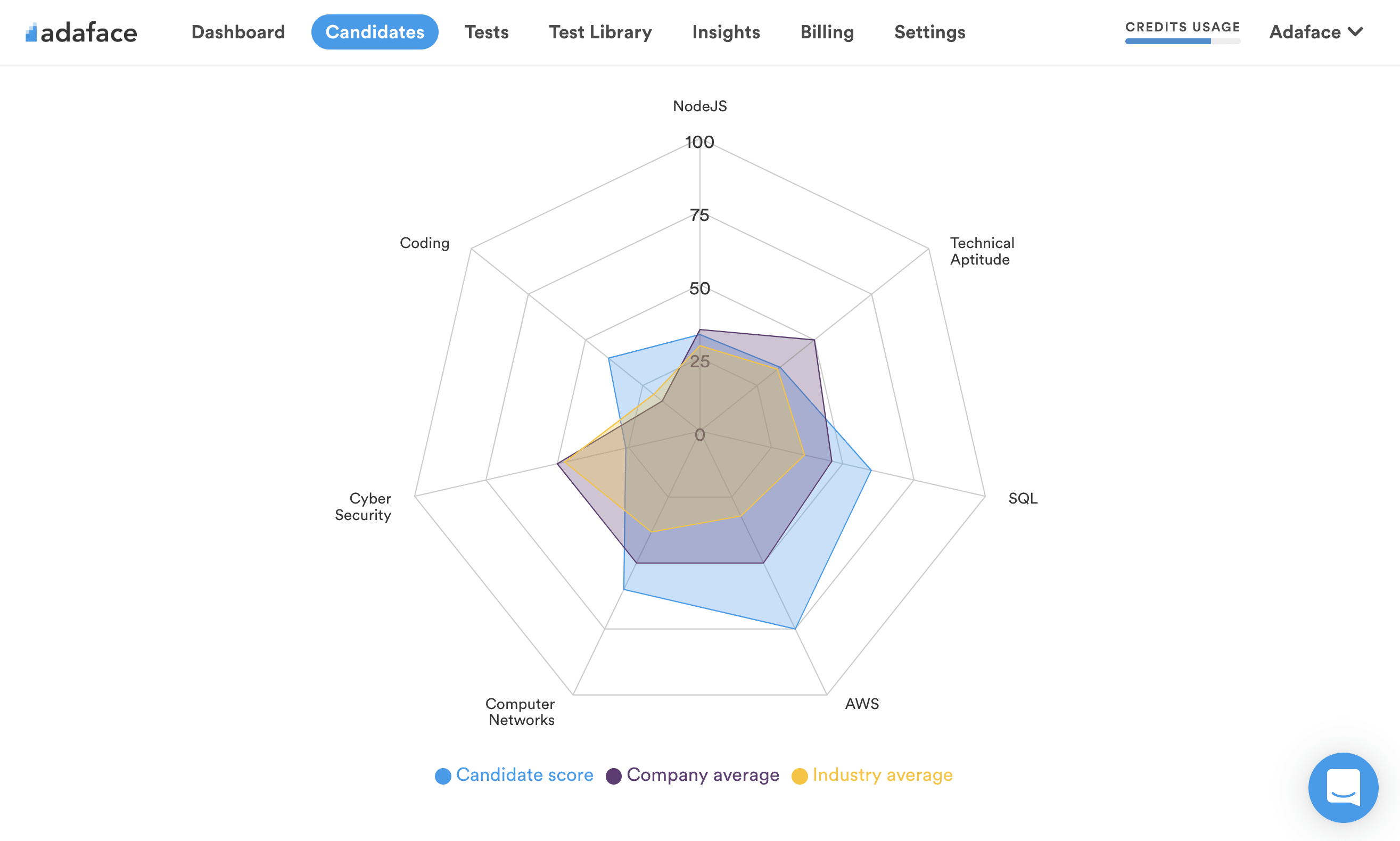

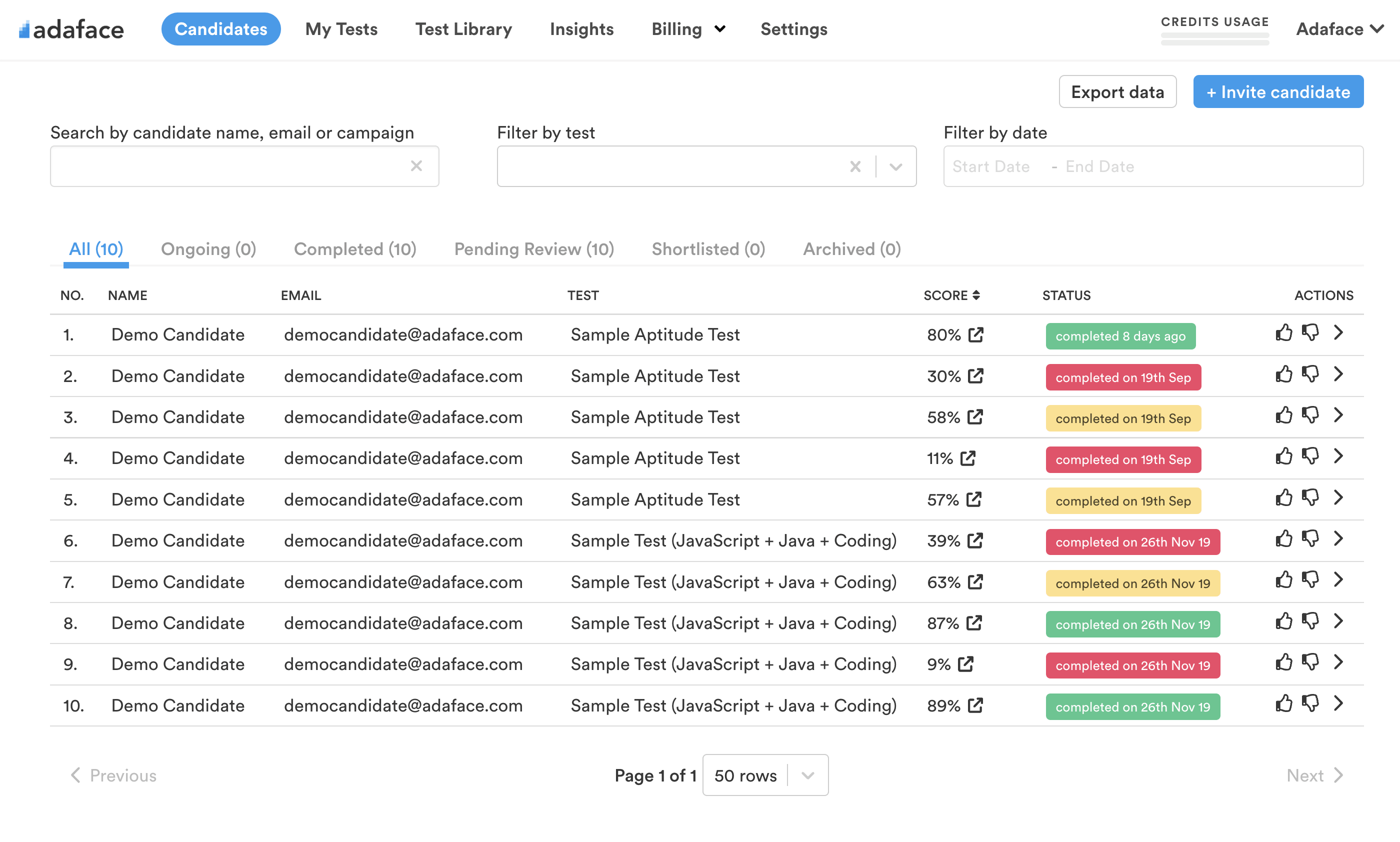

Detailed breakdown of each candidate's performance & industry benchmarks.

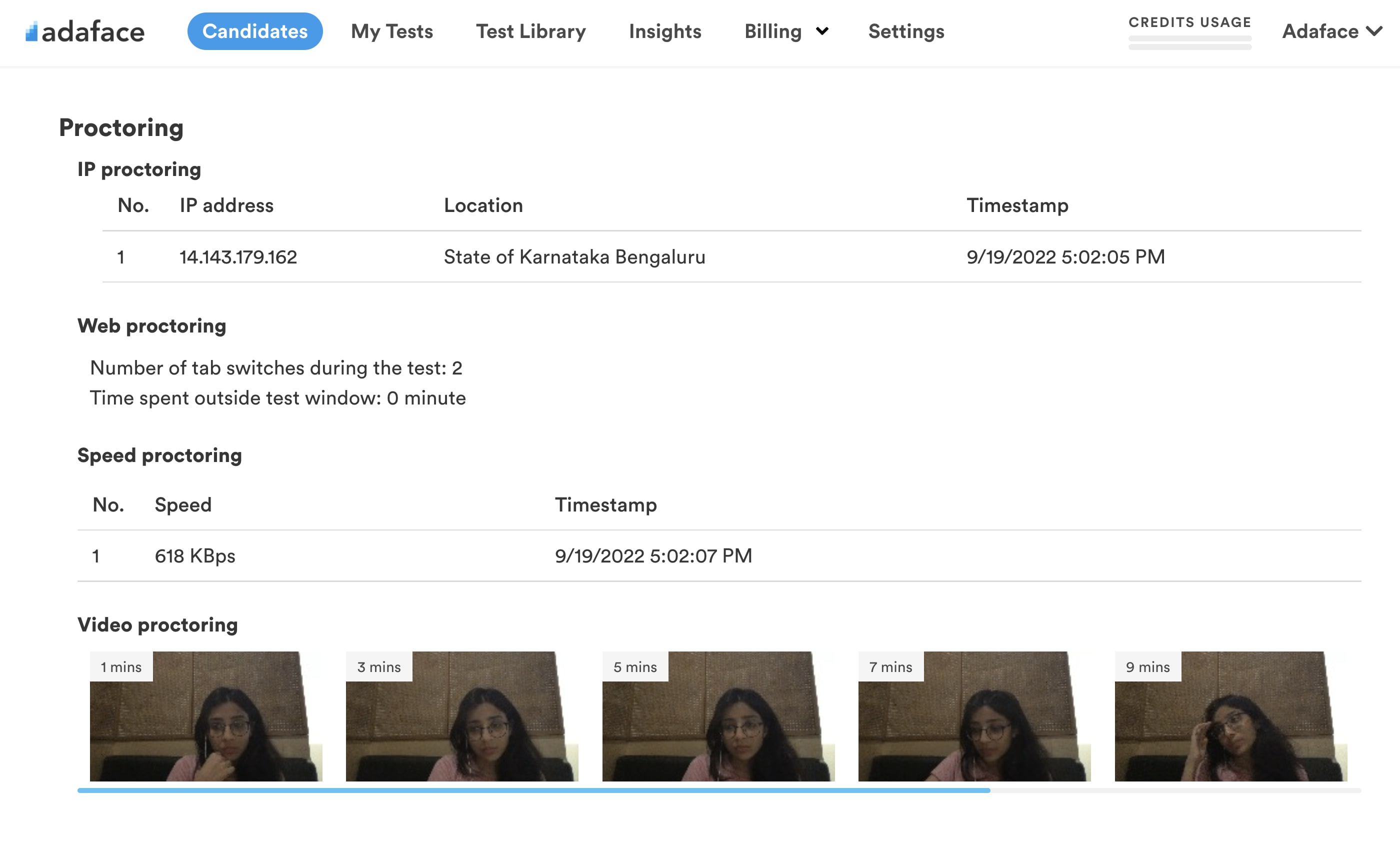

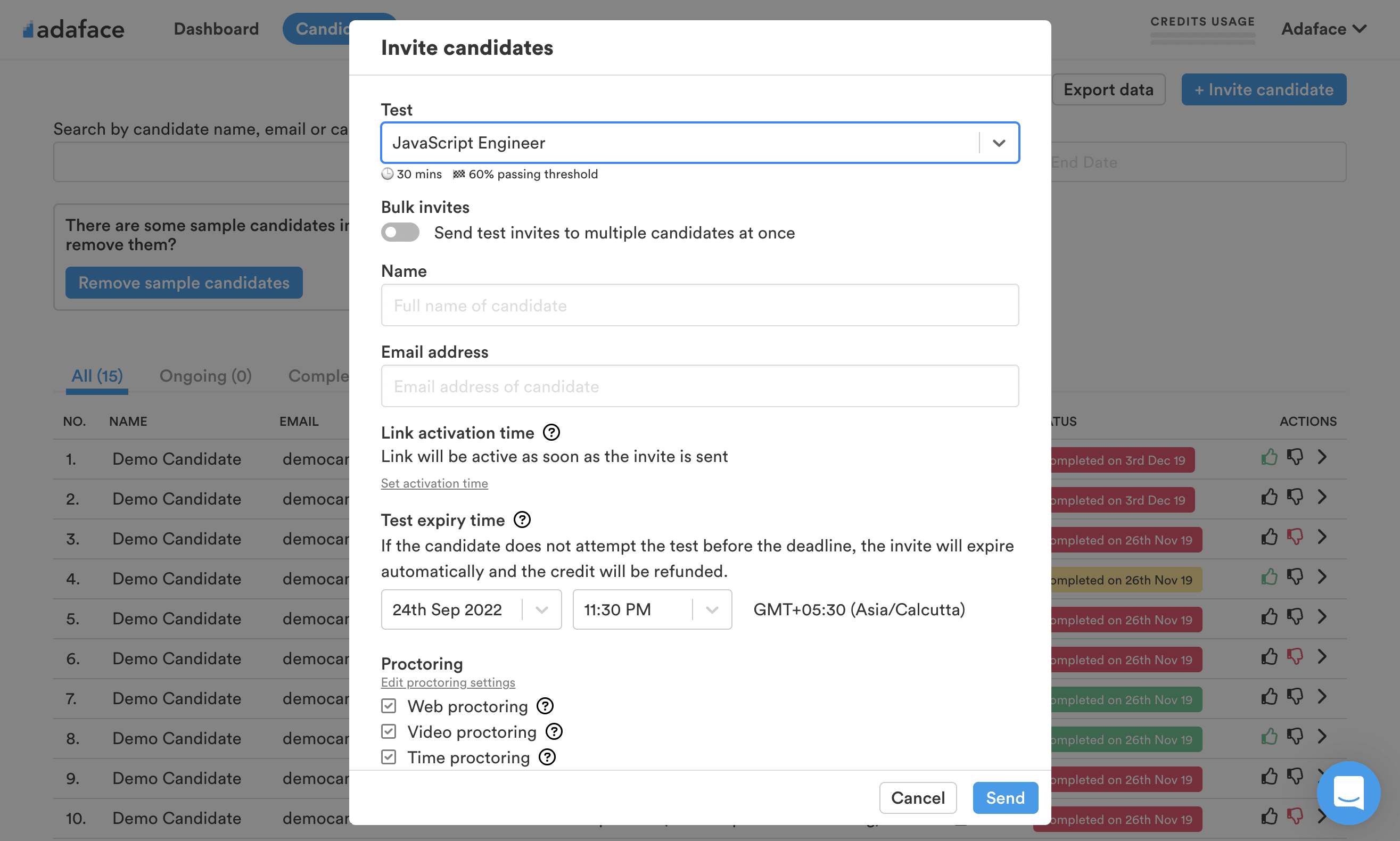

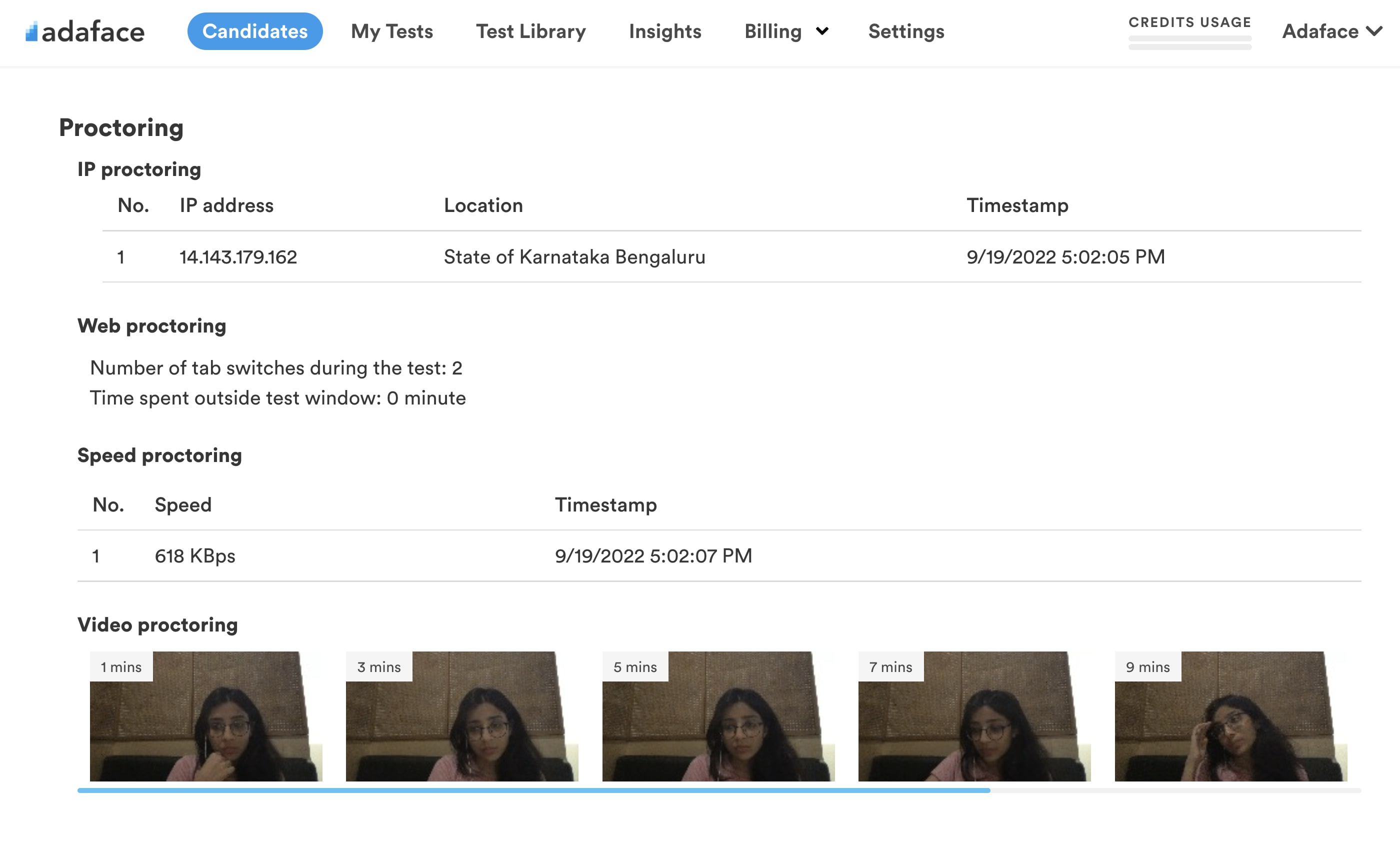

Proctoring features enable you to confidently administer online assessments.

With Adaface, we were able to optimise our initial screening process by upwards of 75%, freeing up precious time for both hiring managers and our talent acquisition team alike!

Brandon Lee, Head of People, Love, Bonito

Use questions that test for core fundamentals & on-the-job skills for accurate shortlisting. Here are a few sample Adaface questions:


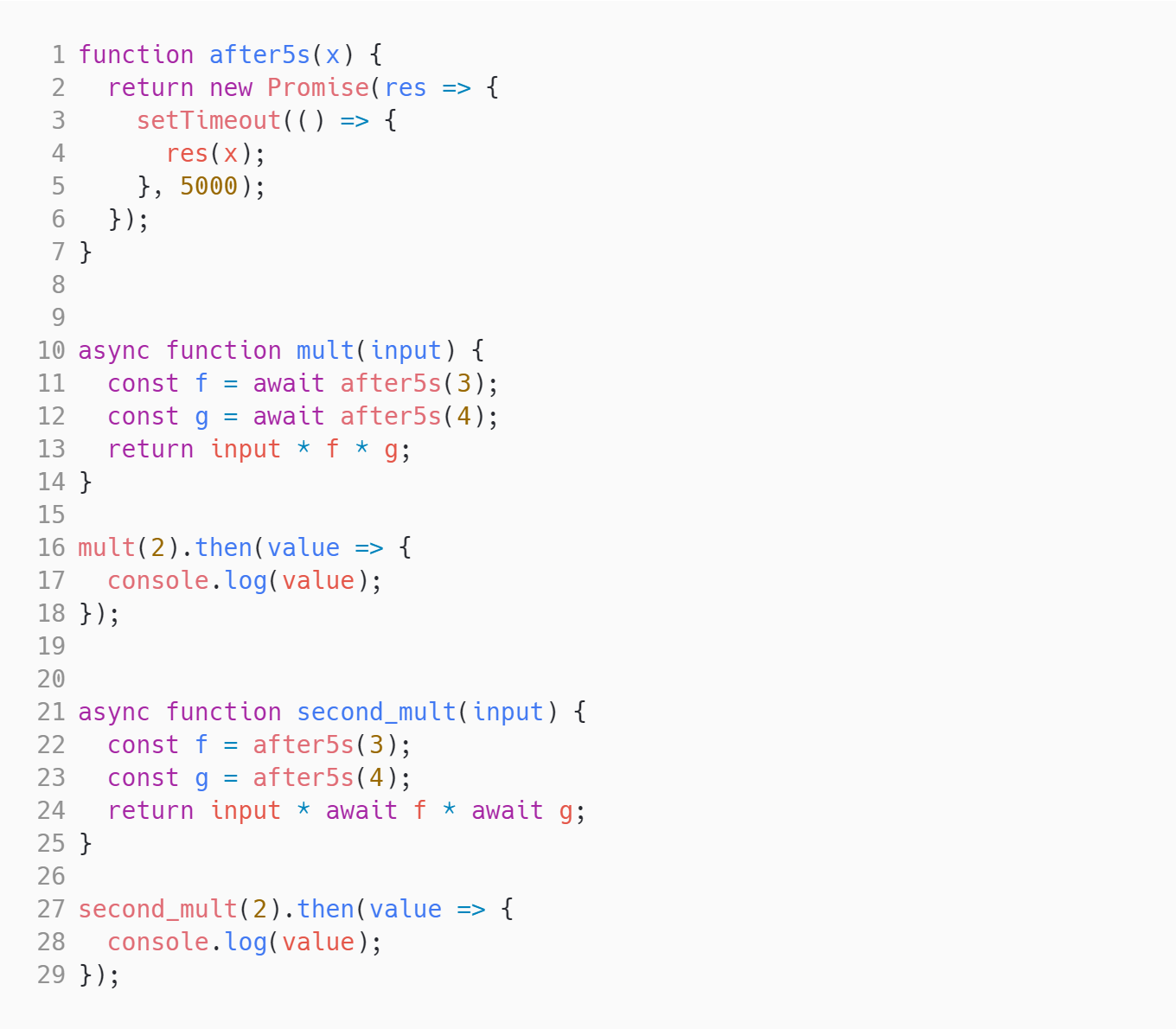

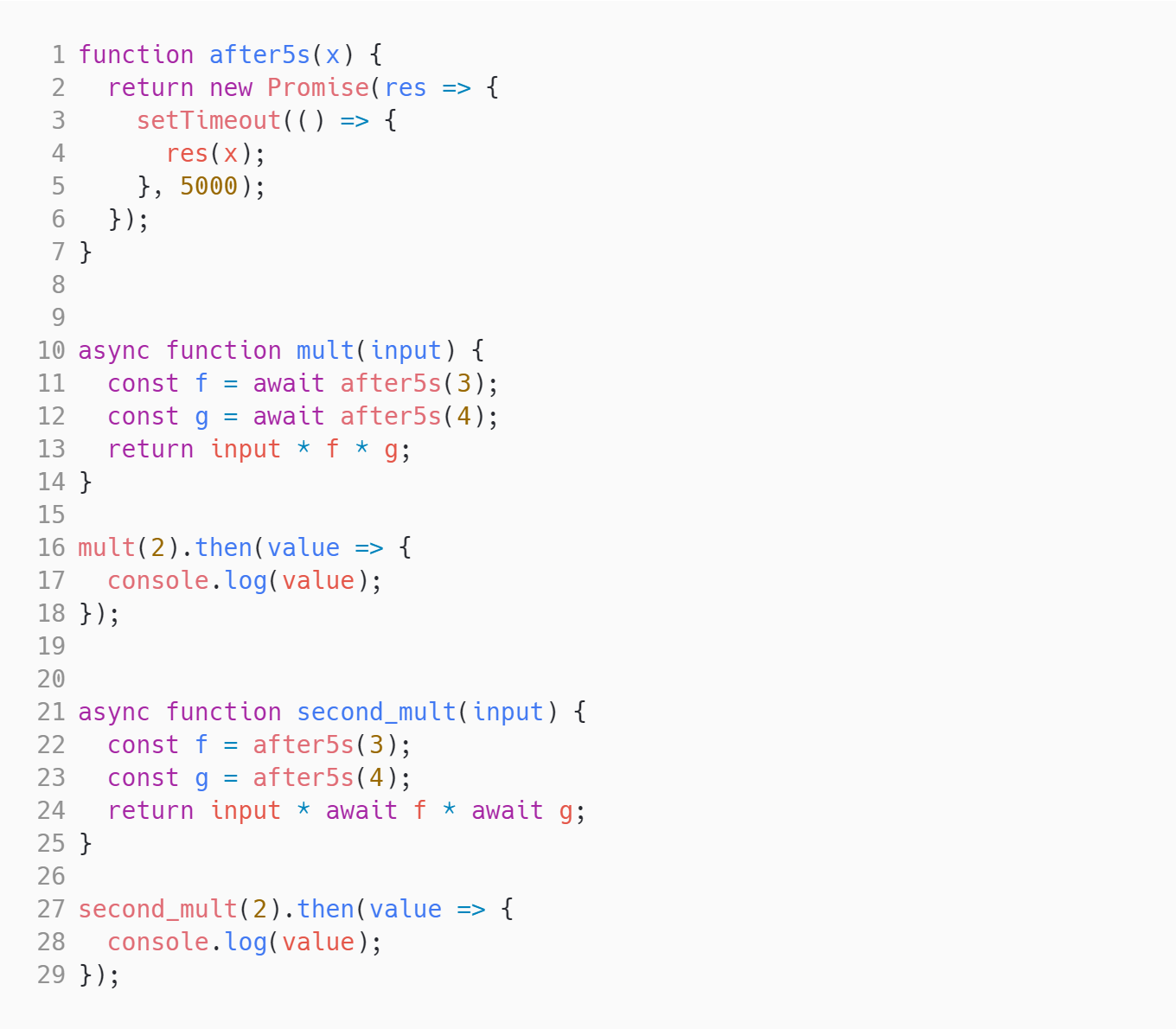

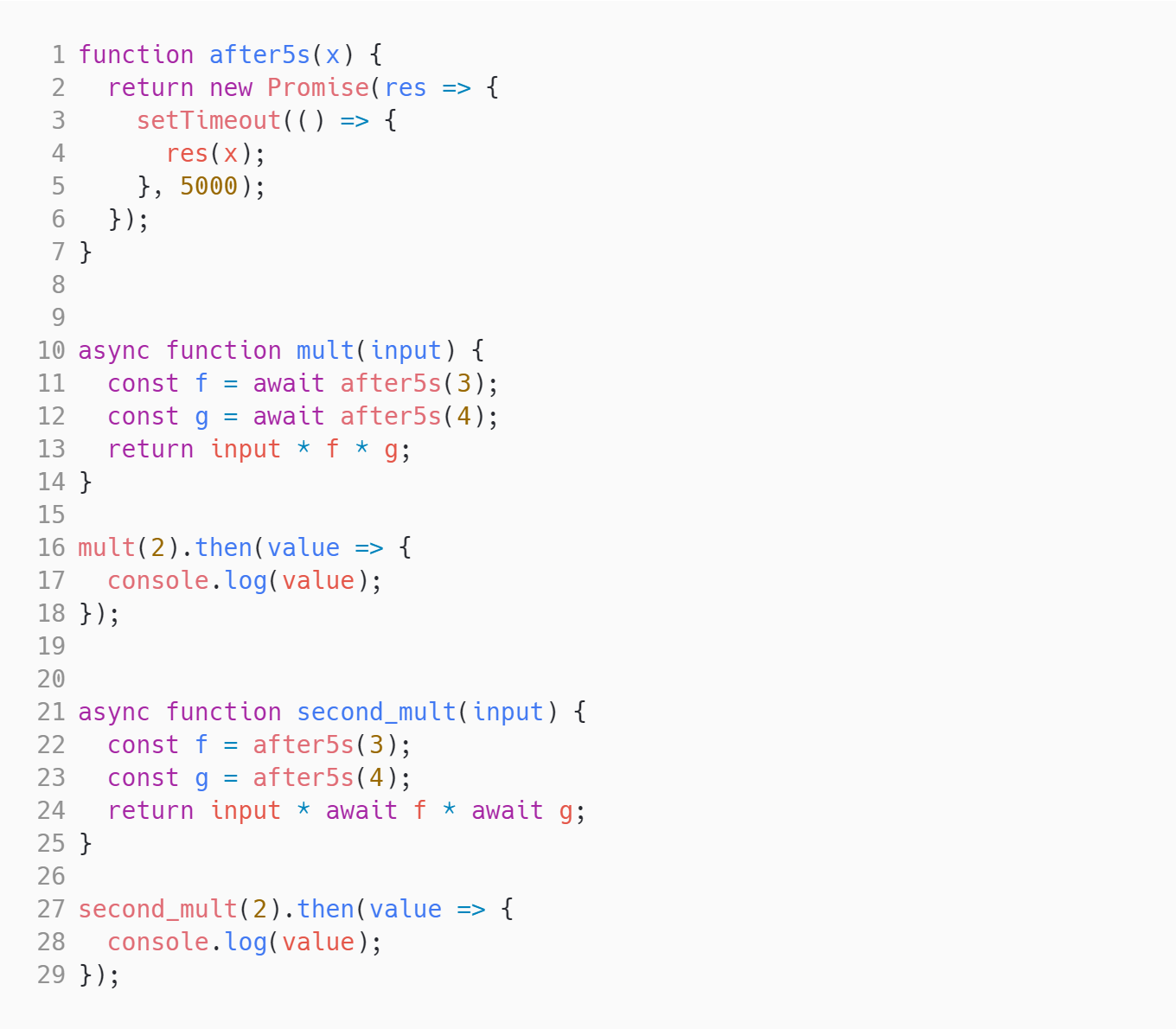


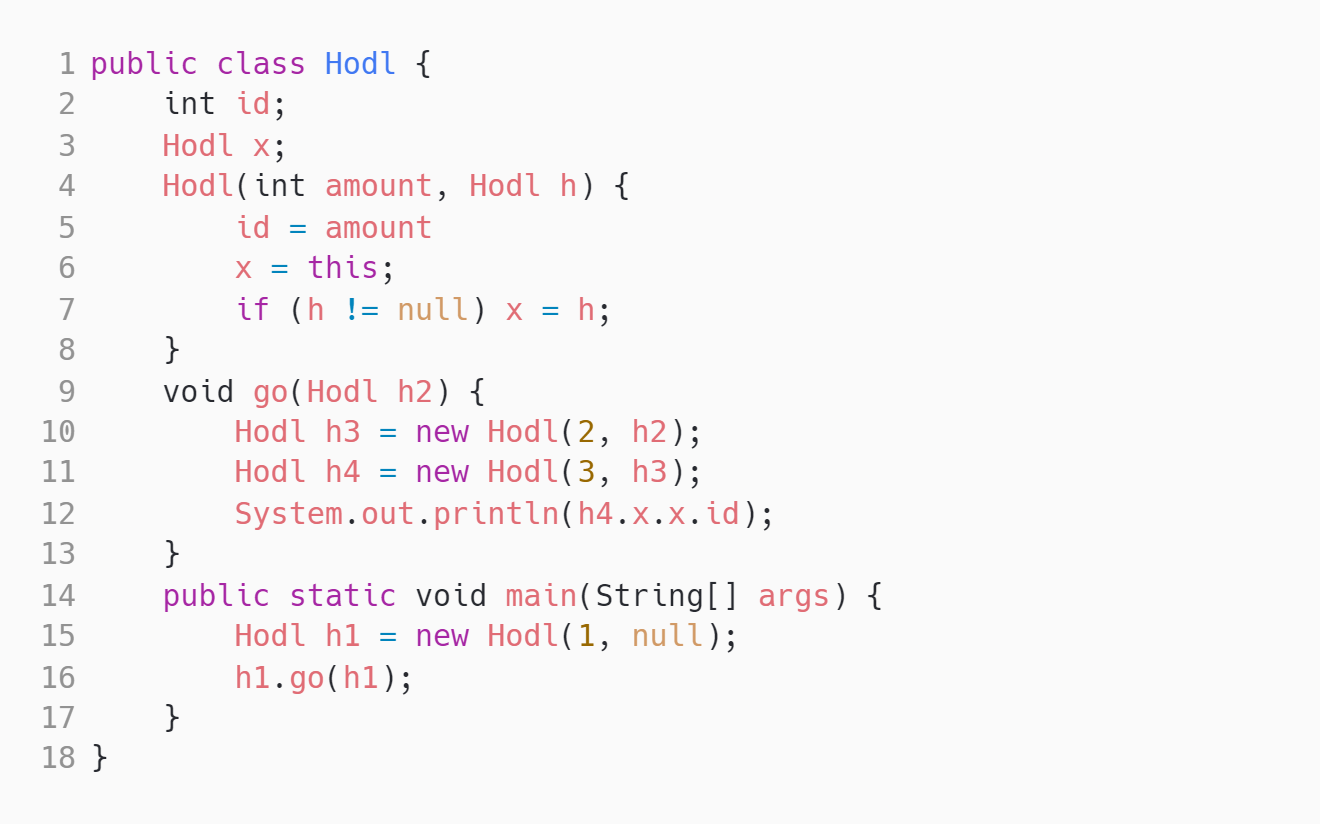

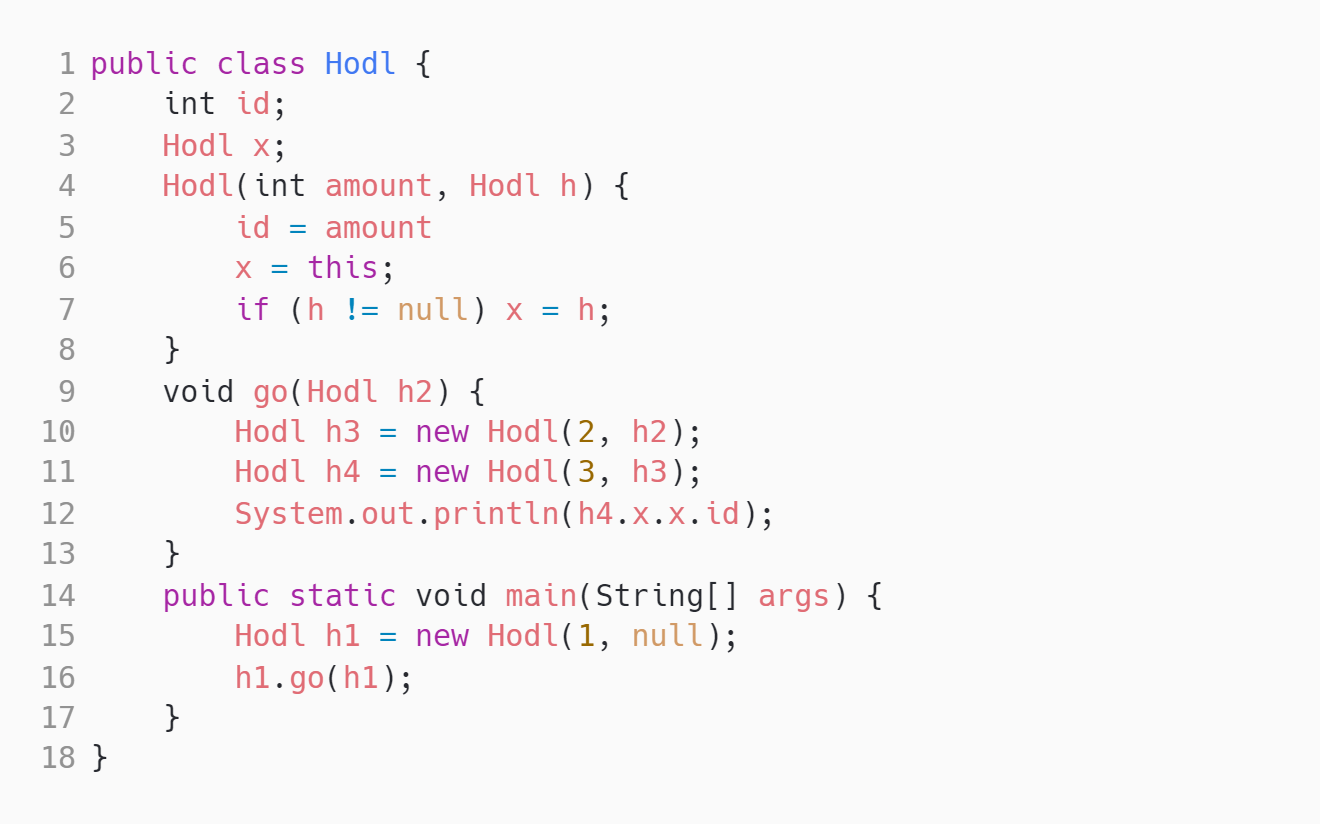

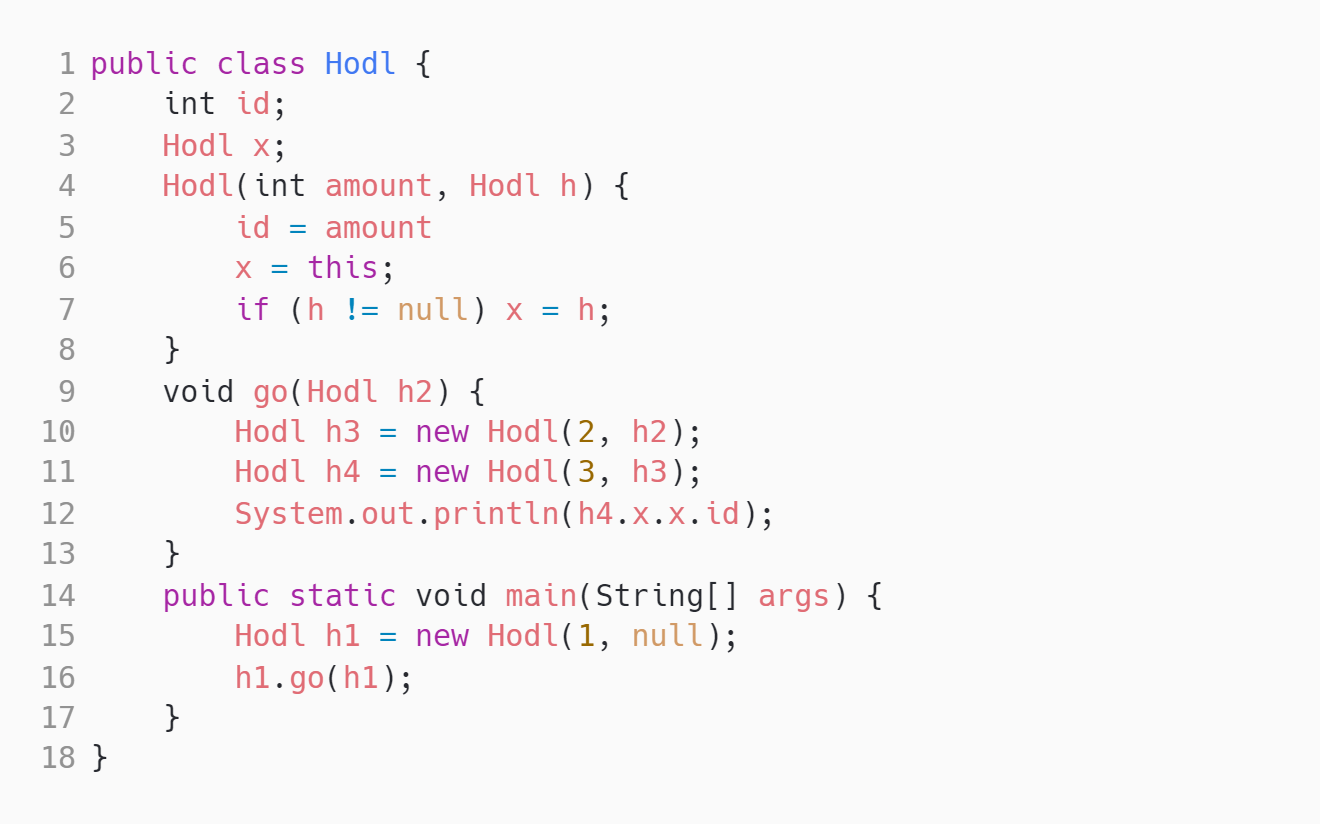


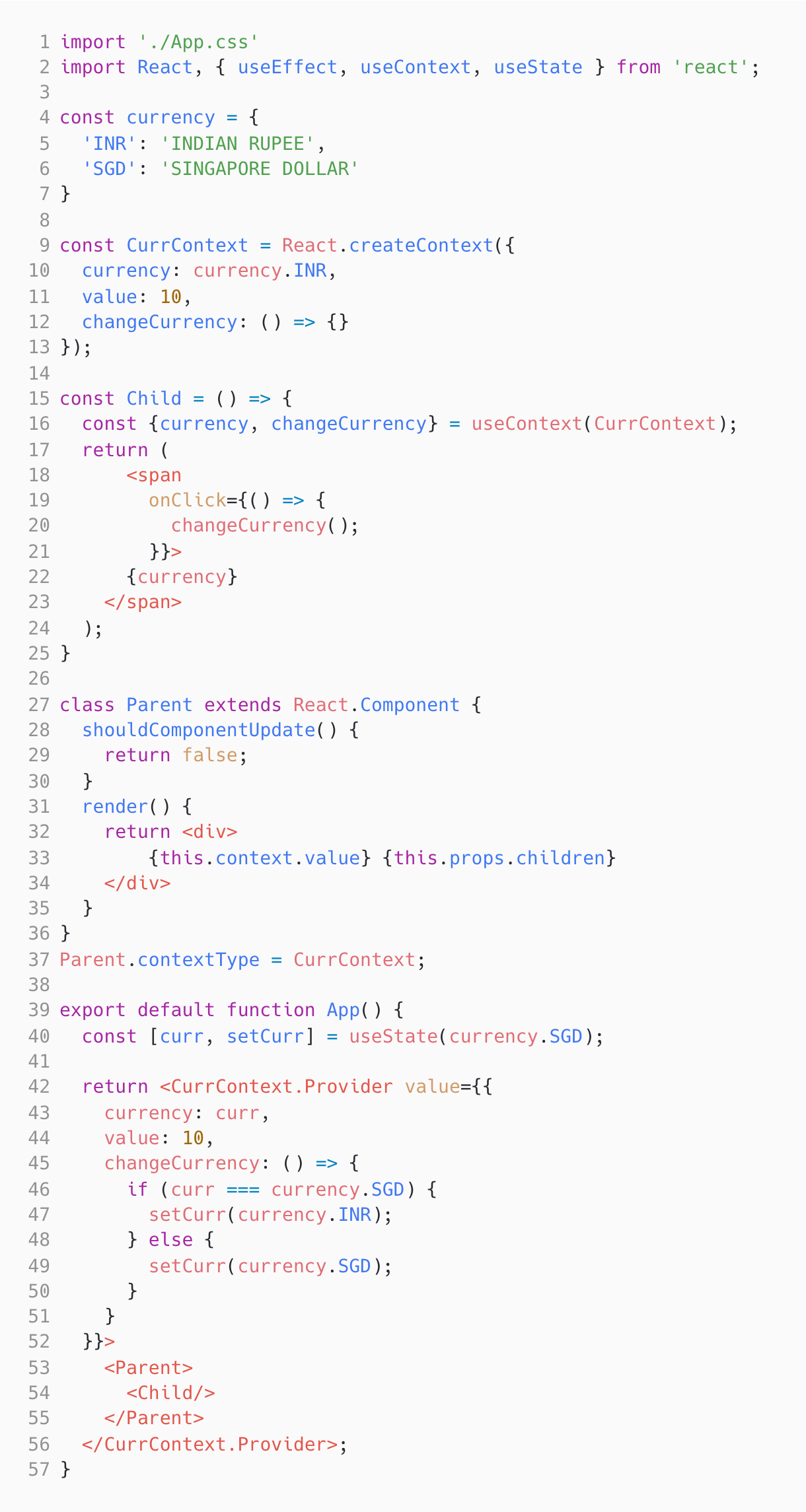

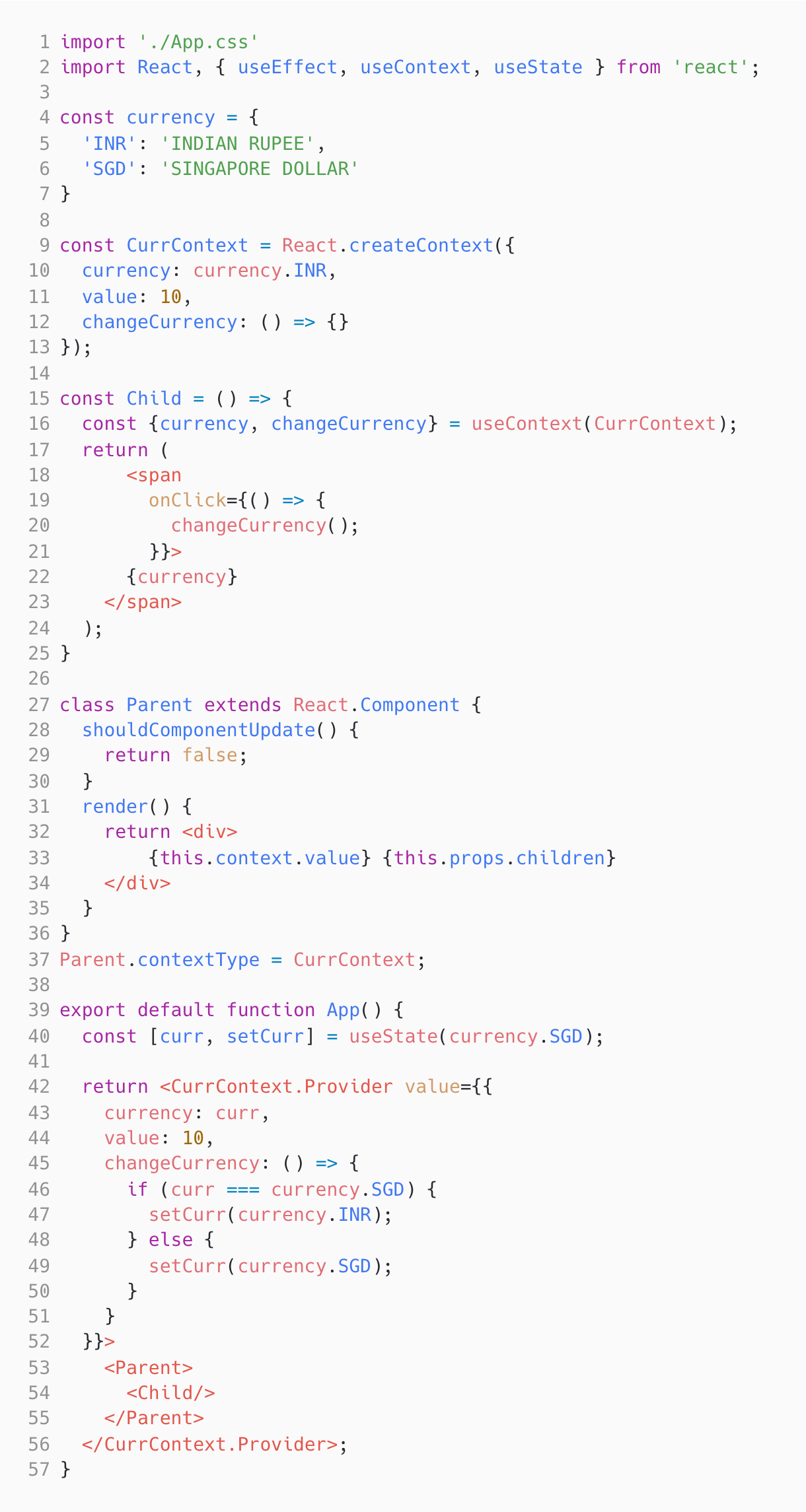

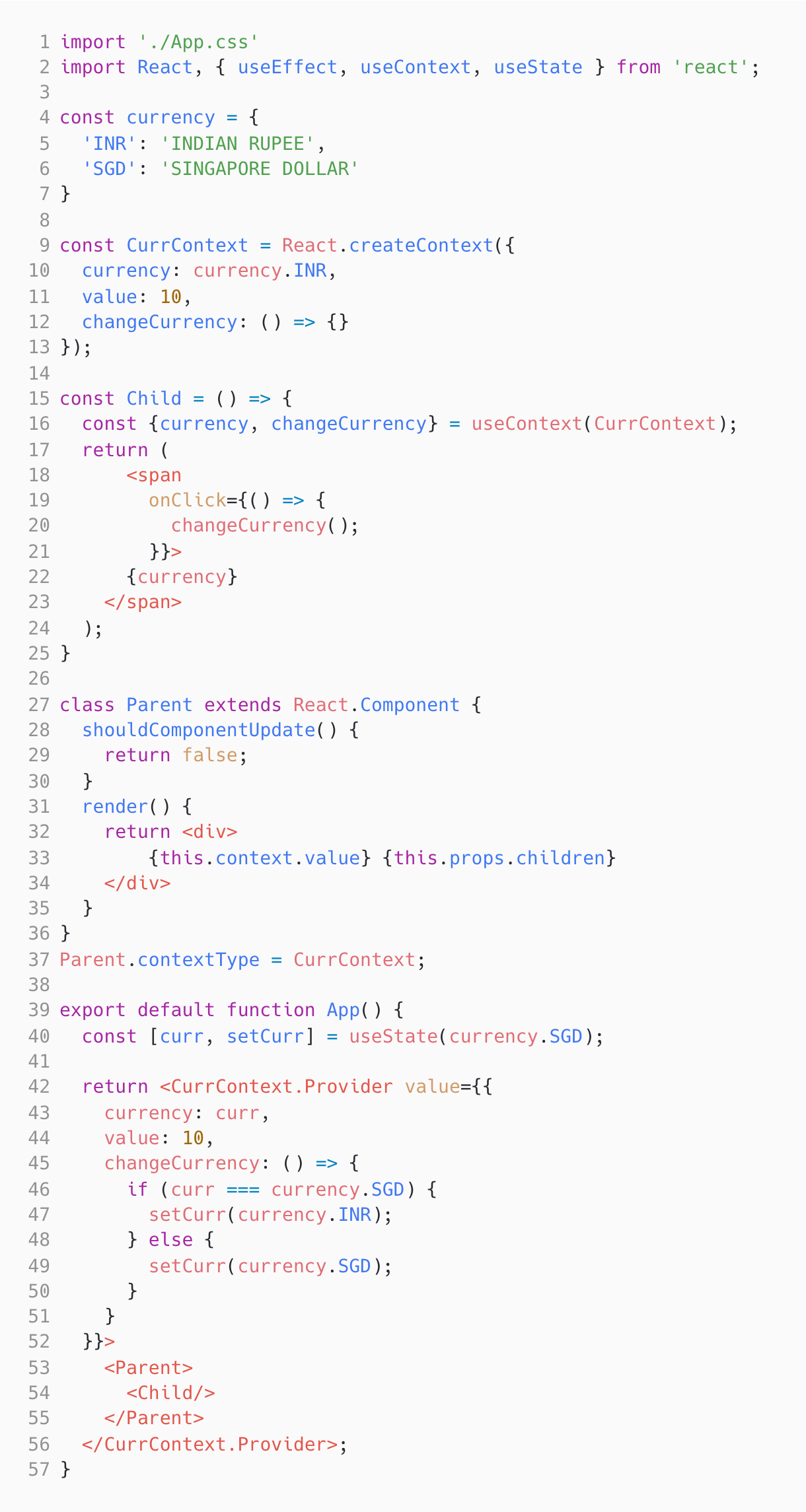



| 🧐 Question | 🔧 Skill | 💪 Difficulty | ⌛ Time |
|---|---|---|---|
| CID Agent Logical Deduction Pattern Recognition Problem Solving | Logical Reasoning | Hard | 3 mins |

A code ("EIG AUC REO RAI COG") was sent to the criminal office by a CID agent named Batra. However, four of the five words are fake, with only one containing the information. They also included a clue in the form of a sentence: "If I tell you any character of the code word, you will be able to tell how many vowels there are in the code word." Are you able to figure out what the code word is?

A: RAI

B: EIG

C: AUC

D: REO

E: COG

F: None

| |||||
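One way to reason about the clue is to check it mechanically. The sketch below is an illustration only, not Adaface's reference solution. It assumes that a listener who is told one letter considers every one of the five words containing that letter, and that the clue holds only if all of those words share the same vowel count. The names `vowel_count`, `is_consistent`, and `find_code_words` are hypothetical.

```python
WORDS = ["EIG", "AUC", "REO", "RAI", "COG"]
VOWELS = set("AEIOU")


def vowel_count(word):
    """Number of vowels in a word."""
    return sum(ch in VOWELS for ch in word)


def is_consistent(candidate, words):
    """True if every letter of `candidate` pins down a single vowel count.

    Assumption: hearing a letter, the listener looks at all words that
    contain it; the clue holds only if they agree on the vowel count.
    """
    for ch in candidate:
        counts = {vowel_count(w) for w in words if ch in w}
        if len(counts) != 1:
            return False
    return True


def find_code_words(words):
    """All words that satisfy the clue under the assumption above."""
    return [w for w in words if is_consistent(w, words)]


print(find_code_words(WORDS))
```

Under this reading, a word is ruled out as soon as one of its letters also appears in a word with a different number of vowels.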

| Code language Code Decipherment Symbolic Representation | Attention to Detail | Medium | 2 mins |

In a new code language called Adira,

- '4A, 2B, 9C' means 'truth is eternal'

- '9C, 2B, 8G, 3F' means 'hatred is not eternal'

- '4A, 5T, 3F, 1X' means 'truth does not change'

What is the code for 'hatred' in Adira?

| |||||
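Questions of this kind can be approached by elimination with set operations. The following is a minimal sketch, assuming each word maps to exactly one code and that a code appears in a coded sentence only when its word appears in the plain sentence. The helper name `candidates_for` is hypothetical.

```python
# Each entry pairs the codes of a sentence with the words of that sentence.
SENTENCES = [
    ({"4A", "2B", "9C"}, {"truth", "is", "eternal"}),
    ({"9C", "2B", "8G", "3F"}, {"hatred", "is", "not", "eternal"}),
    ({"4A", "5T", "3F", "1X"}, {"truth", "does", "not", "change"}),
]


def candidates_for(word, sentences):
    """Codes that could stand for `word`.

    Keep codes present in every sentence containing the word, then drop
    codes that also occur in a sentence that does not contain the word.
    """
    possible = None
    for codes, words in sentences:
        if word in words:
            possible = set(codes) if possible is None else possible & codes
    if possible is None:
        return set()
    for codes, words in sentences:
        if word not in words:
            possible -= codes
    return possible


print(candidates_for("hatred", SENTENCES))
```

A word such as 'is' remains ambiguous under this method because it always appears together with 'eternal', so the function returns two candidate codes for it.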

| Magic bag Arithmetic Sequences Pattern Recognition Problem Solving | Numerical Reasoning | Hard | 3 mins |

Alex’s uncle is a magician who gave them a magic bag that doubles any coins put into it. Initially, Alex had a few coins. Alex put all the coins in the bag, and the coins doubled. Alex took out all the coins, gave a few to a friend, and put the remaining coins back in the bag. The coins doubled again; Alex took out all the coins again and gave a few to a second friend. Alex then put the remaining coins in the bag, and the coins doubled again. Alex took out all the coins and gave a few to a third friend. Alex had no coins left after giving coins to the third friend, and each friend received an equal number of coins. What is the minimum number of coins Alex had initially, and how many coins did Alex give to each friend?

A: Started with 3 coins

B: Started with 5 coins

C: Started with 6 coins

D: Started with 7 coins

E: Started with 9 coins

F: Gave 3 coins in every turn

G: Gave 4 coins in every turn

H: Gave 5 coins in every turn

I: Gave 7 coins in every turn

J: Gave 8 coins in every turn

K: Gave 9 coins in every turn

| |||||
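The puzzle can be checked with a small brute-force search. If Alex starts with x coins and gives y coins each time, three rounds of "double, then give" leave 2(2(2x - y) - y) - y = 8x - 7y coins, which must equal zero. The sketch below simulates the rounds directly instead of relying on that algebra. The function names and the search limit of 100 are assumptions made for illustration.

```python
def coins_left(start, gift):
    """Coins left after three rounds of doubling and giving `gift` away.

    Returns None if Alex cannot afford a gift in some round.
    """
    coins = start
    for _ in range(3):
        coins = 2 * coins - gift
        if coins < 0:
            return None
    return coins


def smallest_solution(limit=100):
    """Smallest starting amount (with its gift size) that ends at zero coins."""
    for start in range(1, limit + 1):
        # A gift can never exceed the doubled starting amount.
        for gift in range(1, 2 * start + 1):
            if coins_left(start, gift) == 0:
                return start, gift
    return None


print(smallest_solution())
```

Because the outer loop walks the starting amounts in increasing order, the first hit is the minimum.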

| Claims for a new drug Xylanex Critical Thinking Evaluating Arguments Identifying Assumptions | Critical Thinking | Medium | 2 mins |

A pharmaceutical company claims that their new drug, Xylanex, is highly effective in treating a specific medical condition. They provide statistical data from a clinical trial to support their claim. However, a group of scientists has raised concerns about the validity of the study design and potential bias in the data collection process. They argue that the results may be inflated and not truly representative of the drug's effectiveness.

Which of the following assumptions is necessary to support the pharmaceutical company's claim?

A: The clinical trial participants were randomly selected and representative of the target population.

B: The scientists raising concerns have a conflict of interest and are biased against the pharmaceutical company.

C: The statistical analysis of the clinical trial data was conducted by independent experts.

D: The medical condition being treated by Xylanex is widespread and affects a large number of individuals.

E: The pharmaceutical company has a proven track record of developing effective drugs for similar medical conditions.

| |||||

| China manufacturing Economic reasoning Cost analysis Inference Analysis | Verbal Reasoning | Medium | 2 mins |

The cost of manufacturing phones in China is twenty percent less than the cost of manufacturing phones in Vietnam. Even after adding shipping fees and import taxes, it is cheaper to import phones from China to Vietnam than to manufacture phones in Vietnam. Which of the following statements is best supported by the given information?

A: The shipping fee from China to Vietnam is more than 20% of the cost of manufacturing a phone in China.

B: The import taxes on a phone imported from China to Vietnam are less than 20% of the cost of manufacturing the phone in China.

C: Importing phones in Vietnam will cut 20% of the manufacturing jobs in Vietnam.

D: It takes 20% more time to manufacture a phone in Vietnam than it does in China.

E: Labour costs in Vietnam are 20% higher than in China.

| |||||

| Async Await Promises Promises Async-Await Asynchronous Programming | JavaScript | Medium | 2 mins |

What will the following code output?

A: 24 after 5 seconds and after another 5 seconds, another 24

B: 24 followed by another 24 immediately

C: 24 immediately and another 24 after 5 seconds

D: After 5 seconds, 24 and 24

E: Undefined

F: NaN

G: None of these

| |||||

| Holding References | Java | Hard | 2 mins |

What does the following Java code output?

| |||||

| Context re-renders React Context API Conditional Rendering Component Lifecycle State | React | Hard | 3 mins |

Review the following React code:

Pick the correct statements:

A: The code renders 10 INDIAN RUPEE

B: The code renders 10 SINGAPORE DOLLAR

C: The code does not render anything and throws an error since JavaScript objects are not valid as React children

D: When the currency portion is clicked, the parent component is re-rendered

E: When the currency portion is clicked, parent component will skip the re-render because shouldComponentUpdate returns false

F: Parent component can be converted to a functional component with memoization (useMemo or memo) to avoid the re-render

| |||||

| EC2DataProcessing EC2 S3 Security Groups Network ACLs | AWS | Medium | 2 mins |

You work as a Solutions Architect for a data analysis firm. The company stores large datasets on Amazon S3, and you've been tasked with setting up an Amazon EC2 instance to process this data. The EC2 instance will fetch data from S3, process it, and then write the results back to a different S3 bucket.

To ensure security, the EC2 instance should not have direct internet access, and it should only be able to communicate with the S3 buckets. You've decided to place the EC2 instance in a VPC private subnet with a CIDR block of 10.0.1.0/24. The associated security group is set to deny all inbound traffic and allow all outbound traffic.

Which additional configuration should you make to enable the EC2 instance to access the S3 buckets while complying with the security requirements?

A: Modify the Network ACL of the subnet to allow outbound connections to the IP range of the S3 service.

B: Assign a Public IP to the EC2 instance and update the security group to allow inbound and outbound traffic to S3.

C: Add a NAT Gateway in the private subnet and update the route tables to direct S3 traffic to the NAT Gateway.

D: Create an S3 VPC Gateway Endpoint and update the route tables for the private subnet to direct S3 traffic to the VPC Endpoint.

E: Create a VPN connection from the VPC to the S3 buckets.

| |||||

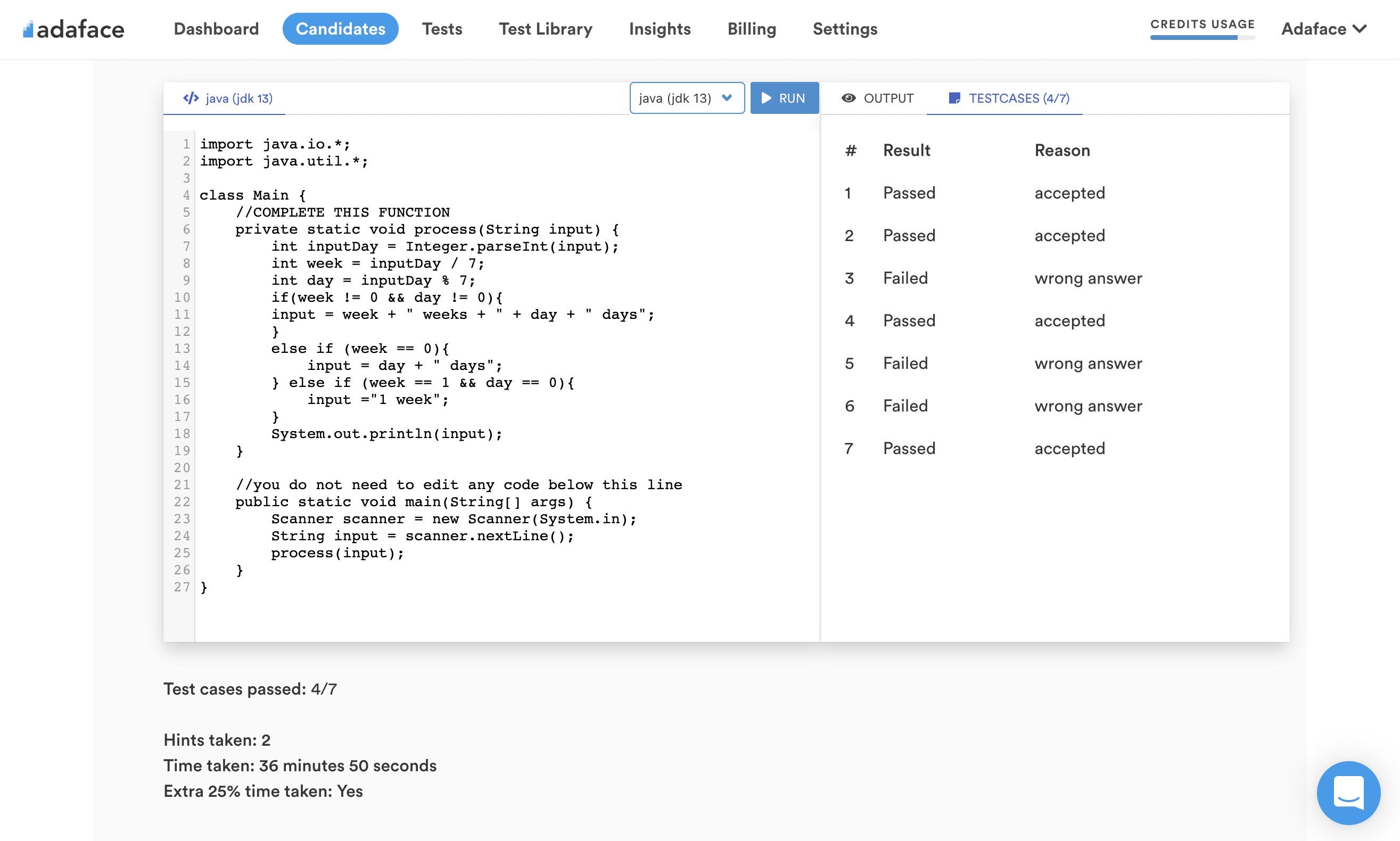

| Visitors Count Strings Logic String Parsing Character Counting | Coding | Medium | 30 mins |

A manager hires a staff member to keep a record of the number of men, women, and children visiting the museum daily. The staff member notes W for each woman who visits, M for each man, and C for each child. You need to write code that takes the string representing the visits and prints the count of men, women, and children, ordered by decreasing count.

Example:

Input:

WWMMWWCCC

Expected Output:

4W3C2M

Explanation:

‘W’ has the highest count, then ‘C’, then ‘M’.

⚠️⚠️⚠️ Note:

- The input is already parsed and passed to a function.

- You need to "print" the final result (not return it) to pass the test cases.

- If the input is “MMW”, then the expected output is "2M1W" since there is no ‘C’.

- If any of them have the same count, the output should follow this order - M, W, C.

| |||||
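One possible approach to this task is sketched below. It is not the official solution: the function names `format_visits` and `print_visits` are hypothetical, and the code assumes the input contains only the letters M, W, and C (any other character is ignored). Ties are resolved by starting from the M, W, C order and relying on Python's stable sort.

```python
from collections import Counter

# Order used when two categories have the same count.
TIE_ORDER = "MWC"


def format_visits(visits):
    """Build the summary string, e.g. 'WWMMWWCCC' -> '4W3C2M'."""
    counts = Counter(visits)
    # Skip categories that never appear in the input.
    present = [c for c in TIE_ORDER if counts[c] > 0]
    # sorted() is stable, so equal counts keep the M, W, C order.
    ordered = sorted(present, key=lambda c: -counts[c])
    return "".join(f"{counts[c]}{c}" for c in ordered)


def print_visits(visits):
    """Print the result, since the task asks for printing, not returning."""
    print(format_visits(visits))


print_visits("WWMMWWCCC")
print_visits("MMW")
```

Keeping the formatting in a separate function that returns a string makes the logic easy to check, while `print_visits` satisfies the requirement to print the final result.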

| Data Filtering Report Development M Language Data Visualization | Power BI | Medium | 2 mins |

You are creating a Power BI report where users need to dynamically filter data based on a range of dates. The report has a Sales table with columns Date, ProductID, and Amount. You decide to use a Power Query parameter to allow users to select a start and end date, which will then filter the Sales table accordingly. The parameter is named DateRange and has a type of List. You need to write a Power Query M formula to filter the Sales table based on the selected date range. The DateRange parameter contains two dates: the first item is the start date, and the second item is the end date. How should the M formula be structured to achieve this functionality?

A: Table.SelectRows(Sales, each [Date] >= DateRange{0} and [Date] <= DateRange{1})

B: Sales{[Date] >= DateRange{0}, [Date] <= DateRange{1}}

C: Table.FilterRows(Sales, each [Date] >= List.First(DateRange) and [Date] <= List.Last(DateRange))

D: Table.SelectRows(Sales, each [Date] >= List.First(DateRange) and [Date] <= List.Last(DateRange))

E: Filter.Table(Sales, [Date] >= DateRange{0}, [Date] <= DateRange{1})

| |||||