How many golf balls can you fit in an airplane? Why are manhole covers round?

These are some of the brain teasers frequently asked in interviews. As a candidate, you might wonder what the number of golf balls in an airplane has to do with the role. According to the Journal of Applied Social Psychology, very little.

“They don’t predict anything,” Laszlo Bock, Google’s Senior Vice President of People Operations, told The New York Times. “They serve primarily to make the interviewer feel smart.” Google banned its infamous, mind-boggling brain teaser interview questions about 8 years ago. Microsoft discontinued its “lateral thinking puzzle” interview questions over a decade ago as well. So you might wonder why so many companies still follow a controversial process started by Microsoft in the 90s. Limited resources, tight timeframes and the inability to replicate the actual work environment lead managers, HR representatives, IT leads and CEOs to take the popular yet debatable approach of puzzle- or riddle-based interviews.

Why do companies use puzzle questions for interviews?

Brainteaser interview questions have been around longer than most people realise. These interviews are used as a metric to understand how a person would work in a stressful environment. Two major sectors where they are extremely popular are software development and finance.

In the software development industry, puzzle interviews are sometimes not the go-to option for businesses but rather the most feasible one, since many leads and recruiters have only a surface-level understanding of what programmers actually do. These interviews lie more on the behavioral end of the interviewing spectrum than on the skill end.

In the finance industry, by contrast, these interviews are a form of stress test. Many finance jobs require a person to be very quick on their feet and to use their quantitative and analytical skills at very short notice. These types of interviews can also be an efficient test of basic analytical and quantitative skills. With a large application pool, these tests can be a good filtering tool.

Types of riddle and puzzle questions asked in interviews

There are typically two types of puzzles asked in interviews: one that requires an abstract “eureka” moment and another that relies on logical deduction. Including the latter to kick off the interview can be seen as a small step in a multi-layered process. But the first type is, at best, comparable to compiler micro-benchmarks: it can tell you a lot about a candidate’s puzzle-solving skills and very little about their programming skills.

Why is it not a good idea to use puzzle questions for interviews?

1. Little to no evidence supporting correlation between personality traits and future performance.

Puzzle-based interview questions aim to predict a candidate’s reaction to a certain environment or situation. Little to no evidence exists supporting a correlation between personality traits and a person’s future performance. The correlations are limited and the predictions extremely broad. For example, a probability puzzle may suggest whether a person will be good at financial modelling, but it cannot tell us whether they would be able to deploy a strategy with the help of Python or how smoothly they would perform in a team setting.

2. The “thin-slice” judgement

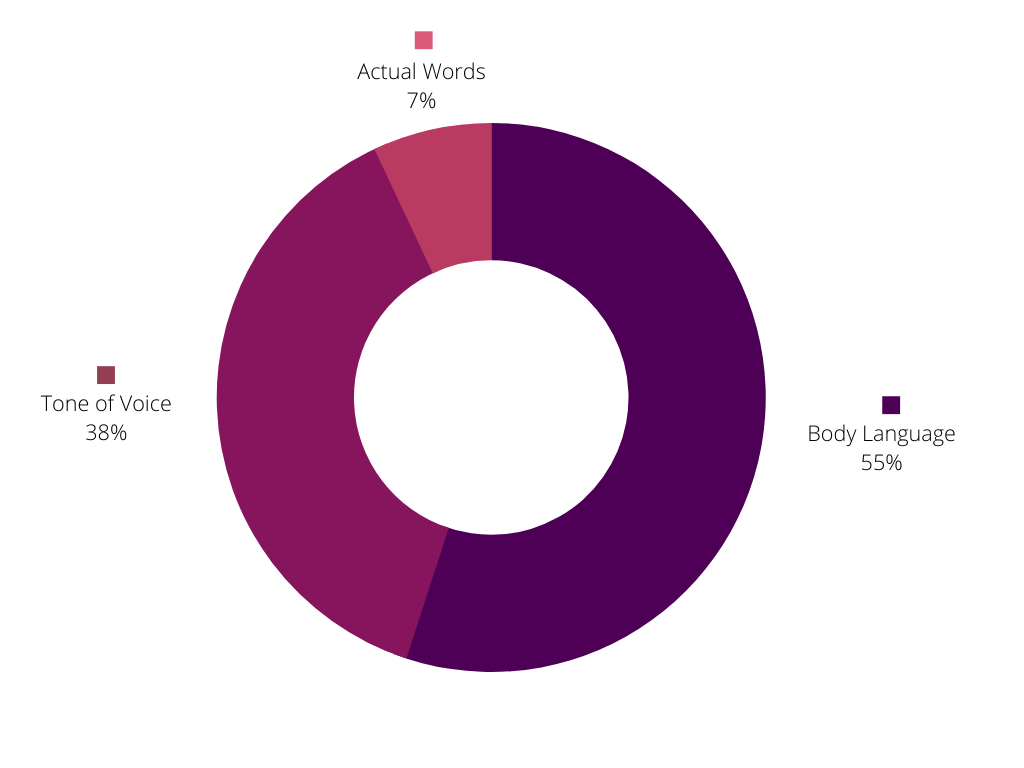

Interviews often revolve around the candidate’s background, personality, education and relevant work experience. This can lead the interviewer to identify with the candidate on some aspects and develop a bias, even more so when they see a candidate approach a certain puzzle the way they would have. The thin-slice judgement is exactly this phenomenon: when we form an opinion about someone based on limited exposure and information, we can make a misinformed and biased judgement. Studies show a high correlation between a candidate’s body language and likeability and their chances of being selected after an interview.

3. Importance of body language in interviews

Source: Albert Mehrabian, Silent Messages

4. Non-standardised format of puzzles in interviews

Statistics, probability and set theory are some of the topics on which most puzzles are based. Some puzzles involve rough approximations and unclear instructions regarding constraints. These issues can make puzzle-based interviews highly erratic and inconsistent as an interview tool, so their results are neither dependable nor useful in determining the best fit for a certain role.

An article from Microsoft, "What would Feynman do?", discredits the efficiency of puzzles and brain teasers in a humorous way. A better way to predict a candidate’s future performance would be to collect data on their past performance in similar roles and situations. Consistent performance is a far better indicator of a person’s capability than a stress test that only identifies their interest in solving puzzles.

Standardising the interviewing process also helps alleviate biases and inconsistencies. Adaface aims to standardise the screening stage of interviews and remove any biases and irrelevant exchanges of information.

5. Ambiguous language of puzzles

Puzzles are often worded in convoluted language, with irrelevant information mixed in with the required data. The ability to extract information from puzzles improves with practice, which gives an advantage to people who are interested in solving puzzles over those who do not enjoy them. Most of the time, interviewers have a particular solution in mind, and candidates who take the same approach are preferred over those with the most innovative approach.

Conclusion

Finding a suitable candidate among hundreds is difficult. But to Google and Microsoft’s credit, they did away with this regressive method of interviewing almost a decade ago. Companies that adopted it by following Microsoft and Google should do away with it too. Deciding job seekers' careers based on puzzles and games is not only frivolous but also disrespectful.

Entrepreneur in Residence at Adaface
