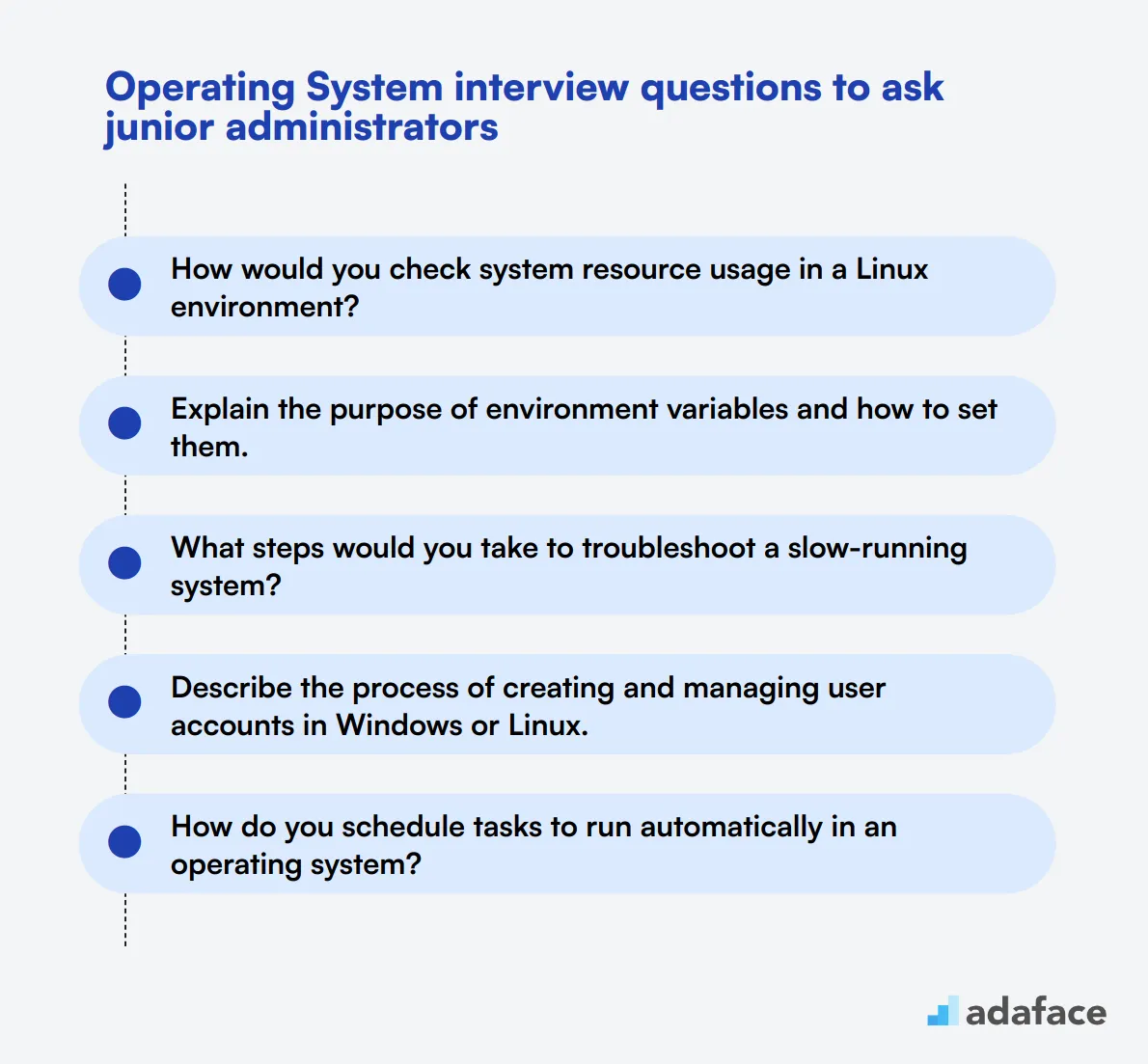

When hiring for roles that require Operating System knowledge, asking the right interview questions is essential. This ensures you identify candidates who possess the technical know-how and problem-solving skills needed for the job.

In this blog post, we've compiled various sets of Operating System interview questions, ranging from general to advanced, and covering topics such as system processes and memory management. By incorporating these questions into your interview process, you can effectively evaluate both junior and senior administrators.

Using these questions will help you assess candidates' technical skills and readiness for the role. Additionally, consider using our System Administration Test to complement the interview process and ensure you're hiring the most qualified applicants.

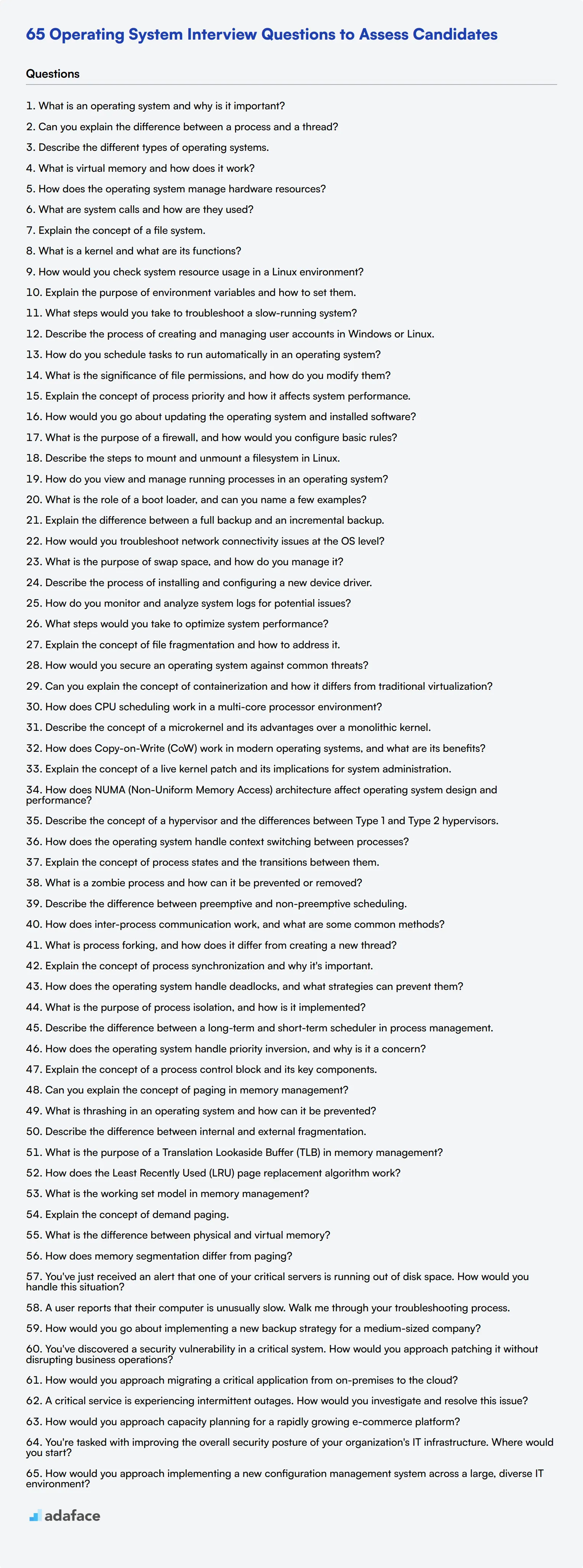

8 general Operating System interview questions and answers

To ensure your candidates have a solid understanding of operating systems, use this list of questions during your interviews. These questions will help you assess their knowledge and determine if they have the skills necessary for your team.

1. What is an operating system and why is it important?

An operating system (OS) is software that manages computer hardware and software resources, providing common services for computer programs. It acts as an intermediary between users and the computer hardware.

The OS is important because it ensures that different programs and users running on a computer do not interfere with each other. It also manages the computer's memory, processes, and all of its software and hardware. Without an operating system, every application would have to manage the hardware itself.

Ideal candidates should highlight the OS's role in resource management and user interface. Look for explanations that cover its fundamental importance in enabling software applications to function.

2. Can you explain the difference between a process and a thread?

A process is an instance of a program that is being executed. It contains the program code and its current activity. Each process has a separate memory space.

A thread, on the other hand, is the smallest unit of a process that can be scheduled for execution. A process can have multiple threads that share the same memory space but can execute independently.

Strong answers will include mentions of how threads are more lightweight compared to processes, and the benefits of multithreading in terms of resource efficiency and performance.
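
To make the shared-memory point concrete, here is a minimal Python sketch. The names `counter`, `lock`, and `add_many` are illustrative, not taken from any particular codebase. It starts four threads inside one process and lets them all update the same variable, which is only possible because threads share the process's memory.

```python
import threading

counter = 0                  # lives in the process's memory, visible to every thread
lock = threading.Lock()      # serializes updates so no increment is lost

def add_many(n):
    """Increment the shared counter n times."""
    global counter
    for _ in range(n):
        with lock:
            counter += 1

threads = [threading.Thread(target=add_many, args=(10_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(counter)  # 40000: all four threads wrote to the same variable
```

Separate processes would each get their own copy of `counter`, so they would need an explicit inter-process communication mechanism to combine their results.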

3. Describe the different types of operating systems.

There are several types of operating systems, including:

- Batch Operating Systems: These systems process batches of jobs without user interaction. Examples include early versions of IBM OS/360.

- Time-Sharing Operating Systems: These systems allow multiple users to share computer resources simultaneously. Examples include Unix.

- Distributed Operating Systems: These systems manage a group of distinct computers and make them appear to be a single computer. Examples include Amoeba and Plan 9 from Bell Labs.

- Real-Time Operating Systems: These systems are used for time-critical tasks. Examples include VxWorks.

Candidates should be able to categorize these types and provide examples. Look for clarity in their distinctions and practical knowledge of where each type is typically used.

4. What is virtual memory and how does it work?

Virtual memory is a memory management capability that provides an 'idealized abstraction of the storage resources that are actually available on a given machine.' This makes the system appear to have more RAM than it physically does by using disk space for additional memory.

It works by dividing each process's virtual address space into fixed-size pages and mapping those pages to frames of physical memory. When the system runs low on RAM, it moves less-used pages out to disk and brings them back when they are needed, so applications can keep running, although more slowly.

An ideal response will explain both the concept and the mechanism of virtual memory. Look for a candidate's understanding of how virtual memory improves system efficiency and allows for the running of larger applications.

5. How does the operating system manage hardware resources?

The operating system manages hardware resources through a combination of device drivers, which are software components that communicate with specific hardware, and resource management routines that allocate and deallocate resources as needed.

For example, the OS handles CPU scheduling to ensure that processes get CPU time. It also manages memory allocation, I/O operations, and storage management, ensuring that all hardware components work together seamlessly.

Candidates should emphasize the OS's role in coordinating hardware resources to optimize performance and maintain system stability. Look for examples and clear explanations of resource management techniques.

6. What are system calls and how are they used?

System calls are the interface between a process and the operating system. They provide a means for user programs to request services from the OS kernel, such as accessing hardware or memory management.

For example, when a program needs to read a file, it makes a system call to the OS, which then handles the actual reading from the hardware disk.

Ideal answers should include examples of common system calls such as open, read, write, and close. Look for understanding of how system calls facilitate communication between user applications and the OS.
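
As a rough illustration, Python's `os` module exposes thin wrappers around these calls. The sketch below writes and then reads a scratch file; the file name `demo.txt` is a made-up example, and the file lives in a temporary directory that is removed at the end.

```python
import os
import tempfile

# Work in a scratch directory so no real files are touched.
workdir = tempfile.mkdtemp()
path = os.path.join(workdir, "demo.txt")   # hypothetical file name

# open: ask the kernel for a file descriptor.
fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o644)
written = os.write(fd, b"hello kernel\n")  # write: returns the number of bytes written
os.close(fd)                               # close: release the descriptor

fd = os.open(path, os.O_RDONLY)
data = os.read(fd, 100)                    # read: returns at most 100 bytes
os.close(fd)

print(written, data)

# Clean up the scratch file and directory.
os.remove(path)
os.rmdir(workdir)
```

Higher-level helpers such as Python's built-in `open()` end up making the same kinds of requests to the kernel on the program's behalf.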

7. Explain the concept of a file system.

A file system is a way of organizing and storing files on a disk or other storage medium. It manages how data is stored, retrieved, and updated, ensuring that files are kept in a structured and accessible manner.

Different types of file systems include NTFS, FAT32, and ext4, each with its own features and use cases. The file system also manages metadata such as filenames, permissions, and dates.

Candidates should be able to explain the functions of a file system and mention different types. Look for knowledge of how file systems impact data management and access.
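
The metadata mentioned above can be inspected from a program. The following sketch, which assumes nothing beyond the Python standard library, creates a temporary file and asks the file system for its size, permission bits, and modification time.

```python
import os
import stat
import tempfile
import time

# Create a small scratch file to inspect.
with tempfile.NamedTemporaryFile(delete=False) as f:
    f.write(b"12345")
    path = f.name

info = os.stat(path)                  # metadata kept by the file system
size = info.st_size                   # length in bytes
mode = stat.filemode(info.st_mode)    # permission string in "ls -l" style
modified = time.ctime(info.st_mtime)  # last modification time, human readable

print(size, mode, modified)

os.remove(path)                       # clean up the scratch file
```

The exact permission string depends on the platform and on how the temporary file was created, so treat the printed values as examples rather than fixed results.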

8. What is a kernel and what are its functions?

The kernel is the core part of an operating system, responsible for managing system resources and facilitating communication between hardware and software components. It operates at a low level, interacting directly with the hardware.

The kernel's functions include process management, memory management, device management, and system call handling. It ensures that different applications and services run efficiently without interfering with each other.

Look for responses that highlight the kernel's critical role in system stability and performance. Ideal candidates should understand how the kernel operates as the OS's backbone.

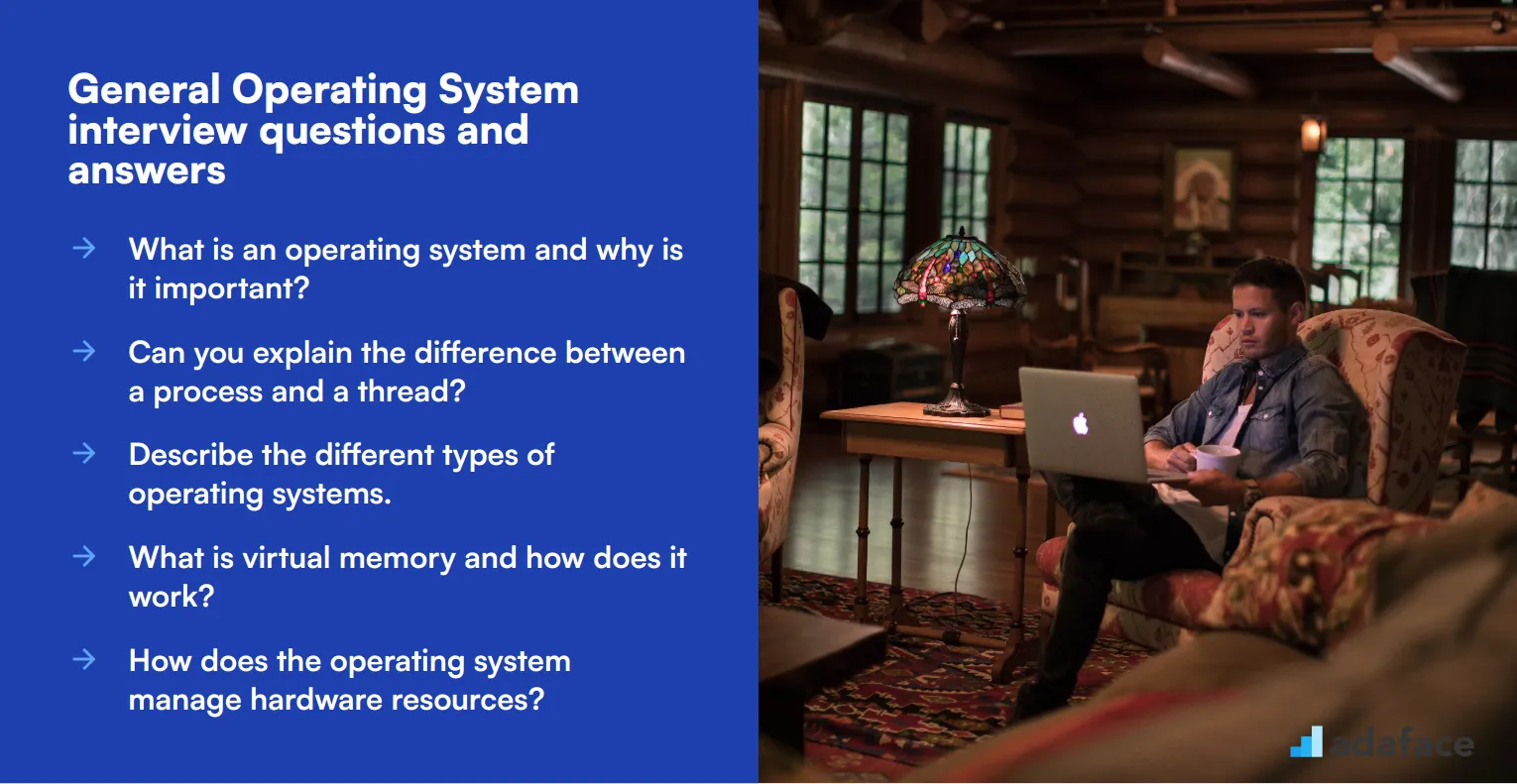

20 Operating System interview questions to ask junior administrators

When interviewing junior administrators for operating system roles, it's crucial to assess their foundational knowledge and practical skills. Use these questions to gauge candidates' understanding of basic OS concepts, troubleshooting abilities, and familiarity with common administrative tasks.

- How would you check system resource usage in a Linux environment?

- Explain the purpose of environment variables and how to set them.

- What steps would you take to troubleshoot a slow-running system?

- Describe the process of creating and managing user accounts in Windows or Linux.

- How do you schedule tasks to run automatically in an operating system?

- What is the significance of file permissions, and how do you modify them?

- Explain the concept of process priority and how it affects system performance.

- How would you go about updating the operating system and installed software?

- What is the purpose of a firewall, and how would you configure basic rules?

- Describe the steps to mount and unmount a filesystem in Linux.

- How do you view and manage running processes in an operating system?

- What is the role of a boot loader, and can you name a few examples?

- Explain the difference between a full backup and an incremental backup.

- How would you troubleshoot network connectivity issues at the OS level?

- What is the purpose of swap space, and how do you manage it?

- Describe the process of installing and configuring a new device driver.

- How do you monitor and analyze system logs for potential issues?

- What steps would you take to optimize system performance?

- Explain the concept of file fragmentation and how to address it.

- How would you secure an operating system against common threats?

7 advanced Operating System interview questions and answers to evaluate senior administrators

Ready to put your senior administrators to the test? These 7 advanced Operating System interview questions will help you evaluate the depth of their knowledge and problem-solving skills. Use these questions to assess candidates' ability to handle complex scenarios and their understanding of advanced OS concepts. Remember, the goal is to find a senior administrator who can navigate the intricacies of modern operating systems with ease.

1. Can you explain the concept of containerization and how it differs from traditional virtualization?

Containerization is a lightweight form of virtualization that allows applications to run in isolated environments called containers. Unlike traditional virtualization, in which each virtual machine runs its own guest operating system on virtualized hardware, containers share the host system's kernel and isolate the application processes from each other.

Key differences include:

- Resource efficiency: Containers have less overhead and start up faster than VMs

- Portability: Containers can run consistently across different environments

- Scalability: Containers can be easily scaled up or down based on demand

Look for candidates who can articulate the benefits of containerization in terms of efficiency, consistency, and scalability. They should also be able to discuss potential use cases and limitations compared to traditional VMs.

2. How does CPU scheduling work in a multi-core processor environment?

CPU scheduling in a multi-core processor environment involves distributing processes or threads across multiple processor cores to maximize system performance and efficiency. The scheduler must consider factors such as:

- Load balancing across cores

- Cache affinity (keeping processes on cores where their data is cached)

- Power management (utilizing or idling cores based on workload)

Advanced scheduling algorithms like Completely Fair Scheduler (CFS) in Linux or Windows' scheduler take these factors into account, often using hierarchical approaches to manage both per-core and system-wide scheduling.

A strong candidate should be able to discuss the challenges of multi-core scheduling, such as avoiding cache thrashing and maintaining fairness. They might also mention concepts like processor affinity and the differences between symmetric and asymmetric multiprocessing.
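
The tension between load balancing and cache affinity can be shown with a toy placement rule. This is a simplified illustration, not how CFS or the Windows scheduler is implemented; the function `pick_core` and the `max_imbalance` threshold are invented for the example.

```python
def pick_core(loads, last_core, max_imbalance=2):
    """Choose a core for a runnable task.

    loads: run-queue length of each core.
    last_core: index of the core the task last ran on, or None for a new task.
    max_imbalance: how much extra load we tolerate to keep the cache warm.
    """
    idlest = min(range(len(loads)), key=loads.__getitem__)
    if last_core is not None and loads[last_core] - loads[idlest] <= max_imbalance:
        return last_core  # stay put: the task's data is probably still cached here
    return idlest         # migrate: the imbalance outweighs the warm cache

print(pick_core([3, 1, 4, 1], last_core=0))     # 0: small imbalance, keep affinity
print(pick_core([6, 1, 4, 1], last_core=0))     # 1: core 0 is overloaded, migrate
print(pick_core([3, 1, 4, 1], last_core=None))  # 1: new task goes to the idlest core
```

Production schedulers weigh many more inputs, such as priorities, power states, and the cache topology shared between cores.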

3. Describe the concept of a microkernel and its advantages over a monolithic kernel.

A microkernel is a minimal operating system kernel that provides only basic features like address spaces, inter-process communication (IPC), and basic scheduling. Most OS services, including device drivers, file systems, and networking stacks, run in user space as separate processes.

Advantages of microkernels include:

- Improved stability: A failure in one service doesn't bring down the entire system

- Enhanced security: Services have limited access to the kernel

- Easier maintenance: Components can be updated independently

- Flexibility: The system can be more easily customized for different environments

Look for candidates who can contrast this with monolithic kernels, where all OS services run in kernel space. They should be able to discuss trade-offs, such as the potential performance overhead of IPC in microkernels versus the simplicity and speed of monolithic designs. Knowledge of real-world microkernel implementations like QNX or seL4 would be impressive.

4. How does Copy-on-Write (CoW) work in modern operating systems, and what are its benefits?

Copy-on-Write (CoW) is a resource management technique used in modern operating systems to optimize memory usage and improve performance. When a process forks or duplicates itself, instead of immediately copying all the memory pages, the OS initially shares the same physical memory between the parent and child processes.

The CoW mechanism works as follows:

- Pages are marked as read-only for both processes

- If either process attempts to modify a page, a page fault occurs

- The OS then creates a copy of the page for the modifying process

- The copy is made writable, and the process continues with its modification

Benefits of CoW include reduced memory usage, faster process creation, and more efficient use of system resources. A strong candidate should be able to explain how CoW impacts system performance, particularly in scenarios involving frequent process forking (like in web servers). They might also discuss how CoW is used in other contexts, such as file systems or container implementations.
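
The steps above can be imitated in a few lines of Python. This is a toy model under loud assumptions: the classes `Frame` and `Process` are hypothetical, a reference count stands in for the read-only page protection, and the check inside `write` stands in for the page fault handler.

```python
class Frame:
    """A physical page frame plus a count of the processes mapping it."""
    def __init__(self, data):
        self.data = bytearray(data)
        self.refs = 1

class Process:
    def __init__(self, pages):
        self.pages = pages  # list of Frame objects, standing in for a page table

    def fork(self):
        """Create a child that shares every frame instead of copying it."""
        for frame in self.pages:
            frame.refs += 1
        return Process(list(self.pages))

    def write(self, page_no, offset, value):
        frame = self.pages[page_no]
        if frame.refs > 1:
            # Simulated page fault: the frame is shared, so copy it first.
            frame.refs -= 1
            frame = Frame(frame.data)
            self.pages[page_no] = frame
        frame.data[offset] = value

parent = Process([Frame(b"AAAA"), Frame(b"BBBB")])
child = parent.fork()
child.write(0, 0, ord("Z"))  # only page 0 is copied

print(bytes(parent.pages[0].data), bytes(child.pages[0].data))  # b'AAAA' b'ZAAA'
print(parent.pages[1] is child.pages[1])                        # True: still shared
```

Only the page that was written to gets duplicated, which is why forking a large process can be cheap when the child modifies little of its memory.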

5. Explain the concept of a live kernel patch and its implications for system administration.

A live kernel patch, also known as dynamic kernel patching or hot patching, is a technique that allows kernel-level updates to be applied to a running system without requiring a reboot. This is particularly valuable for systems that require high availability or have strict uptime requirements.

The process typically involves:

- Preparing a patch module compatible with the running kernel

- Loading the patch module into the kernel

- Redirecting function calls to the new, patched versions

- Ensuring consistency by managing in-flight operations

An ideal candidate should discuss the benefits of live patching, such as reduced downtime and improved security posture, as well as potential risks like increased complexity and the need for careful testing. They should also be aware of tools and frameworks that support live patching, such as Ksplice, kpatch, or kGraft. Look for understanding of when live patching is appropriate and when a full system reboot might still be necessary.

6. How does NUMA (Non-Uniform Memory Access) architecture affect operating system design and performance?

NUMA (Non-Uniform Memory Access) is a computer memory design used in multiprocessor systems where the memory access time depends on the memory location relative to the processor. In NUMA systems, processors can access their local memory faster than non-local memory.

NUMA affects OS design and performance in several ways:

- Memory allocation: The OS must be NUMA-aware to allocate memory close to the CPU that will use it

- Process scheduling: Schedulers need to consider memory locality when assigning processes to CPUs

- Cache coherence: Maintaining cache consistency across NUMA nodes becomes more complex

- Memory migration: The OS may need to migrate memory pages between nodes to optimize access patterns

A strong candidate should be able to discuss the challenges of programming for NUMA systems and how modern OSes handle NUMA architectures. They might mention specific NUMA optimization techniques or tools for monitoring NUMA performance. Look for understanding of the trade-offs between memory access speed and overall system scalability in NUMA designs.

7. Describe the concept of a hypervisor and the differences between Type 1 and Type 2 hypervisors.

A hypervisor, also known as a Virtual Machine Monitor (VMM), is software that creates and manages virtual machines (VMs), allowing multiple operating systems to share a single hardware host. Hypervisors are fundamental to cloud computing and virtualization technologies.

Type 1 (Bare-metal) Hypervisors:

- Run directly on the host's hardware

- Offer better performance and security

- Examples: VMware ESXi, Microsoft Hyper-V, Xen

Type 2 (Hosted) Hypervisors:

- Run on top of a conventional operating system

- Easier to set up and manage

- Examples: VMware Workstation, Oracle VirtualBox, Parallels

A strong candidate should be able to discuss the pros and cons of each type, including use cases where one might be preferred over the other. They should also understand concepts like hardware-assisted virtualization and para-virtualization. Look for knowledge of current trends in virtualization technology and how hypervisors relate to container technologies.

12 Operating System questions related to system processes

To assess candidates' understanding of system processes in operating systems, use these 12 interview questions. They are designed to probe a software engineer's knowledge of process management, scheduling, and inter-process communication. These questions can help you evaluate an applicant's ability to work with and optimize system-level operations.

- How does the operating system handle context switching between processes?

- Explain the concept of process states and the transitions between them.

- What is a zombie process and how can it be prevented or removed?

- Describe the difference between preemptive and non-preemptive scheduling.

- How does inter-process communication work, and what are some common methods?

- What is process forking, and how does it differ from creating a new thread?

- Explain the concept of process synchronization and why it's important.

- How does the operating system handle deadlocks, and what strategies can prevent them?

- What is the purpose of process isolation, and how is it implemented?

- Describe the difference between a long-term and short-term scheduler in process management.

- How does the operating system handle priority inversion, and why is it a concern?

- Explain the concept of a process control block and its key components.

9 Operating System interview questions and answers related to memory management

Ready to dive into the memory maze of operating systems? These 9 memory management questions will help you gauge a candidate's understanding of this crucial aspect of OS functionality. Whether you're interviewing for a software engineer or a system analyst role, these questions will help you separate the RAM rookies from the memory masters.

1. Can you explain the concept of paging in memory management?

Paging is a memory management scheme that eliminates the need for contiguous allocation of physical memory. In this system, the operating system divides physical memory into fixed-size blocks called frames and logical memory into blocks of the same size called pages.

When a process is to be executed, its pages are loaded into any available memory frames from the backing store. The operating system keeps a page table for each process, which maps logical addresses to physical addresses.

Look for candidates who can explain how paging improves memory utilization and supports virtual memory. They should also be able to discuss the role of the Translation Lookaside Buffer (TLB) in speeding up address translation.
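
A small worked example of the address translation, assuming a 4 KiB page size and a made-up page table (both are illustrative choices, not values from any specific system):

```python
PAGE_SIZE = 4096                 # bytes per page and per frame (assumed)

page_table = {0: 5, 1: 2, 2: 7}  # page number -> frame number (hypothetical)

def translate(logical_address):
    """Map a logical address to a physical address through the page table."""
    page, offset = divmod(logical_address, PAGE_SIZE)
    if page not in page_table:
        raise LookupError(f"page fault: page {page} is not resident")
    return page_table[page] * PAGE_SIZE + offset

print(translate(0))     # 20480: page 0 maps to frame 5
print(translate(4100))  # 8196: page 1, offset 4, maps to frame 2
```

The offset within the page is carried over unchanged; only the page number is replaced by a frame number.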

2. What is thrashing in an operating system and how can it be prevented?

Thrashing is a phenomenon in virtual memory systems where the computer's performance severely degrades due to excessive paging. It occurs when a computer's virtual memory resources are overused, leading to a constant state of paging and page faults, inhibiting most application-level processing.

To prevent thrashing, several techniques can be employed:

- Increasing the number of page frames allocated to a process

- Implementing better page replacement algorithms

- Using a working set model to ensure a process has its most important pages in memory

- Implementing page buffering schemes

A strong candidate should be able to explain the causes of thrashing and discuss multiple prevention strategies. They might also touch on how thrashing relates to overall system performance and resource management.

3. Describe the difference between internal and external fragmentation.

Internal fragmentation occurs when memory is allocated in fixed-size blocks, and the allocated block is larger than the requested memory. The unused portion of the allocated block is wasted and cannot be used by other processes. This type of fragmentation is common in systems using fixed-size allocation units, such as some paging systems.

External fragmentation occurs when free memory is broken into small blocks by allocated memory blocks. Although the total amount of free memory may be sufficient to satisfy a request, it's not contiguous, and therefore can't be used. This type of fragmentation is common in systems that use variable-size allocations, such as in segmentation.

Look for candidates who can clearly differentiate between the two types and provide examples. They should also be able to discuss the impact of each type on memory utilization and potential mitigation strategies, such as compaction for external fragmentation or adjusting allocation unit sizes for internal fragmentation.
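
The two kinds of waste can be put into numbers with a short sketch. The block size, request sizes, and hole sizes below are arbitrary example values, and the helper names are invented for illustration.

```python
def internal_fragmentation(request, block_size):
    """Bytes wasted when a request is rounded up to whole fixed-size blocks."""
    blocks = -(-request // block_size)  # ceiling division
    return blocks * block_size - request

def fits_contiguously(holes, request):
    """True if any single free hole is large enough for the request."""
    return any(hole >= request for hole in holes)

# Internal: 10,000 bytes in 4,096-byte blocks needs 3 blocks (12,288 bytes).
print(internal_fragmentation(10_000, 4096))  # 2288 bytes wasted

# External: 1,000 bytes are free in total, but no hole can hold 600 bytes.
holes = [300, 200, 500]
print(sum(holes), fits_contiguously(holes, 600))  # 1000 False
```

Compaction would merge the three holes into one 1,000-byte region, after which the 600-byte request would succeed.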

4. What is the purpose of a Translation Lookaside Buffer (TLB) in memory management?

The Translation Lookaside Buffer (TLB) is a memory cache that stores recent translations of virtual memory to physical memory addresses. It's part of the Memory Management Unit (MMU) and acts as a fast lookup hardware cache.

The main purpose of the TLB is to speed up virtual address translation. When a virtual address needs to be translated to a physical address, the system first checks the TLB. If the mapping is found (a TLB hit), the translation can be done quickly. If not (a TLB miss), the system needs to walk through the page tables, which is much slower.

A good candidate should understand that the TLB significantly improves system performance by reducing the time required for address translation. They might also discuss TLB management issues such as handling context switches or maintaining consistency with page tables.
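
The benefit can be estimated with the usual textbook model of effective access time. The sketch below assumes a single-level page table, so a TLB miss costs exactly one extra memory access; the timings of 1 ns and 100 ns are illustrative figures, not measurements.

```python
def effective_access_time(hit_ratio, tlb_ns, memory_ns):
    """Average memory access time with a TLB, assuming a one-level page table."""
    hit_cost = tlb_ns + memory_ns       # TLB lookup, then the data access
    miss_cost = tlb_ns + 2 * memory_ns  # extra access to read the page table entry
    return hit_ratio * hit_cost + (1 - hit_ratio) * miss_cost

eat = effective_access_time(hit_ratio=0.9, tlb_ns=1, memory_ns=100)
print(round(eat, 1))  # 111.0 ns, versus 201 ns if every lookup missed
```

With multi-level page tables a miss costs several memory accesses, so the gap between hits and misses is larger than this simple model suggests.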

5. How does the Least Recently Used (LRU) page replacement algorithm work?

The Least Recently Used (LRU) page replacement algorithm is based on the idea that pages that have been used recently will likely be used again in the near future. When a page fault occurs and no free frames are available, LRU selects for replacement the page that has not been used for the longest period of time.

To implement LRU, the system must keep track of when each page was last used. This can be done using a stack or a reference matrix. When a page is referenced, it's moved to the top of the stack or its row/column is updated in the matrix. When a page needs to be replaced, the bottom of the stack or the least recently used page according to the matrix is chosen.

Look for candidates who can explain the principle behind LRU and describe at least one implementation method. They should also be able to discuss the advantages (good performance for many common access patterns) and disadvantages (overhead of keeping track of page usage) of this algorithm.
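
The stack-style approach can be simulated with an ordered dictionary. This is a teaching sketch rather than how a kernel does it (real systems usually approximate LRU with hardware reference bits); the function name `lru_faults` is made up for the example.

```python
from collections import OrderedDict

def lru_faults(references, frame_count):
    """Count page faults for a reference string under LRU replacement."""
    frames = OrderedDict()  # resident pages, least recently used first
    faults = 0
    for page in references:
        if page in frames:
            frames.move_to_end(page)    # hit: mark as most recently used
            continue
        faults += 1                     # miss: the page must be loaded
        if len(frames) >= frame_count:
            frames.popitem(last=False)  # evict the least recently used page
        frames[page] = True
    return faults

print(lru_faults([1, 2, 3, 1, 4, 2], frame_count=3))  # 5
```

In that run, page 1 is a hit on its second reference, page 4 evicts page 2, and the final reference to page 2 faults again and evicts page 3.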

6. What is the working set model in memory management?

The working set model is a memory management concept that aims to improve system performance by keeping a process's most actively used pages in memory. The working set of a process is defined as the collection of pages that the process is actively using during a particular time window.

The model operates on the principle of locality, which suggests that a process tends to use a relatively small portion of its address space at any given time. By ensuring that a process's working set is in memory before the process starts executing, the system can reduce page faults and improve overall performance.

A strong candidate should be able to explain how the working set size changes over time and how it relates to thrashing prevention. They might also discuss how the working set model influences decisions about process scheduling and memory allocation in multiprogramming environments.
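
One way to picture the working set is to compute it over a sliding window of recent references. In this sketch the reference string and the window of four references are arbitrary example values, and `working_set` is a hypothetical helper.

```python
def working_set(references, t, window):
    """Distinct pages touched in the last `window` references ending at index t."""
    start = max(0, t - window + 1)
    return set(references[start:t + 1])

refs = [1, 2, 1, 3, 4, 4, 4, 5]

print(working_set(refs, t=3, window=4))  # {1, 2, 3}
print(working_set(refs, t=7, window=4))  # {4, 5}
```

The set shrinks from three pages to two as the process moves into a phase with tighter locality, which is the kind of change an allocator could use to reclaim frames.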

7. Explain the concept of demand paging.

Demand paging is a method of virtual memory management where pages are loaded into main memory from secondary storage only when they are required or 'demanded' by the program. This is in contrast to systems that attempt to predict which pages will be needed and load them in advance.

In a demand paging system, when a process tries to access a page that isn't currently in memory, a page fault occurs. The operating system then suspends the process, locates the required page in secondary storage, loads it into a free frame in main memory, updates the page table, and resumes the process.

Look for candidates who understand that demand paging allows a process to run even when its entire memory space is not in physical memory. They should be able to discuss the trade-offs involved, such as the overhead of handling page faults versus the efficient use of memory. A good answer might also touch on how demand paging relates to the principle of locality.

8. What is the difference between physical and virtual memory?

Physical memory refers to the actual RAM installed in a computer. It's the hardware where data and instructions are stored for quick access by the CPU. Physical memory is finite and its size is determined by the amount of RAM installed.

Virtual memory, on the other hand, is an abstraction of physical memory implemented by the operating system. It provides an 'idealized abstraction of the storage resources that are actually available on a given machine' which 'creates the illusion to users of a very large (main) memory.' Virtual memory combines RAM with disk space used as a backing store, so processes can be given a larger address space than physical memory alone could hold.

A strong candidate should be able to explain how virtual memory allows for efficient use of physical memory, enables isolation between processes, and supports features like demand paging. They might also discuss the role of the Memory Management Unit (MMU) in translating between virtual and physical addresses.

9. How does memory segmentation differ from paging?

Memory segmentation and paging are both memory management schemes, but they operate on different principles:

Segmentation divides memory into segments of varying sizes, each with a specific purpose (like code, data, stack). Each segment is a logically separate unit, and processes reference memory using a segment identifier and an offset within the segment. Segmentation can lead to external fragmentation.

Paging, on the other hand, divides memory into fixed-size pages. The logical address space of a process is divided into pages of the same size, which can be mapped to any available frame in physical memory. Paging can lead to internal fragmentation but eliminates external fragmentation.

Look for candidates who can articulate the pros and cons of each approach. They should understand that segmentation aligns better with how programmers view memory (as distinct logical units) while paging is more efficient from the system's perspective. A good answer might also touch on how modern systems often combine aspects of both schemes.
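
Segment-based addressing can be sketched as a lookup of a base and a limit followed by a bounds check. The segment names, base addresses, and limits below are invented for illustration.

```python
# Hypothetical segment table: name -> (base address, limit in bytes)
segment_table = {
    "code":  (0,    1200),
    "data":  (4000, 800),
    "stack": (9000, 500),
}

def translate_segment(segment, offset):
    """Translate (segment, offset) to a physical address, enforcing the limit."""
    base, limit = segment_table[segment]
    if not 0 <= offset < limit:
        raise IndexError(f"offset {offset} is outside segment '{segment}'")
    return base + offset

print(translate_segment("data", 100))  # 4100
```

Because each segment has its own length, the limit check is part of every translation, whereas with paging the offset is always smaller than the fixed page size.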

9 situational Operating System interview questions with answers for hiring top administrators

Ready to find your next stellar OS administrator? These 9 situational questions will help you dive deep into a candidate's problem-solving skills and practical knowledge. Use them to assess how well potential hires can handle real-world scenarios and keep your systems running smoothly. Remember, the best administrators don't just know theory; they shine when the unexpected happens!

1. You've just received an alert that one of your critical servers is running out of disk space. How would you handle this situation?

A strong candidate should outline a systematic approach to addressing this issue:

- Quickly assess the severity of the situation by checking the current disk usage and rate of consumption.

- Identify large files or directories that can be safely removed or archived.

- Use disk cleanup tools to remove temporary files and clear caches.

- If necessary, expand the disk space either physically or by extending logical volumes.

- Implement monitoring to prevent future occurrences.

Look for candidates who prioritize immediate action to prevent system failure while also considering long-term solutions. A great answer might include mentioning the importance of root cause analysis to prevent similar issues in the future.
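
The first two steps can be scripted. The sketch below is one possible approach using only the Python standard library; the helper names `percent_used` and `largest_files` are hypothetical, and in practice many administrators would reach for tools such as `df` and `du` instead.

```python
import os
import shutil

def percent_used(path):
    """Percentage of the file system containing `path` that is in use."""
    usage = shutil.disk_usage(path)
    return 100 * usage.used / usage.total

def largest_files(root, count=5):
    """Return the `count` largest files under `root` as (size, path) pairs."""
    sizes = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            try:
                sizes.append((os.path.getsize(full), full))
            except OSError:
                continue  # file vanished or is unreadable; skip it
    return sorted(sizes, reverse=True)[:count]
```

For example, `percent_used("/")` reports how full the root file system is, and `largest_files("/var/log", 10)` would list the ten biggest files under a log directory, assuming the script has permission to read it.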

2. A user reports that their computer is unusually slow. Walk me through your troubleshooting process.

An effective troubleshooting process for this scenario might include:

- Gather information from the user about when the problem started and any recent changes.

- Check system resource usage (CPU, memory, disk I/O) to identify bottlenecks.

- Scan for malware and check for unnecessary startup programs.

- Review recent software installations or updates that might be causing issues.

- Check hardware health, including hard drive SMART status and temperature readings.

- If necessary, perform a clean boot to isolate the problem.

The ideal candidate should demonstrate a logical, step-by-step approach to problem-solving. They should also show good communication skills in explaining technical concepts to users and the ability to prioritize actions based on the severity of the issue.

3. How would you go about implementing a new backup strategy for a medium-sized company?

Implementing a new backup strategy requires careful planning and execution. A strong answer might include the following steps:

- Assess current data and systems to determine backup needs.

- Define recovery point objectives (RPO) and recovery time objectives (RTO).

- Choose appropriate backup methods (full, incremental, differential) and media.

- Select and implement backup software that meets the company's needs.

- Set up a regular backup schedule and automate where possible.

- Implement off-site or cloud backups for disaster recovery.

- Regularly test backups to ensure data can be successfully restored.

- Document the backup process and train relevant staff.

Look for candidates who emphasize the importance of aligning the backup strategy with business needs and regulatory requirements. They should also mention the need for ongoing monitoring and adjustment of the backup system as the company's data needs evolve.
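
The difference between a full and an incremental backup comes down to which files are selected. Here is a minimal sketch that selects by modification time; the function `files_to_back_up` is hypothetical, and real backup software typically tracks changes more robustly (for example with catalogs or snapshots).

```python
import os

def files_to_back_up(root, last_backup_time=None):
    """Select files for a backup run.

    With last_backup_time=None every file is selected (a full backup).
    Otherwise only files modified after that timestamp are selected
    (an incremental backup).
    """
    selected = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            if last_backup_time is None or os.path.getmtime(full) > last_backup_time:
                selected.append(full)
    return sorted(selected)
```

A scheduled job could call this with `None` once a week and with the timestamp of the previous run on the other days, then copy the selected files to the backup target.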

4. You've discovered a security vulnerability in a critical system. How would you approach patching it without disrupting business operations?

Addressing a security vulnerability while minimizing disruption requires a careful, methodical approach:

- Assess the severity of the vulnerability and potential impact if exploited.

- Develop a detailed patching plan, including rollback procedures.

- Create a test environment to verify the patch doesn't introduce new issues.

- Schedule the patch installation during off-hours or a maintenance window.

- Communicate with stakeholders about the planned maintenance.

- Apply the patch in stages, starting with non-critical systems.

- Monitor systems closely after patching for any unexpected behavior.

- Document the process and update security protocols as needed.

The ideal candidate should demonstrate a balance between security urgency and operational stability. They should also mention the importance of change management processes and clear communication with all affected parties throughout the patching process.

5. How would you approach migrating a critical application from on-premises to the cloud?

Migrating a critical application to the cloud requires careful planning and execution. A strong answer might include these steps:

- Assess the current application architecture and dependencies.

- Choose the appropriate cloud service model (IaaS, PaaS, or SaaS).

- Develop a detailed migration plan, including timelines and resource allocation.

- Set up a test environment in the cloud to validate application functionality.

- Plan for data migration, considering volume and sensitivity of data.

- Implement necessary security measures for the cloud environment.

- Train staff on new cloud management tools and processes.

- Execute the migration in phases, if possible, to minimize disruption.

- Perform thorough testing post-migration.

- Monitor application performance and costs in the new cloud environment.

Look for candidates who emphasize the importance of thorough planning, risk assessment, and having a rollback strategy. They should also mention considerations like compliance requirements, network latency, and potential cost optimizations in the cloud environment.

6. A critical service is experiencing intermittent outages. How would you investigate and resolve this issue?

Investigating intermittent outages requires a systematic approach:

- Gather data on when outages occur and their duration.

- Check system logs for error messages or unusual patterns.

- Monitor resource usage (CPU, memory, network) during outages.

- Review recent changes to the system or related services.

- Use diagnostic tools to capture system state during an outage.

- If possible, try to reproduce the issue in a test environment.

- Analyze collected data to identify potential root causes.

- Implement a fix and monitor closely to confirm resolution.
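The first step, gathering data on when outages occur, is often where a pattern shows up. As a rough sketch, assuming outage start times are available as ISO 8601 strings (the function name `outages_by_hour` is made up for this example), grouping them by hour of day can reveal a link to a scheduled job or a traffic peak:

```python
from collections import Counter
from datetime import datetime
from typing import Iterable, List, Tuple


def outages_by_hour(timestamps: Iterable[str]) -> List[Tuple[int, int]]:
    """Count outages per hour of day, most frequent hour first."""
    counts = Counter(datetime.fromisoformat(ts).hour for ts in timestamps)
    # Sort by count descending, then by hour so ties come out in a stable order.
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))
```

If most outages fall in the same hour, the next thing to check is what else runs at that time, such as backups, cron jobs, or batch imports.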

The ideal candidate should demonstrate patience and persistence in troubleshooting intermittent issues. They should also mention the importance of clear documentation throughout the investigation process and the potential need for collaboration with other teams or vendors to resolve complex problems.

7. How would you approach capacity planning for a rapidly growing e-commerce platform?

Effective capacity planning for a growing e-commerce platform involves several key steps:

- Analyze current resource usage and growth trends.

- Identify peak usage periods (e.g., holiday seasons) and plan for them.

- Use load testing tools to simulate increased traffic and identify bottlenecks.

- Implement performance monitoring tools to track key metrics.

- Consider adopting auto-scaling solutions for cloud-based resources.

- Plan for both vertical scaling (upgrading hardware) and horizontal scaling (adding more servers).

- Optimize database queries and implement caching where appropriate.

- Consider content delivery networks (CDNs) for static content.

- Regularly review and update the capacity plan as the business grows.
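To make the trend analysis concrete, here is a simplified sketch that fits a straight line to past peak load and converts the projection into a server count. It assumes evenly spaced samples and linear growth, which real traffic rarely follows exactly; `linear_forecast`, `servers_needed`, and the 30% default headroom are illustrative choices, not recommendations.

```python
import math
from typing import Sequence


def linear_forecast(history: Sequence[float], periods_ahead: int) -> float:
    """Fit a least-squares line to evenly spaced samples and extrapolate."""
    n = len(history)
    if n < 2:
        raise ValueError("need at least two samples to fit a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(history) / n
    slope_num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(history))
    slope_den = sum((x - mean_x) ** 2 for x in range(n))
    slope = slope_num / slope_den
    intercept = mean_y - slope * mean_x
    # The last sample sits at x = n - 1, so project from there.
    return intercept + slope * (n - 1 + periods_ahead)


def servers_needed(peak_rps: float, rps_per_server: float, headroom: float = 0.3) -> int:
    """Servers required to serve peak_rps while keeping `headroom` spare capacity."""
    usable = rps_per_server * (1 - headroom)
    return math.ceil(peak_rps / usable)
```

A candidate who works through something like this should also point out its limits: seasonal peaks and marketing events break a linear trend, which is why load testing and regular plan reviews remain on the list.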

Look for candidates who emphasize the importance of proactive planning and continuous monitoring. They should also mention the need to balance performance requirements with cost considerations and the potential benefits of cloud elasticity for handling variable loads.

8. You're tasked with improving the overall security posture of your organization's IT infrastructure. Where would you start?

Improving an organization's security posture is a comprehensive task that requires a strategic approach:

- Conduct a thorough security audit to identify vulnerabilities.

- Implement or update a robust access control system, including multi-factor authentication.

- Ensure all systems and software are up-to-date with the latest security patches.

- Implement network segmentation to limit the potential spread of breaches.

- Set up comprehensive logging and monitoring systems.

- Develop and enforce strong password policies.

- Implement encryption for data at rest and in transit.

- Conduct regular security awareness training for all employees.

- Develop and test an incident response plan.

- Implement regular vulnerability scanning and penetration testing.

The ideal candidate should demonstrate a holistic view of security, balancing technical solutions with policy and training. They should also mention the importance of staying informed about emerging threats and the need for continuous improvement in security practices.

9. How would you approach implementing a new configuration management system across a large, diverse IT environment?

Implementing a new configuration management system requires careful planning and execution:

- Assess the current environment and identify all systems to be managed.

- Choose an appropriate configuration management tool based on the organization's needs.

- Develop a detailed implementation plan, including timelines and resource allocation.

- Start with a pilot project on a small subset of systems.

- Develop standardized configurations for different types of systems.

- Implement version control for configuration files.

- Gradually roll out the system across the environment, starting with less critical systems.

- Provide training for IT staff on using the new system.

- Implement monitoring and reporting to track the effectiveness of the system.

- Regularly review and update configurations to ensure they remain aligned with business needs.
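The core idea behind configuration management, comparing a standardized baseline against what is actually on a system, can be shown in a few lines. This is only a sketch of drift detection with a hypothetical `config_drift` helper and plain dictionaries; real tools handle templating, ordering, and remediation as well.

```python
from typing import Any, Dict

ABSENT = "<absent>"  # marker for a key that is missing on one side


def config_drift(desired: Dict[str, Any], actual: Dict[str, Any]) -> Dict[str, Dict[str, Any]]:
    """Return every key whose actual value differs from the desired baseline."""
    drift: Dict[str, Dict[str, Any]] = {}
    for key in sorted(set(desired) | set(actual)):
        want = desired.get(key, ABSENT)
        have = actual.get(key, ABSENT)
        if want != have:
            drift[key] = {"desired": want, "actual": have}
    return drift
```

Reporting drift before enforcing it is a common way to run the pilot phase, since it shows how far existing systems are from the baseline without changing anything.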

Look for candidates who emphasize the importance of standardization and automation in configuration management. They should also mention the potential challenges of implementing such a system in a diverse environment and strategies for overcoming resistance to change.

Which Operating System skills should you evaluate during the interview phase?

While it's impossible to fully gauge a candidate's expertise in a single interview, focusing on core Operating System skills during the interview phase is invaluable. This approach allows you to assess essential capabilities that are indicative of the candidate's ability to perform in real-world scenarios.

Linux Administration

Linux dominates the server environment, making Linux administration skills fundamental for system administrators. Mastery of Linux commands and an understanding of how the system behaves under various loads are critical for optimizing performance and ensuring security.

Consider using a Linux Administration test to pre-screen candidates. This can help ensure that only knowledgeable candidates proceed to the interview stage. We recommend using the Linux Administration Test from Adaface's library.

In addition to the assessment test, a targeted interview question can further help assess their practical knowledge.

Explain how you would find and terminate a process that is using a specific port in Linux.

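Look for detailed knowledge of commands such as lsof (for example, lsof -i :8080), ss, or fuser to identify the process ID, and kill to stop the process. Strong candidates send SIGTERM first and reserve kill -9 (SIGKILL) for processes that refuse to exit. A clear, accurate walk-through of these steps reflects real understanding.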
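For interviewers who want a reference point, the same steps can be scripted. The sketch below assumes lsof is installed and uses its `-t` (PIDs only), `-iTCP:<port>`, and `-sTCP:LISTEN` options; the function names are invented for this example, and `terminate` sends SIGTERM so the process gets a chance to shut down cleanly.

```python
import os
import signal
import subprocess
from typing import List


def parse_lsof_pids(output: str) -> List[int]:
    """Parse the PID-per-line output of `lsof -t` into unique, sorted integers."""
    return sorted({int(token) for token in output.split() if token.isdigit()})


def pids_on_port(port: int) -> List[int]:
    """Ask lsof which processes are listening on a TCP port."""
    result = subprocess.run(
        ["lsof", "-t", f"-iTCP:{port}", "-sTCP:LISTEN"],
        capture_output=True,
        text=True,
        check=False,  # lsof exits non-zero when nothing matches
    )
    return parse_lsof_pids(result.stdout)


def terminate(pids: List[int]) -> None:
    """Send SIGTERM to each PID; escalate to SIGKILL manually only if needed."""
    for pid in pids:
        os.kill(pid, signal.SIGTERM)
```

Listing processes owned by other users generally requires root privileges, which is a detail good candidates tend to mention unprompted.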

Memory Management

Effective memory management is central to system performance and stability. Candidates must understand how operating systems handle memory allocation, paging, and segmentation.

To assess understanding in this area, present a specific scenario during the interview.

Describe a situation where modifying the paging file size might improve system performance. What are the risks?

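Candidates should discuss memory-intensive workloads that exhaust physical RAM, where a paging file that is too small leads to out-of-memory errors. They should also name the risks: a larger paging file consumes disk space and does not fix the underlying shortage, because paging to disk is far slower than RAM and heavy paging can degrade performance or stability.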

Process Synchronization

Process synchronization prevents race conditions in concurrent executions. Candidates need a solid grasp of semaphores, mutexes, and deadlocks to manage processes efficiently.

To dig deeper into their expertise, pose a question that tests their practical application of theory.

Can you explain how a mutex differs from a semaphore and provide a real-world application for each?

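The candidate's answer should explain that a mutex enforces mutual exclusion, so only one thread at a time enters a critical section, while a semaphore maintains a count and can be used for signaling between threads or for limiting access to a pool of resources. Real-world examples demonstrate their ability to apply theoretical knowledge effectively.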
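A short example can anchor the discussion. In this sketch, Python's `threading.Lock` stands in for a mutex protecting a shared counter, and `threading.Semaphore` caps how many workers use a limited resource at once. The `run_demo` function and its parameters are illustrative, and the short sleep is only a placeholder for real work.

```python
import threading
import time
from typing import Tuple


def run_demo(workers: int = 4, increments: int = 1000, slots: int = 2) -> Tuple[int, int]:
    """Return (final counter value, peak number of workers inside the semaphore)."""
    counter = 0
    active = 0
    peak_active = 0
    counter_lock = threading.Lock()      # mutex guarding `counter`
    stats_lock = threading.Lock()        # mutex guarding `active` and `peak_active`
    pool = threading.Semaphore(slots)    # at most `slots` workers inside at once

    def worker() -> None:
        nonlocal counter, active, peak_active
        for _ in range(increments):
            with counter_lock:           # one thread at a time updates the counter
                counter += 1
        with pool:                       # blocks while all slots are taken
            with stats_lock:
                active += 1
                peak_active = max(peak_active, active)
            time.sleep(0.01)             # placeholder for using the limited resource
            with stats_lock:
                active -= 1

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter, peak_active
```

With the defaults, the counter always ends at 4000 because the lock serializes updates, and the peak never exceeds 2 because the semaphore admits only two workers at a time. A database connection pool is a typical real-world fit for the semaphore half of the answer.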

Hire the Best Operating System Experts with Adaface

If you are looking to hire someone with Operating System skills, you need to verify those skills accurately. A structured approach is key.

The best way to do this is to use skill tests. Adaface offers relevant tests such as the Linux Online Test and the System Administration Online Test.

Once you use these tests, you can shortlist the best applicants and call them for interviews. This ensures you only spend time with the most qualified candidates.

To get started, visit our test library or sign up to begin assessing candidates with Adaface.


Operating System Interview Questions FAQs

Key areas include general OS concepts, system processes, memory management, and situational problem-solving scenarios.

Use a mix of junior, advanced, and situational questions to gauge candidates' knowledge depth and practical experience.

For junior admins, focus on basic OS concepts, common commands, and simple troubleshooting scenarios.

Use advanced questions on complex system processes, memory management, and challenging situational problems to assess senior-level expertise.

Situational questions help evaluate a candidate's problem-solving skills and ability to apply knowledge in real-world scenarios.
