Neural Networks are at the forefront of AI and machine learning technologies, making them a crucial skill set for various tech roles. Interviewing candidates for these roles requires sharp, insightful questions that gauge their understanding of and proficiency in Neural Networks, much like the skills required of a machine learning engineer.

This blog post provides categorized lists of Neural Networks interview questions and answers tailored for different levels of engineers, from basic to advanced. Whether you're assessing trainees, junior engineers, or seasoned professionals, you'll find questions suited to your needs.

By using these questions, you can streamline your recruitment process and easily identify the most qualified candidates. For a more comprehensive evaluation, consider utilizing our Neural Networks test before proceeding with interviews.

15 basic Neural Networks interview questions and answers to assess applicants

To evaluate whether your applicants have the right foundational knowledge of neural networks, use these 15 basic interview questions. This list can help you assess their understanding and identify the best candidates for roles that require a strong grasp of neural network concepts, such as a machine learning engineer.

- Can you explain what a neural network is in simple terms?

- What is the difference between a single-layer and a multi-layer neural network?

- How do you define an activation function in a neural network?

- Why is backpropagation important in training neural networks?

- What is the purpose of a loss function in a neural network?

- Can you describe the concept of overfitting and how to prevent it?

- What are the roles of weights and biases in a neural network?

- How does a convolutional neural network (CNN) differ from a traditional neural network?

- What is a recurrent neural network (RNN) and when would you use it?

- Can you explain the vanishing gradient problem and how to solve it?

- What are dropout layers and why are they useful?

- How do you choose the number of hidden layers and nodes in a neural network?

- What is the significance of learning rate in the training process?

- Can you explain the concept of regularization in neural networks?

- What are some common optimization algorithms used in neural network training?

8 Neural Networks interview questions and answers to evaluate junior engineers

To assess whether your junior engineer candidates have a fundamental understanding of neural networks, use these targeted interview questions. Designed to spark insightful conversations, they will help you gauge candidates' grasp of the essential concepts without diving too deep into technical jargon.

1. How would you explain the concept of a neural network to someone without a technical background?

A neural network is a system that tries to mimic the way the human brain works. It consists of layers of neurons, which are essentially nodes that process and pass on information. The idea is to feed data into the input layer, process it through hidden layers, and finally get the output, which is the prediction or classification made by the network.

Look for candidates who can break down complex concepts into simple terms. An ideal response would simplify the technical jargon and make the concept accessible to someone unfamiliar with the field. This shows their ability to communicate effectively and ensure understanding.

2. What is the difference between supervised and unsupervised learning?

Supervised learning involves training a neural network with labeled data, meaning the input data is paired with the correct output. The network learns to make predictions or classifications based on this labeled data. In contrast, unsupervised learning uses data without labels, and the network tries to find patterns or groupings within the data on its own.

Candidates should demonstrate a clear understanding of both learning types and how they are applied in different scenarios. An ideal answer will also touch on examples, such as classification for supervised learning and clustering for unsupervised learning.

3. Can you describe a scenario where you might prefer using a neural network over traditional machine learning algorithms?

Neural networks are particularly useful when dealing with large datasets and complex patterns, such as image and speech recognition. Traditional machine learning algorithms might struggle with these kinds of tasks because they often require manual feature extraction, whereas neural networks can automatically detect and learn these features.

Look for candidates who can provide specific examples where neural networks outperform traditional methods. This shows their practical understanding of when and why to use neural networks in real-world applications.

4. How do you decide the architecture of a neural network for a specific problem?

Deciding the architecture of a neural network involves understanding the problem at hand and the nature of the data. For instance, convolutional neural networks (CNNs) are typically used for image data, while recurrent neural networks (RNNs) are better suited for sequential data like time series or text. Factors like the number of layers, types of layers, and number of neurons per layer are also crucial considerations.

The ideal response should reflect a thoughtful process, considering the specific requirements of the problem and the characteristics of the dataset. Candidates should mention the importance of experimenting and iterating on different architectures to find the best solution.

5. What are some common challenges you might face when training a neural network?

Common challenges include overfitting, where the model performs well on training data but poorly on new data, and the vanishing gradient problem, where gradients become too small for effective training. Additionally, finding the right hyperparameters, such as learning rate and batch size, can be difficult.

Candidates should be able to identify these challenges and discuss potential solutions, such as using dropout layers to prevent overfitting, or ReLU-family activations, careful weight initialization, and residual connections to ease the vanishing gradient problem (gradient clipping, by contrast, targets exploding gradients). This demonstrates their problem-solving skills and familiarity with neural network training.

6. How can you evaluate the performance of a neural network?

The performance of a neural network can be evaluated using metrics like accuracy, precision, recall, and F1 score for classification tasks. For regression tasks, metrics like mean squared error (MSE) and mean absolute error (MAE) are commonly used. Additionally, visualizing loss and accuracy curves over epochs can help understand the model's learning process.

Look for candidates who can explain these metrics and their relevance to different types of tasks. An ideal answer would also mention the importance of using a validation set to evaluate the model's performance and avoid overfitting.
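As a reference point when listening to answers, the classification metrics named above can be computed from the four confusion-matrix counts. The following is a minimal sketch for a binary task; the function name `classification_metrics` and the sample labels are illustrative assumptions, not part of any library.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Return accuracy, precision, recall and F1 for a binary task."""
    pairs = list(zip(y_true, y_pred))
    # Count the confusion-matrix cells.
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = len(pairs) - tp - fp - fn

    accuracy = (tp + tn) / len(pairs)
    # Guard against division by zero when a class is never predicted or never present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Hypothetical validation labels and predictions.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

In practice these numbers should come from a held-out validation or test set rather than the training data, which is the point the ideal answer makes.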

7. What steps would you take to improve a poorly performing neural network?

To improve a poorly performing neural network, one could try techniques like tuning hyperparameters, adding more data, using data augmentation, or experimenting with different network architectures. Regularization techniques such as dropout and L2 regularization can also help prevent overfitting.

Candidates should show a systematic approach to troubleshooting and improving neural network performance. They should mention the importance of monitoring metrics and iterating on different strategies to achieve better results.
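Two of the regularization techniques mentioned above are small enough to sketch directly. The snippet below is an illustrative, library-free version of inverted dropout and an L2 penalty; the function names and the `rate` and `lam` parameters are assumptions chosen for this example, and real frameworks provide their own equivalents.

```python
import random


def dropout(activations, rate, training=True, rng=random):
    """Inverted dropout: zero each unit with probability `rate` (0 <= rate < 1)
    and rescale the survivors so the expected activation stays the same."""
    if not training or rate == 0.0:
        return list(activations)
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


def l2_penalty(weights, lam):
    """L2 regularization term added to the loss: lam * sum of squared weights."""
    return lam * sum(w * w for w in weights)


hidden = [1.0, 2.0, 3.0, 4.0]
print(dropout(hidden, rate=0.5, rng=random.Random(0)))  # some units zeroed, others doubled
print(dropout(hidden, rate=0.5, training=False))        # unchanged at inference time
print(l2_penalty([1.0, -2.0], lam=0.1))
```

A candidate who understands dropout should be able to explain why it is switched off at inference time and why the surviving activations are rescaled during training.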

8. Can you explain the importance of data preprocessing in training a neural network?

Data preprocessing is crucial because neural networks are sensitive to the quality and scale of input data. Steps like normalizing data, handling missing values, and encoding categorical variables help ensure that the network can learn effectively. Preprocessed data leads to faster convergence and better performance.

The ideal response should reflect a thorough understanding of data preprocessing techniques and their impact on neural network training. Candidates should also mention that good preprocessing practices can significantly reduce the risk of overfitting and improve generalization.
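The three preprocessing steps named above (handling missing values, normalizing, and encoding categorical variables) can be sketched with the standard library alone. The helper names and sample columns below are hypothetical and only meant to show the shape of each step.

```python
import statistics


def impute_mean(values):
    """Replace None entries with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    mean = statistics.fmean(observed)
    return [mean if v is None else v for v in values]


def standardize(values):
    """Scale a numeric column to zero mean and unit variance."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    if std == 0:
        return [0.0 for _ in values]  # constant column carries no signal
    return [(v - mean) / std for v in values]


def one_hot(labels):
    """Encode categorical labels as one-hot vectors (categories in sorted order)."""
    categories = sorted(set(labels))
    index = {c: i for i, c in enumerate(categories)}
    return [[1 if index[label] == i else 0 for i in range(len(categories))]
            for label in labels]


ages = impute_mean([20.0, None, 40.0])   # missing value filled with the mean
scaled = standardize(ages)
colors = one_hot(["red", "blue", "red"])
print(ages, scaled, colors)
```

One detail worth probing in an interview: the mean and standard deviation should be computed on the training split only and then reused for validation and test data, otherwise information leaks across the split.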

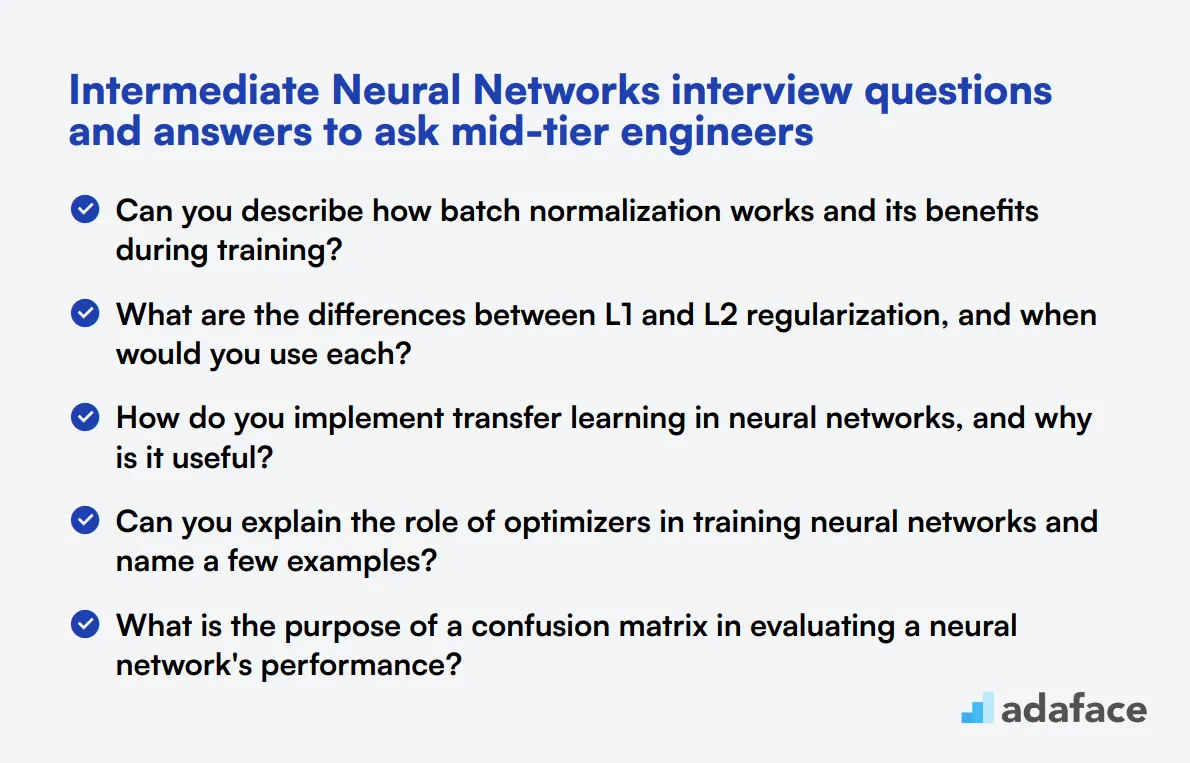

15 intermediate Neural Networks interview questions and answers to ask mid-tier engineers

To assess whether mid-tier engineers possess the necessary skills in neural networks, consider using this list of interview questions. These focused inquiries will help you evaluate their technical expertise and problem-solving abilities effectively. For a comprehensive understanding of role expectations, refer to our machine learning engineer job description.

- Can you describe how batch normalization works and its benefits during training?

- What are the differences between L1 and L2 regularization, and when would you use each?

- How do you implement transfer learning in neural networks, and why is it useful?

- Can you explain the role of optimizers in training neural networks and name a few examples?

- What is the purpose of a confusion matrix in evaluating a neural network's performance?

- How would you handle imbalanced datasets when training a neural network?

- What techniques can you use to visualize the performance of a neural network during training?

- Can you discuss the importance of choosing the right loss function for specific tasks?

- What are the benefits and drawbacks of using pre-trained models?

- How do you interpret the results of a neural network model, and what metrics do you consider?

- Can you explain the concept of attention mechanisms in neural networks?

- What is the role of gradient descent in neural network training, and how does it work?

- How would you design a neural network to handle time-series data?

- Can you discuss the differences between various activation functions and their impact on training?

- What is data augmentation, and how does it benefit neural network training?

7 Neural Networks interview questions and answers related to activation functions

Activation functions are the secret sauce that gives neural networks their power. To help you identify candidates who truly understand these crucial components, we've compiled a list of 7 interview questions focused on activation functions. Use these to spark insightful discussions and assess skills during your next interview for a neural network specialist.

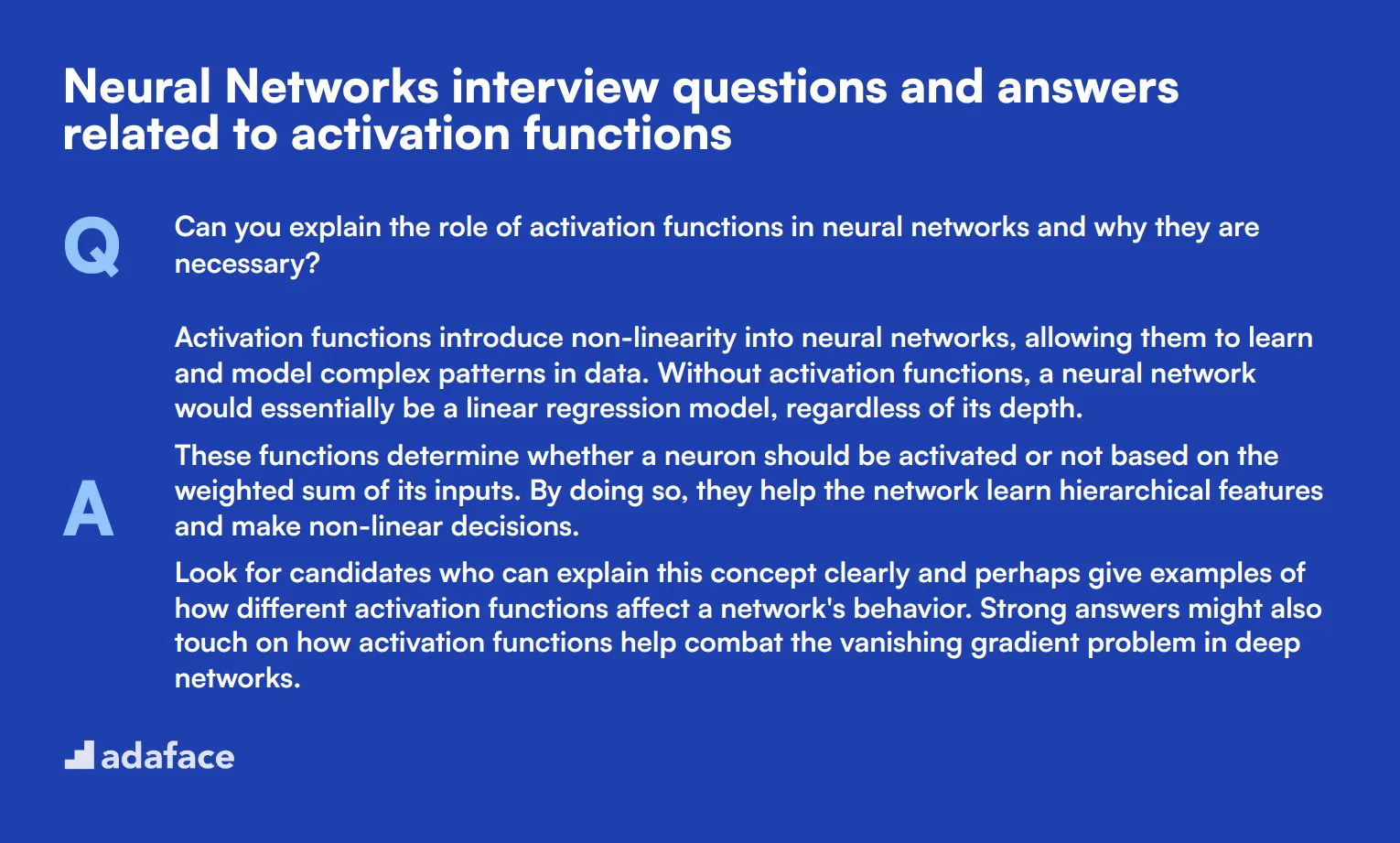

1. Can you explain the role of activation functions in neural networks and why they are necessary?

Activation functions introduce non-linearity into neural networks, allowing them to learn and model complex patterns in data. Without activation functions, a neural network would collapse into a single linear model, regardless of its depth, because a composition of linear layers is itself linear.

These functions determine whether a neuron should be activated or not based on the weighted sum of its inputs. By doing so, they help the network learn hierarchical features and make non-linear decisions.

Look for candidates who can explain this concept clearly and perhaps give examples of how different activation functions affect a network's behavior. Strong answers might also touch on how activation functions help combat the vanishing gradient problem in deep networks.
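The claim that depth adds nothing without a non-linearity can be checked numerically. In the small sketch below, two stacked linear layers give exactly the same output as one layer whose weight matrix is their product, while inserting a ReLU between them breaks that equivalence. The weight values are arbitrary and biases are omitted to keep the example short.

```python
def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def matmul(A, B):
    """Multiply matrix A by matrix B."""
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]


def relu(v):
    return [max(0.0, z) for z in v]


# Arbitrary example weights for two 2x2 layers.
W1 = [[1.0, -2.0], [0.5, 1.0]]
W2 = [[2.0, 1.0], [-1.0, 3.0]]
x = [1.0, 2.0]

stacked_linear = matvec(W2, matvec(W1, x))   # two linear layers in sequence
collapsed = matvec(matmul(W2, W1), x)        # one layer with weights W2 @ W1
with_relu = matvec(W2, relu(matvec(W1, x)))  # non-linearity in between

print(stacked_linear, collapsed, with_relu)
```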

2. How does the choice of activation function impact the training process and performance of a neural network?

The choice of activation function can significantly impact both the training process and the final performance of a neural network. Different activation functions have unique properties that affect how easily and effectively a network learns:

- ReLU (Rectified Linear Unit) is computationally efficient and helps mitigate the vanishing gradient problem, making it popular for deep networks.

- Sigmoid and tanh functions can saturate, potentially slowing down learning in deep networks.

- Leaky ReLU and its variants aim to solve the 'dying ReLU' problem where neurons can become inactive.

- Softmax is often used in the output layer for multi-class classification tasks.

Ideal candidates should discuss how the choice impacts training speed, convergence, and the network's ability to approximate different types of functions. They might also mention that the best activation function often depends on the specific problem and network architecture.

3. Can you compare and contrast the sigmoid and ReLU activation functions?

Sigmoid and ReLU (Rectified Linear Unit) are two commonly used activation functions with distinct characteristics:

Sigmoid:

- Outputs values between 0 and 1

- Useful for binary classification in the output layer

- Can cause vanishing gradient problems in deep networks

- More expensive to compute than ReLU, since it requires evaluating an exponential

ReLU:

- Outputs the input directly if positive, else outputs zero

- Computationally efficient

- Helps mitigate the vanishing gradient problem

- Can suffer from the 'dying ReLU' problem, where neurons become permanently inactive

Look for candidates who can clearly articulate these differences and discuss scenarios where each might be preferable. Strong answers might also touch on variations like Leaky ReLU or mention the impact on backpropagation during training.
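To make the comparison concrete, the sketch below evaluates both functions and their derivatives at a few sample inputs. It is a plain-Python illustration; the simple `sigmoid` shown here is fine for moderate inputs but would overflow for very large negative values, which production implementations guard against.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x):
    s = sigmoid(x)
    return s * (1.0 - s)  # peaks at 0.25 when x == 0, shrinks toward 0 as |x| grows


def relu(x):
    return max(0.0, x)


def relu_grad(x):
    return 1.0 if x > 0 else 0.0  # constant 1 for positive inputs, 0 otherwise


for x in (-5.0, -1.0, 0.0, 1.0, 5.0):
    print(f"x={x:+.1f}  sigmoid={sigmoid(x):.4f}  d_sigmoid={sigmoid_grad(x):.4f}  "
          f"relu={relu(x):.1f}  d_relu={relu_grad(x):.1f}")
```

The printed derivatives show the two failure modes side by side: the sigmoid gradient becomes tiny at both extremes (saturation), while the ReLU gradient is exactly zero for negative inputs (the root of the 'dying ReLU' issue).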

4. What is the vanishing gradient problem and how do certain activation functions help address it?

The vanishing gradient problem occurs when the gradients in the earlier layers of a deep neural network become extremely small during backpropagation. This can lead to slow learning or complete failure to learn in these layers.

Certain activation functions help address this problem:

- ReLU allows gradients to flow through the network more easily for positive inputs.

- Leaky ReLU and related functions such as ELU keep a small non-zero gradient for negative inputs, further mitigating the issue.

- More recent functions like SELU (Scaled Exponential Linear Unit) are designed to self-normalize, helping maintain consistent gradients.

Strong candidates should be able to explain why sigmoid and tanh functions can exacerbate the vanishing gradient problem, and how the shape of activation functions relates to gradient flow. Look for answers that demonstrate an understanding of the mathematical principles behind this phenomenon.
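The mathematical intuition can be shown with a deliberately simplified toy model: a chain of single-unit layers where backpropagation multiplies one activation derivative per layer. The setup below (identical pre-activation at every layer, weight fixed at 1.0) is an assumption made purely for illustration, not a realistic network.

```python
import math


def sigmoid_grad(z):
    s = 1.0 / (1.0 + math.exp(-z))
    return s * (1.0 - s)


def relu_grad(z):
    return 1.0 if z > 0 else 0.0


def gradient_scale(act_grad, pre_activation, depth, weight=1.0):
    """Product of (weight * activation derivative) across `depth` layers
    in a chain of single units: the factor applied to the gradient
    by the time it reaches the first layer."""
    scale = 1.0
    for _ in range(depth):
        scale *= weight * act_grad(pre_activation)
    return scale


# Best case for sigmoid: derivative is at its maximum of 0.25 at z = 0.
sig = gradient_scale(sigmoid_grad, 0.0, depth=10)
# ReLU with a positive pre-activation passes the gradient through unchanged.
rel = gradient_scale(relu_grad, 1.0, depth=10)
print(sig, rel)
```

Even in the sigmoid's best case, ten layers shrink the gradient by a factor of 0.25 to the tenth power, roughly one millionth, while the ReLU chain leaves it at 1.0 for active units.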

5. In what situations might you choose to use a linear activation function?

While non-linear activation functions are crucial for most layers in a neural network, there are specific situations where a linear activation function might be appropriate:

- In the output layer for regression tasks, where you want to predict a continuous value without any bounds.

- In some cases, in the last layer of an autoencoder, to reconstruct the input data.

- In certain types of Generative Adversarial Networks (GANs).

- When you specifically want to limit the network to learning linear transformations of the input.

Look for candidates who can explain that using linear activations throughout the network would essentially reduce it to a linear model, regardless of depth. Strong answers might also discuss how linear activations can be used in combination with non-linear ones in specific network architectures.

6. How does the softmax activation function work, and when would you use it?

The softmax activation function is typically used in the output layer of a neural network for multi-class classification tasks. It takes a vector of arbitrary real-valued scores and transforms them into a probability distribution over predicted output classes.

Key characteristics of softmax:

- Outputs are in the range (0, 1) and sum to 1

- Emphasizes the largest values while suppressing those significantly below the maximum

- Useful when you need mutually exclusive classes

Look for candidates who can explain that softmax is often used with cross-entropy loss for training. They should also be able to contrast it with sigmoid, explaining that sigmoid is used for binary classification or multi-label classification where classes are not mutually exclusive.
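A short sketch of softmax paired with cross-entropy loss follows. Subtracting the maximum score before exponentiating is a common numerical-stability trick that does not change the result; the example scores and the function names are illustrative.

```python
import math


def softmax(scores):
    """Turn arbitrary real-valued scores into a probability distribution."""
    m = max(scores)                       # shift for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, target_index):
    """Negative log-probability assigned to the correct class."""
    return -math.log(probs[target_index])


probs = softmax([2.0, 1.0, 0.1])          # hypothetical logits for three classes
loss = cross_entropy(probs, target_index=0)
print(probs, sum(probs), loss)
```

Because of the shift, the function also handles very large scores such as `softmax([1000.0, 1000.0])` without overflowing.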

7. Can you explain the concept of a parametric activation function and give an example?

A parametric activation function is one that has parameters that can be learned during the training process. This allows the activation function to adapt to the data, potentially improving the network's performance.

An example of a parametric activation function is the Parametric ReLU (PReLU):

f(x) = x if x > 0

f(x) = ax if x ≤ 0

where 'a' is a learnable parameter.

Strong candidates should be able to explain the potential benefits of parametric activation functions, such as increased flexibility and potentially better performance. They might also mention other examples, such as Swish with a trainable β, note that functions like the Scaled Exponential Linear Unit (SELU) use fixed constants rather than learned ones, or discuss how these functions can be implemented in popular deep learning frameworks.
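What makes PReLU "parametric" is that the slope for negative inputs receives its own gradient and is updated like any other weight. The sketch below shows the forward pass, both partial derivatives, and a single hand-rolled update step; the starting slope, input, upstream gradient, and learning rate are all hypothetical values chosen for illustration.

```python
def prelu(x, a):
    """PReLU forward pass: identity for positive inputs, slope `a` otherwise."""
    return x if x > 0 else a * x


def prelu_grads(x, a):
    """Return (df/dx, df/da) for a single input."""
    if x > 0:
        return 1.0, 0.0   # the slope parameter plays no role for positive inputs
    return a, x           # for x <= 0: f = a * x, so df/dx = a and df/da = x


a = 0.25                  # hypothetical initial slope
x, upstream = -2.0, 1.0   # hypothetical input and upstream gradient dL/df
learning_rate = 0.1

_, df_da = prelu_grads(x, a)
a_updated = a - learning_rate * upstream * df_da  # one gradient-descent step on `a`
print(prelu(x, a), a_updated)
```

With a fixed slope this would be a Leaky ReLU; the update on `a` is the only difference.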

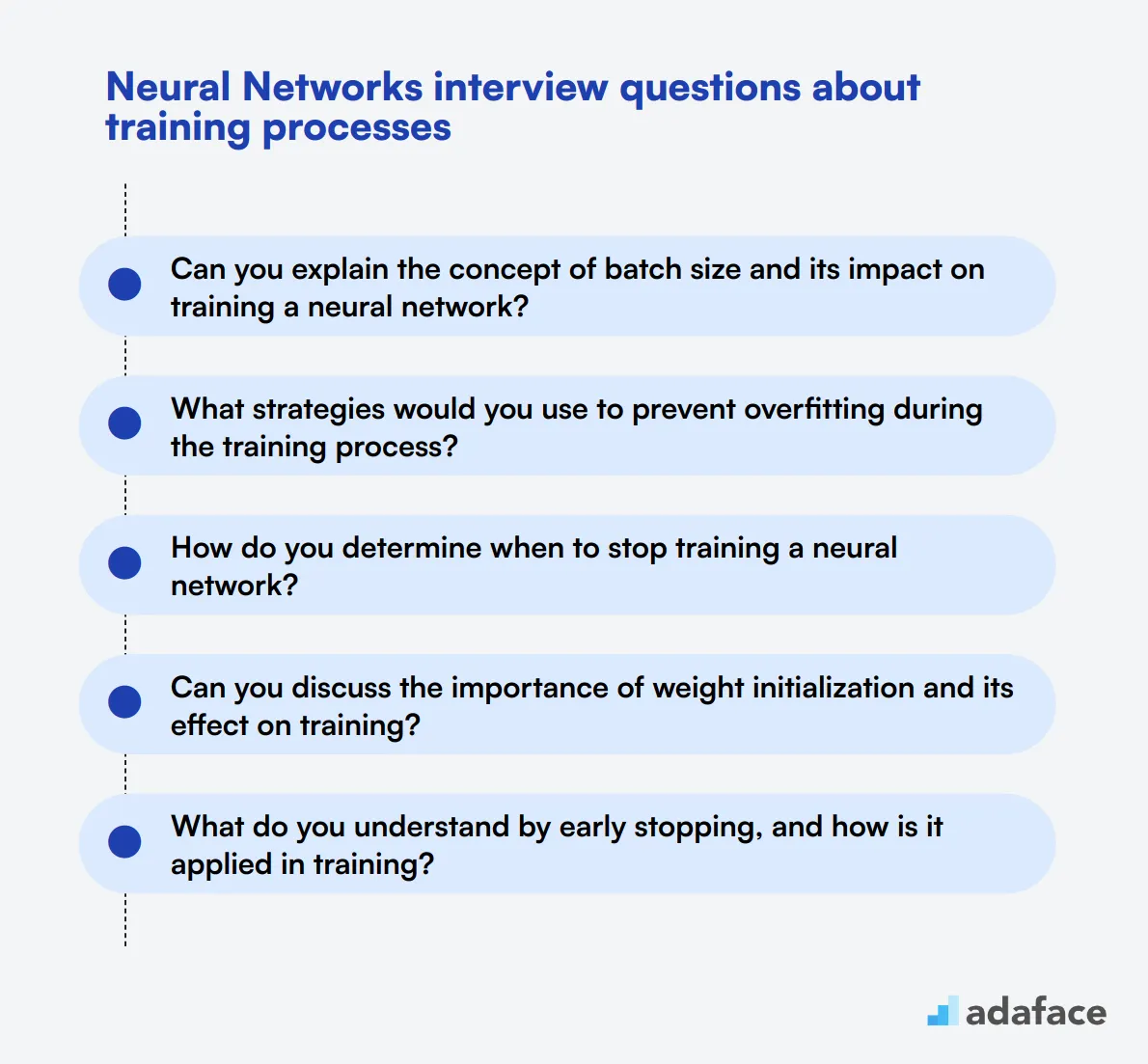

10 Neural Networks interview questions about training processes

To ensure your candidates possess the necessary skills for training neural networks, use this list of focused interview questions. These inquiries will help you gauge their understanding of critical training processes, providing insights into their technical capabilities in roles like machine learning engineer or data scientist.

- Can you explain the concept of batch size and its impact on training a neural network?

- What strategies would you use to prevent overfitting during the training process?

- How do you determine when to stop training a neural network?

- Can you discuss the importance of weight initialization and its effect on training?

- What do you understand by early stopping, and how is it applied in training?

- How can you assess whether a neural network is underfitting or overfitting to the training data?

- What is the significance of validation data during the training process?

- How do you handle the challenge of training a neural network with noisy data?

- Can you explain how to implement k-fold cross-validation in the context of training a neural network?

- What techniques do you use to tune hyperparameters for optimal neural network performance?

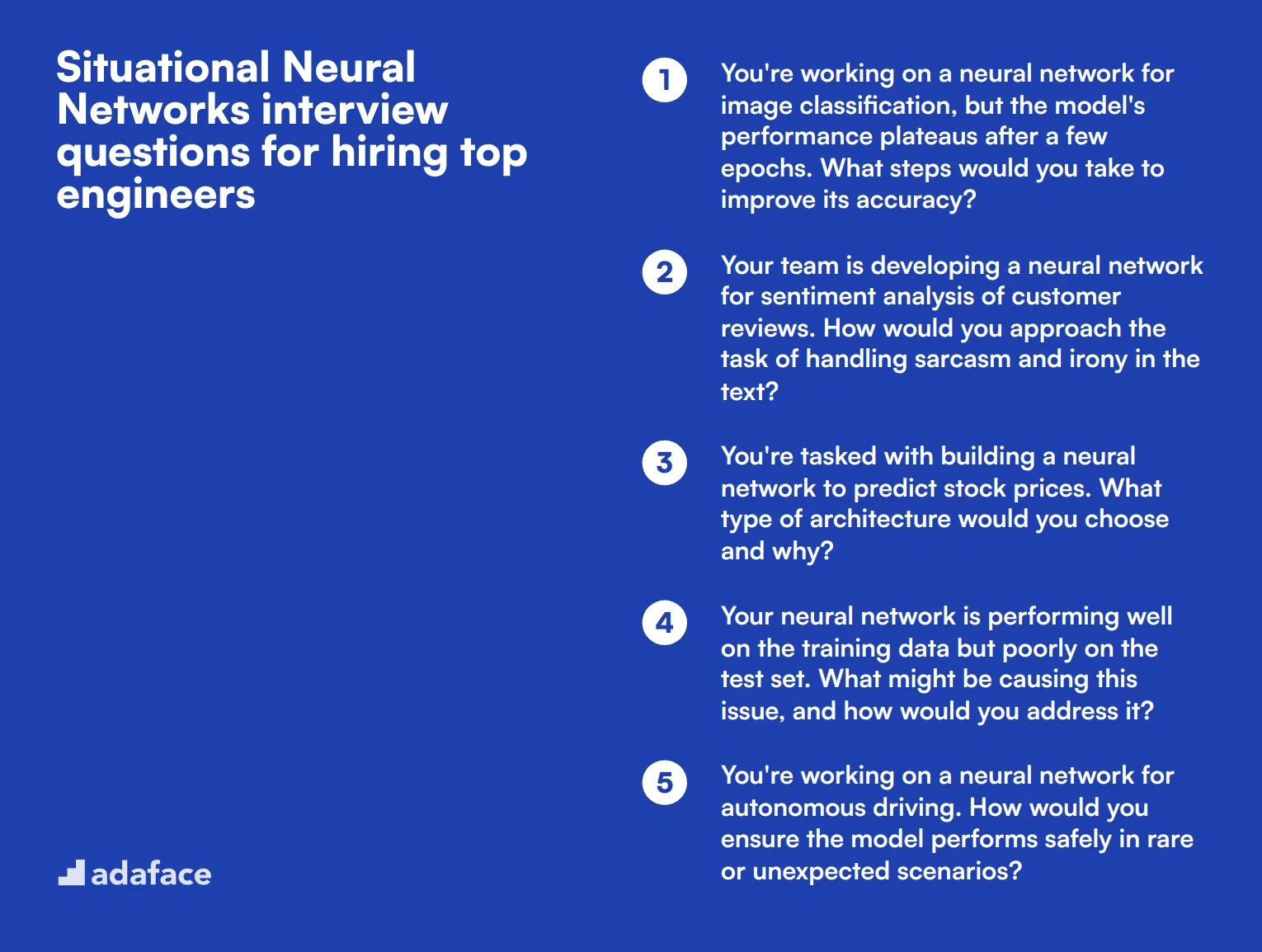

10 situational Neural Networks interview questions for hiring top engineers

To assess a candidate's practical understanding and problem-solving abilities in neural networks, consider using these situational interview questions. These scenarios will help you evaluate how machine learning engineers apply their knowledge to real-world challenges, revealing their critical thinking and decision-making skills.

- You're working on a neural network for image classification, but the model's performance plateaus after a few epochs. What steps would you take to improve its accuracy?

- Your team is developing a neural network for sentiment analysis of customer reviews. How would you approach the task of handling sarcasm and irony in the text?

- You're tasked with building a neural network to predict stock prices. What type of architecture would you choose and why?

- Your neural network is performing well on the training data but poorly on the test set. What might be causing this issue, and how would you address it?

- You're working on a neural network for autonomous driving. How would you ensure the model performs safely in rare or unexpected scenarios?

- Your team needs to deploy a large neural network model on mobile devices with limited resources. What strategies would you use to optimize the model for mobile deployment?

- You're developing a neural network for real-time object detection in video streams. How would you balance accuracy and speed in your model design?

- Your neural network is struggling with class imbalance in a medical diagnosis task. How would you modify your approach to improve performance across all classes?

- You're working on a neural network for language translation. How would you handle the challenge of translating rare words or phrases not seen in the training data?

- Your team is developing a neural network for anomaly detection in IoT sensor data. How would you design the model to handle varying patterns across different types of sensors and environments?

Which Neural Networks skills should you evaluate during the interview phase?

While a single interview might not fully capture the breadth of a candidate's abilities, focusing on key skills can yield a more accurate measure of their capabilities in neural networks. Identifying these core skills can help ensure a candidate's fit and potential for growth within the role.

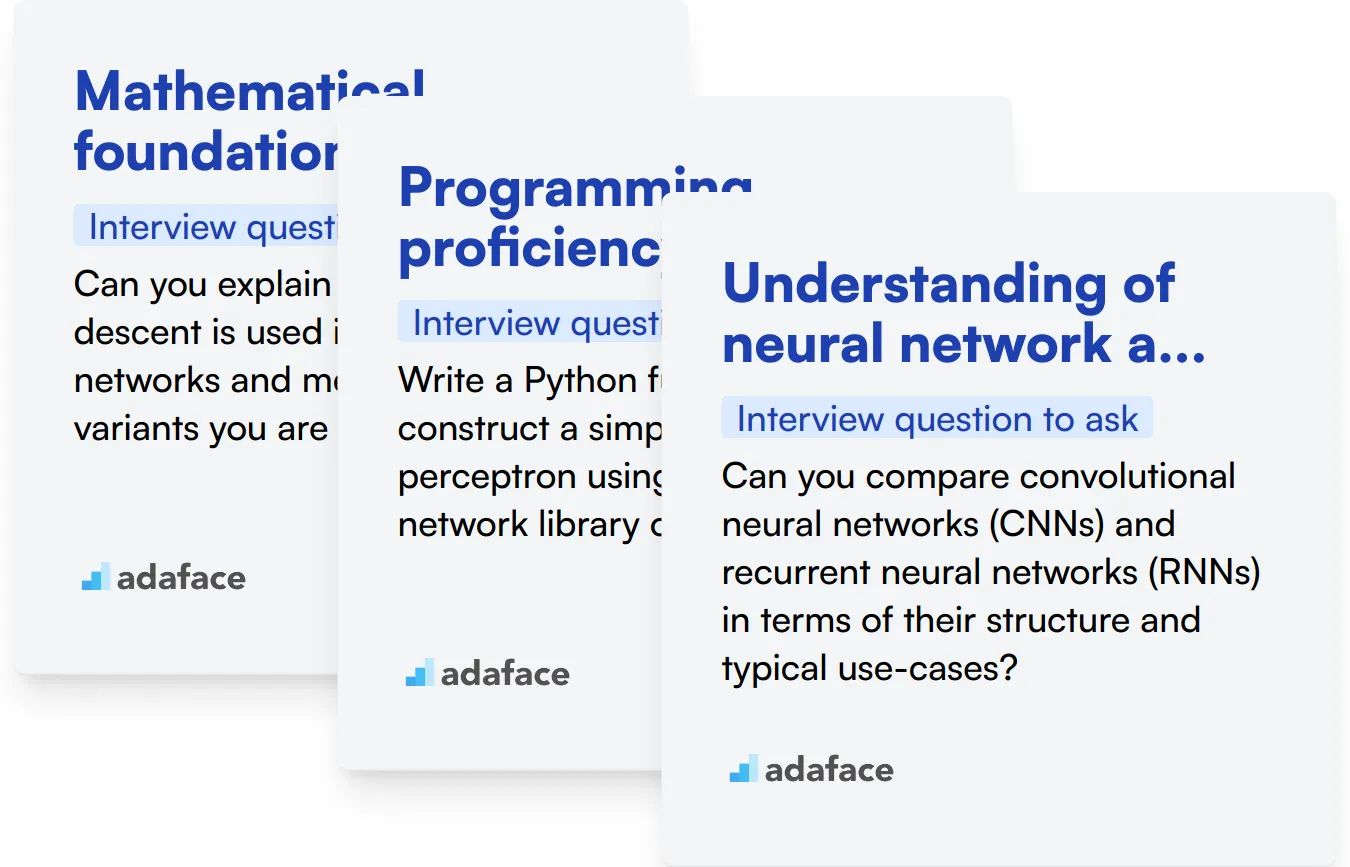

Mathematical foundations

A strong grasp of mathematical concepts like calculus, linear algebra, and probability is fundamental in neural networks for algorithm development and data modeling. These skills allow engineers to understand and implement complex neural network architectures effectively.

You can gauge a candidate's proficiency in this area through targeted multiple-choice questions. Consider using the Machine Learning assessment from our library, which includes questions on these crucial mathematical principles.

Additionally, posing specific interview questions can help assess this skill in a practical context.

Can you explain how gradient descent is used in training neural networks and mention any variants you are familiar with?

Listen for a clear explanation of the gradient descent process, understanding of its role in optimizing neural network weights, and knowledge of at least one variant like stochastic gradient descent.
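For reference, the sketch below fits a one-variable linear model with mean squared error using both full-batch gradient descent and a stochastic (mini-batch) variant. The function names, learning rate, epoch count, and toy data are assumptions for this example; a neural network applies the same update rule to many more parameters, with gradients supplied by backpropagation.

```python
import random


def mse_gradients(w, b, data):
    """Gradients of mean squared error for the model y_hat = w * x + b."""
    n = len(data)
    dw = sum(2 * (w * x + b - y) * x for x, y in data) / n
    db = sum(2 * (w * x + b - y) for x, y in data) / n
    return dw, db


def gradient_descent(data, lr=0.05, epochs=500, batch_size=None, seed=0):
    """batch_size=None uses the full dataset per step (batch gradient descent);
    batch_size=1 gives stochastic gradient descent; anything between is mini-batch."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    for _ in range(epochs):
        if batch_size is None:
            batches = [data]
        else:
            shuffled = data[:]
            rng.shuffle(shuffled)
            batches = [shuffled[i:i + batch_size]
                       for i in range(0, len(shuffled), batch_size)]
        for batch in batches:
            dw, db = mse_gradients(w, b, batch)
            w -= lr * dw   # step against the gradient
            b -= lr * db
    return w, b


# Noise-free toy data generated from y = 3x + 1.
data = [(x, 3.0 * x + 1.0) for x in (0.0, 1.0, 2.0, 3.0, 4.0)]
w_full, b_full = gradient_descent(data)
w_sgd, b_sgd = gradient_descent(data, batch_size=1)
print(w_full, b_full, w_sgd, b_sgd)
```

Both runs should recover a slope close to 3 and an intercept close to 1 on this toy problem; with noisy data the stochastic variant would fluctuate around the optimum rather than settle exactly.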

Programming proficiency

Proficiency in programming languages such as Python, especially with libraries like TensorFlow or PyTorch, is crucial for implementing and manipulating neural network models.

Screening for programming skills can be effectively conducted using assessments tailored to neural networks. Our Python assessment includes relevant libraries and frameworks.

To further explore their coding capabilities, a practical coding question can be very revealing.

Write a Python function to construct a simple multi-layer perceptron using a neural network library of your choice.

Assess not only the correctness of the code but also the candidate's familiarity with neural network libraries and their ability to structure logical, efficient code.
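Candidates will usually reach for PyTorch or Keras here. As a library-free point of comparison, the sketch below shows the structure such an answer builds: a list of fully connected layers with a non-linearity between them. The names `build_mlp` and `forward`, the Glorot-style uniform initialization, and the choice of ReLU for hidden layers are assumptions made for this illustration.

```python
import math
import random


def build_mlp(layer_sizes, seed=0):
    """Create weights and biases for a fully connected network.

    layer_sizes such as [4, 8, 3] means 4 inputs, one hidden layer
    of 8 units, and 3 outputs.
    """
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        limit = math.sqrt(6.0 / (n_in + n_out))  # Glorot/Xavier uniform range
        weights = [[rng.uniform(-limit, limit) for _ in range(n_in)]
                   for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers


def forward(layers, x):
    """Run a forward pass: ReLU on hidden layers, raw scores at the output."""
    for i, (weights, biases) in enumerate(layers):
        z = [sum(w * xi for w, xi in zip(row, x)) + b
             for row, b in zip(weights, biases)]
        is_output = i == len(layers) - 1
        x = z if is_output else [max(0.0, v) for v in z]
    return x


mlp = build_mlp([4, 8, 3])
outputs = forward(mlp, [0.5, -1.0, 2.0, 0.0])
print(len(mlp), outputs)
```

A framework-based answer should map onto the same pieces: layer sizes, weight initialization, an activation between layers, and an output layer whose activation depends on the task.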

Understanding of neural network architectures

Knowledge of various neural network types, such as CNNs, RNNs, and GANs, and when to apply them, is crucial for designing effective machine learning models tailored to different problems.

This knowledge can be tested via a structured assessment that includes these topics. Our Neural Networks assessment is designed to challenge candidates on these architectures.

Deepen your understanding of their expertise by asking them to compare and contrast different architectures.

Can you compare convolutional neural networks (CNNs) and recurrent neural networks (RNNs) in terms of their structure and typical use-cases?

Look for a detailed comparison that includes the unique characteristics of each network type and appropriate application scenarios.

Use Neural Networks Interview Questions and Skills Tests to Hire Skilled Engineers

If you're in the process of hiring an engineer with expertise in neural networks, it's important to verify their skills accurately.

The most reliable way to assess these skills is through the use of targeted skill tests. Consider utilizing tests from Adaface such as Neural Networks Test, Deep Learning Online Test, or Machine Learning Online Test to gauge the proficiency of your candidates.

After administering these tests, you can effectively shortlist the top candidates. This makes the subsequent interview process more focused and productive, allowing you to dig deeper into their practical and theoretical knowledge.

Ready to find your next great hire? Sign up and start using Adaface to streamline your hiring process. Explore our variety of tests tailored for different roles and skills on our Test Library or begin by setting up an account on our Signup Page.

Neural Networks Interview Questions FAQs

This post covers a range of Neural Networks questions, from basic to advanced, including topics on activation functions, training processes, and situational scenarios.

These questions can help assess candidates' knowledge and practical skills in Neural Networks, allowing recruiters and hiring managers to evaluate applicants at various experience levels.

Yes, the post includes answers to the interview questions, helping interviewers understand what to look for in candidates' responses.

The questions are categorized by difficulty level (basic, junior, intermediate) and specific topics like activation functions and training processes.

While focused on Neural Networks, these questions can be adapted for various AI and machine learning engineering roles, depending on the specific job requirements.
