Evaluating candidates for deep learning roles can be challenging without the right set of questions. For hiring managers and recruiters, it's essential to identify top talent efficiently and ensure they have the necessary skills before onboarding them.

In this blog post, we provide a comprehensive list of deep learning interview questions tailored for various experience levels and specific technical areas. You'll find questions to initiate interviews, evaluate junior to senior engineers, and delve into topics like model architectures and optimization techniques.

Using these questions, you'll be able to gauge the depth of a candidate's knowledge and their problem-solving abilities. Additionally, you can complement these interviews with a deep learning online test from Adaface to further ensure you're making well-informed hiring decisions.

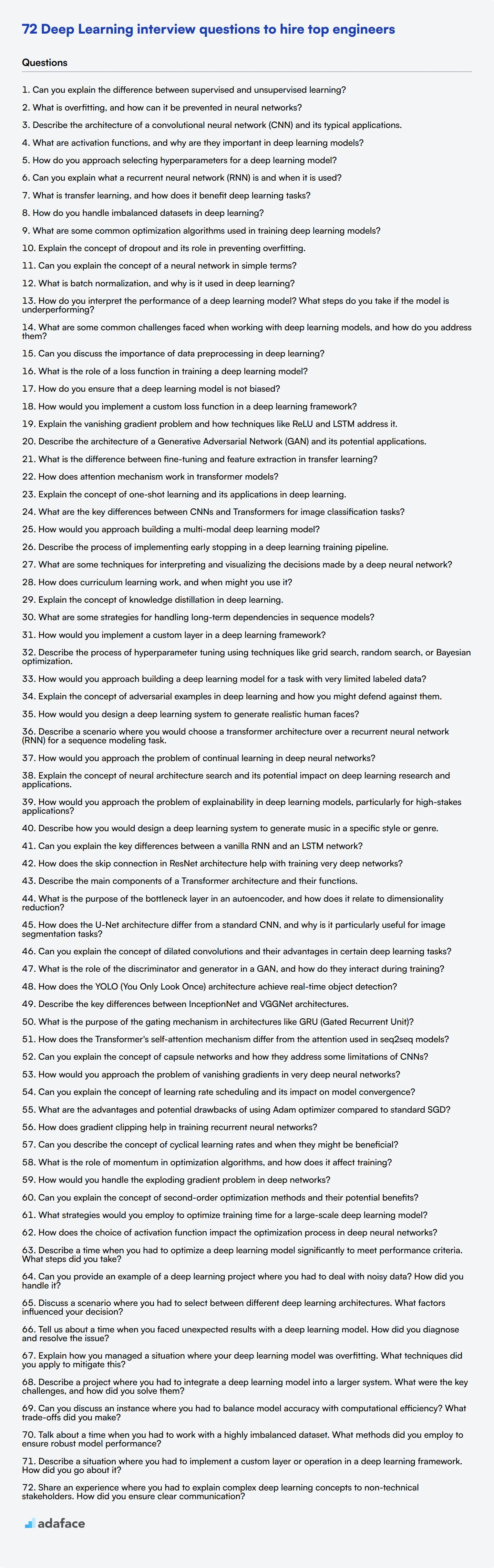

10 Deep Learning interview questions to initiate the interview

To assess whether candidates possess the essential knowledge and skills in deep learning, consider using this list of targeted interview questions. These questions can help you gauge their understanding of fundamental concepts and practical applications, ensuring you find the right fit for your team in the field of machine learning.

- Can you explain the difference between supervised and unsupervised learning?

- What is overfitting, and how can it be prevented in neural networks?

- Describe the architecture of a convolutional neural network (CNN) and its typical applications.

- What are activation functions, and why are they important in deep learning models?

- How do you approach selecting hyperparameters for a deep learning model?

- Can you explain what a recurrent neural network (RNN) is and when it is used?

- What is transfer learning, and how does it benefit deep learning tasks?

- How do you handle imbalanced datasets in deep learning?

- What are some common optimization algorithms used in training deep learning models?

- Explain the concept of dropout and its role in preventing overfitting.

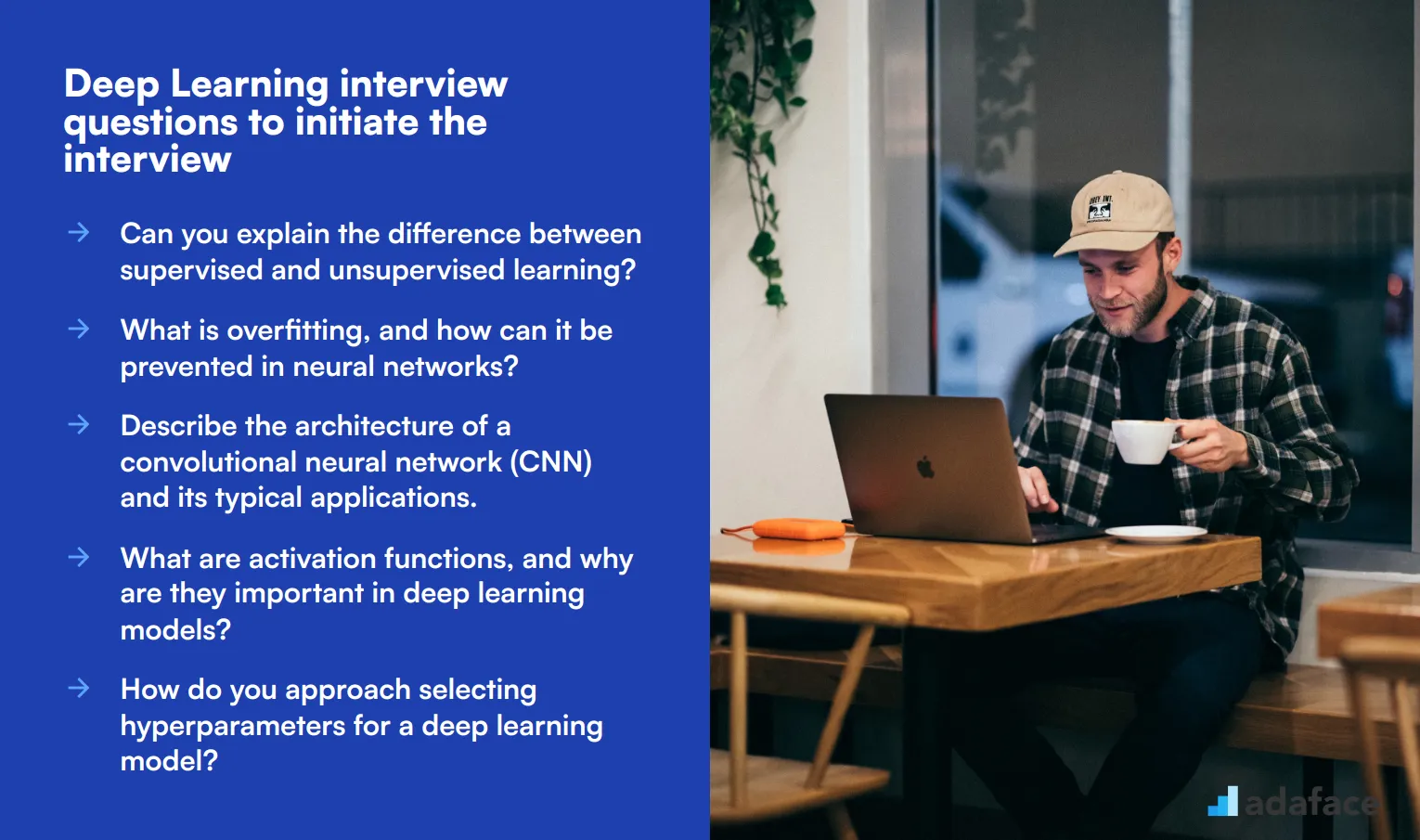

7 Deep Learning interview questions and answers to evaluate junior engineers

To determine whether your applicants have a solid foundational understanding of deep learning, ask them some of these 7 interview questions. These questions are designed to gauge their practical knowledge and problem-solving abilities, ensuring they can handle the tasks ahead.

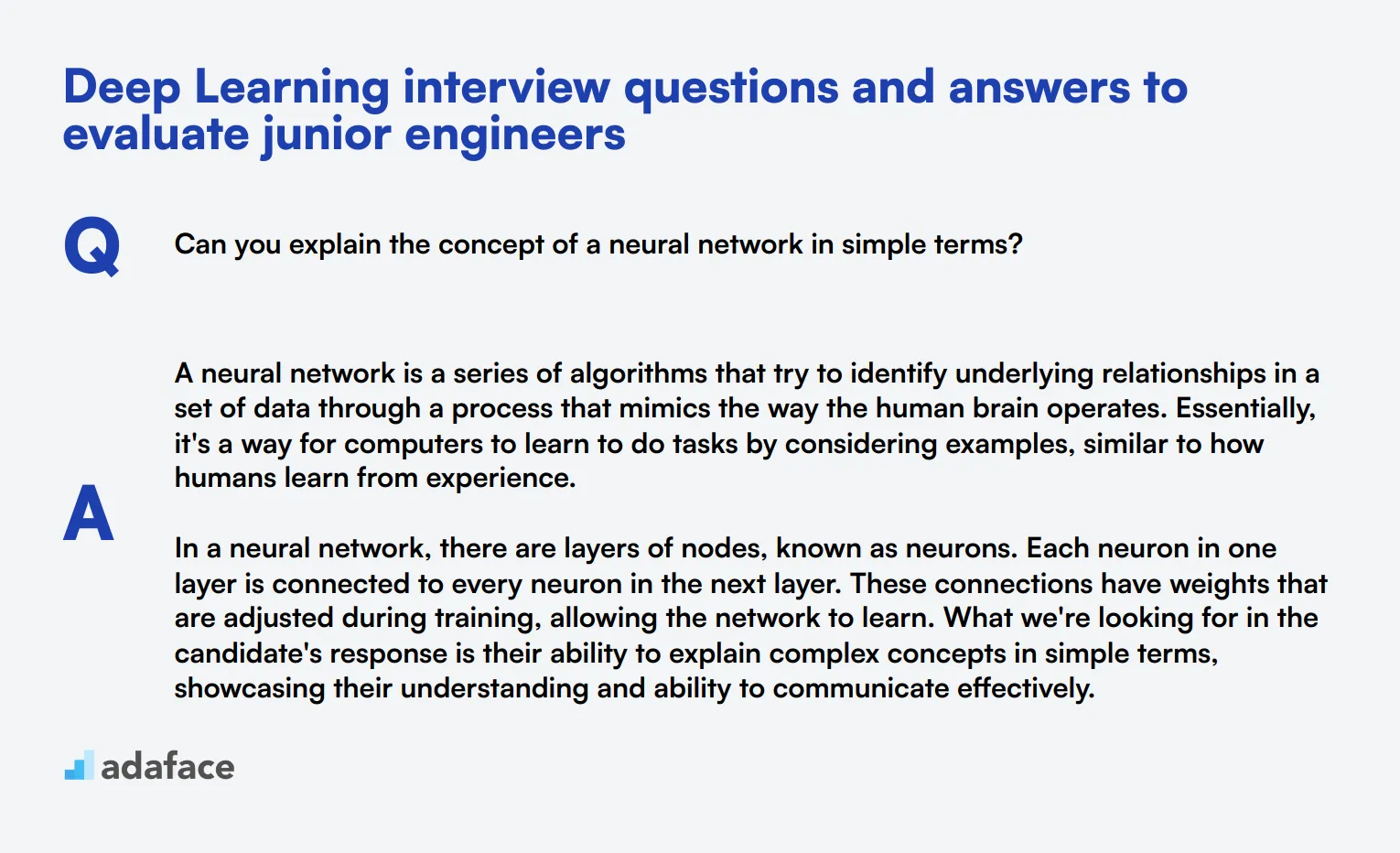

1. Can you explain the concept of a neural network in simple terms?

A neural network is a series of algorithms that try to identify underlying relationships in a set of data through a process that mimics the way the human brain operates. Essentially, it's a way for computers to learn to do tasks by considering examples, similar to how humans learn from experience.

In a neural network, there are layers of nodes, known as neurons. In a fully connected network, each neuron in one layer is connected to every neuron in the next layer. These connections have weights that are adjusted during training, allowing the network to learn. What we're looking for in the candidate's response is their ability to explain complex concepts in simple terms, showcasing their understanding and ability to communicate effectively.
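To make the layers-and-weights picture concrete, here is a minimal sketch of a forward pass through a tiny fully connected network in plain Python. The layer sizes, weight values, and function names are made up for illustration; a real network would learn its weights during training and would normally be built with a deep learning framework.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def dense(inputs, weights, biases):
    """One fully connected layer: every input feeds every output neuron."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


# Hypothetical weights for a network with 2 inputs, 2 hidden neurons, 1 output.
hidden_weights = [[0.5, -0.5], [0.25, 0.75]]
hidden_biases = [0.0, 0.0]
output_weights = [[1.0, -1.0]]
output_biases = [0.0]


def forward(inputs):
    """Pass the inputs through the hidden layer, then the output layer."""
    hidden = dense(inputs, hidden_weights, hidden_biases)
    return dense(hidden, output_weights, output_biases)[0]


prediction = forward([1.0, 2.0])
```

Training would consist of nudging the numbers in `hidden_weights` and `output_weights` until `forward` produces the desired outputs for the example inputs.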

2. What is batch normalization, and why is it used in deep learning?

Batch normalization is a technique to improve the training of deep neural networks. It normalizes the inputs of each layer over the current mini-batch so that they have a mean of zero and a standard deviation of one, then applies a learnable scale and shift. This helps stabilize the learning process and often reduces the number of training epochs required to train deep networks.

The commonly cited benefits of batch normalization include reducing internal covariate shift (the original motivation, although later work has questioned this explanation), improving gradient flow, and enabling the use of higher learning rates. A strong candidate should demonstrate an understanding of these benefits and how they contribute to more efficient training processes.
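As a rough illustration of the normalization step, the sketch below applies batch normalization to a single feature across one mini-batch. The function name and the sample values are assumptions for this example; `gamma` and `beta` stand in for the scale and shift that a framework would learn, and the sketch covers training mode only (at inference time, frameworks use running averages of the statistics instead).

```python
import math


def batch_norm(batch, gamma=1.0, beta=0.0, eps=1e-5):
    """Normalize one feature across a mini-batch, then scale and shift.

    gamma and beta are learnable in a real layer; here they are fixed.
    eps avoids division by zero when the batch variance is tiny.
    """
    mean = sum(batch) / len(batch)
    var = sum((x - mean) ** 2 for x in batch) / len(batch)
    return [gamma * (x - mean) / math.sqrt(var + eps) + beta for x in batch]


# Hypothetical activations of one neuron for a mini-batch of four examples.
activations = [2.0, 4.0, 6.0, 8.0]
normalized = batch_norm(activations)
```

After the call, `normalized` has a mean of approximately zero and a variance of approximately one, whatever the scale of the original activations.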

3. How do you interpret the performance of a deep learning model? What steps do you take if the model is underperforming?

Interpreting the performance of a deep learning model depends on the task. For classification, it typically involves evaluating metrics like accuracy, precision, recall, and F1-score on held-out data. Visual tools like confusion matrices and ROC curves can also provide insights into performance.

If a model is underperforming, steps to address this could include: revisiting the data preprocessing steps, tweaking the model architecture, trying different hyperparameters, or using techniques like data augmentation. Candidates should show an understanding of iterative improvement and troubleshooting strategies in their response.
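The classification metrics mentioned above can be computed directly from the confusion-matrix counts. The following is a minimal sketch for binary labels; the function name and the toy labels are invented for illustration, and in practice a library such as scikit-learn would usually be used instead.

```python
def classification_metrics(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1 for binary labels (0 or 1)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Toy imbalanced example: 3 positives out of 10, and the model finds only one.
y_true = [1, 0, 0, 0, 0, 0, 0, 0, 1, 1]
y_pred = [1, 0, 0, 0, 0, 0, 0, 0, 0, 0]
metrics = classification_metrics(y_true, y_pred)
```

On this toy data, accuracy is 0.8 while recall is only one third, which is the kind of gap a candidate should be able to spot and explain.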

4. What are some common challenges faced when working with deep learning models, and how do you address them?

Common challenges in deep learning include overfitting, vanishing/exploding gradients, and computational resource constraints. Overfitting can be tackled with techniques like dropout, data augmentation, or increasing the training data. The vanishing/exploding gradient problem can be mitigated by using appropriate activation functions, careful weight initialization, normalization techniques like batch normalization, and gradient clipping.

Addressing computational constraints often involves using optimized libraries, parallel processing, or cloud-based solutions. Look for candidates who can identify these challenges and propose practical solutions, demonstrating their problem-solving abilities.
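Dropout, one of the overfitting remedies named in this answer, is simple enough to sketch in a few lines. The version below is the common "inverted dropout" variant, written in plain Python under the assumption that activations are a flat list; the function name and parameters are illustrative rather than taken from any particular framework.

```python
import random


def dropout(activations, rate, training=True, rng=random):
    """Inverted dropout: zero each activation with probability `rate`.

    Surviving activations are divided by the keep probability so that the
    expected value is unchanged and nothing needs rescaling at inference.
    """
    if not 0.0 <= rate < 1.0:
        raise ValueError("rate must be in [0, 1)")
    if not training or rate == 0.0:
        return list(activations)
    keep = 1.0 - rate
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


random.seed(0)  # fixed seed so the sketch is repeatable
dropped = dropout([1.0] * 1000, rate=0.5)
```

With `training=False` the function returns the activations untouched, which mirrors how dropout is switched off when the model is used for prediction.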

5. Can you discuss the importance of data preprocessing in deep learning?

Data preprocessing is crucial in deep learning as it ensures the quality and relevance of the data fed into the model. It involves steps like data cleaning, normalization, and augmentation, which help improve the model's performance and generalization.

Effective data preprocessing can significantly reduce training times and improve model accuracy. Candidates should highlight their experience with different preprocessing techniques and their impact on model performance.

6. What is the role of a loss function in training a deep learning model?

The loss function measures the difference between the predicted output and the actual output. It provides a way for the model to understand how well it is performing and guides the optimization process in reducing this difference.

The choice of loss function depends on the type of problem being solved (e.g., classification, regression). Candidates should be able to discuss different types of loss functions and their appropriate use cases, indicating their practical knowledge of model training.
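As a small illustration of matching the loss to the problem type, the sketch below implements mean squared error for regression and binary cross-entropy for classification. The function names and sample values are chosen for this example; frameworks ship their own, numerically hardened versions.

```python
import math


def mean_squared_error(y_true, y_pred):
    """Regression loss: average squared gap between target and prediction."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def binary_cross_entropy(y_true, y_prob, eps=1e-12):
    """Classification loss for labels in {0, 1} and predicted probabilities."""
    total = 0.0
    for t, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total += -(t * math.log(p) + (1 - t) * math.log(1.0 - p))
    return total / len(y_true)


regression_loss = mean_squared_error([1.0, 2.0], [1.0, 4.0])
confident_right = binary_cross_entropy([1, 0], [0.9, 0.1])
confident_wrong = binary_cross_entropy([1, 0], [0.1, 0.9])
```

Cross-entropy penalizes confident wrong predictions much more heavily than confident correct ones, so `confident_wrong` comes out far larger than `confident_right`.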

7. How do you ensure that a deep learning model is not biased?

Ensuring a deep learning model is not biased involves several strategies, including balanced datasets, fairness-aware learning algorithms, and continuous monitoring. It is crucial to train the model on representative data that captures the diversity of the real-world population.

Additionally, techniques like cross-validation, bias detection metrics, and regular audits can help identify and mitigate biases. Look for candidates who emphasize the importance of ethical considerations and have a thorough understanding of techniques to address bias in models.
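One simple example of a bias detection metric is the gap in positive-prediction rates between groups, often called the demographic parity difference. The sketch below is only one of several possible fairness checks; the function names, group labels, and predictions are hypothetical.

```python
def positive_rate_by_group(predictions, groups):
    """Share of positive (1) predictions within each group."""
    totals, positives = {}, {}
    for pred, group in zip(predictions, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + (1 if pred == 1 else 0)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(predictions, groups):
    """Largest difference in positive-prediction rate between any two groups."""
    rates = positive_rate_by_group(predictions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical model outputs for two groups of four people each.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap = demographic_parity_gap(predictions, groups)
```

A large gap does not prove the model is unfair on its own, but it is a signal worth investigating during the regular audits described above.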

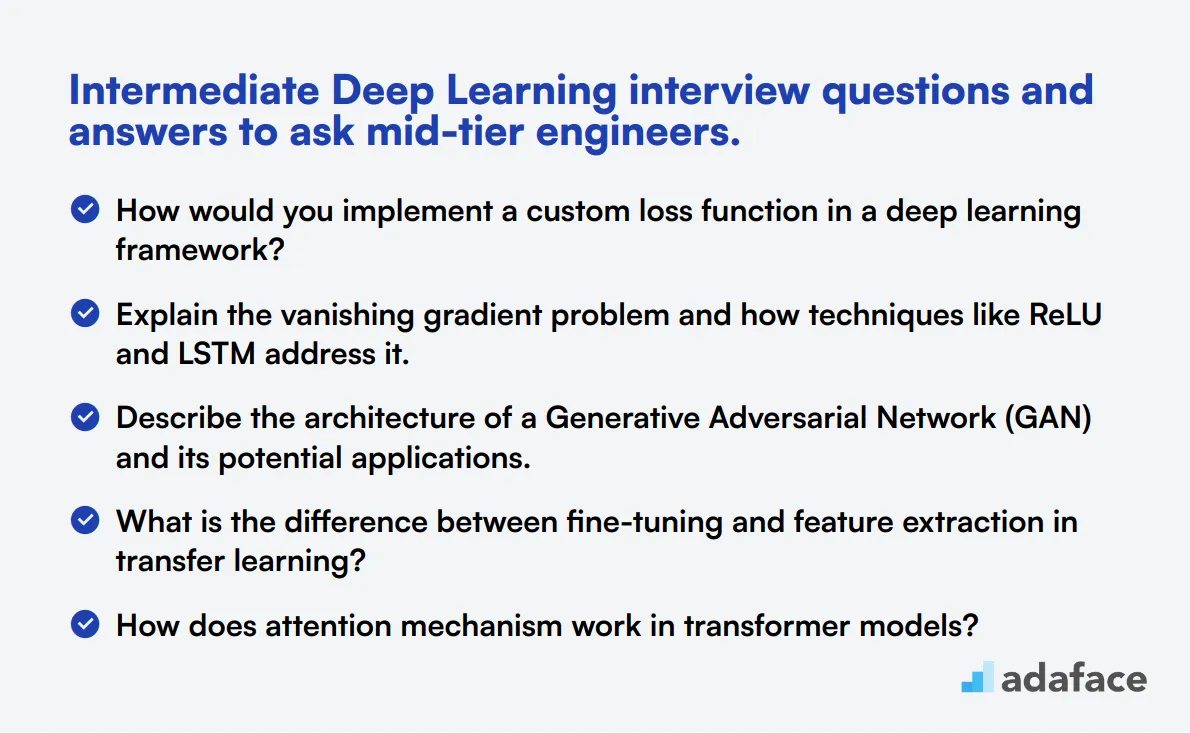

15 intermediate Deep Learning interview questions and answers to ask mid-tier engineers

To assess the deep learning proficiency of mid-tier engineers, use these 15 intermediate interview questions. These questions are designed to evaluate candidates' understanding of advanced concepts and their ability to apply them in real-world scenarios.

- How would you implement a custom loss function in a deep learning framework?

- Explain the vanishing gradient problem and how techniques like ReLU and LSTM address it.

- Describe the architecture of a Generative Adversarial Network (GAN) and its potential applications.

- What is the difference between fine-tuning and feature extraction in transfer learning?

- How does the attention mechanism work in transformer models?

- Explain the concept of one-shot learning and its applications in deep learning.

- What are the key differences between CNNs and Transformers for image classification tasks?

- How would you approach building a multi-modal deep learning model?

- Describe the process of implementing early stopping in a deep learning training pipeline.

- What are some techniques for interpreting and visualizing the decisions made by a deep neural network?

- How does curriculum learning work, and when might you use it?

- Explain the concept of knowledge distillation in deep learning.

- What are some strategies for handling long-term dependencies in sequence models?

- How would you implement a custom layer in a deep learning framework?

- Describe the process of hyperparameter tuning using techniques like grid search, random search, or Bayesian optimization.

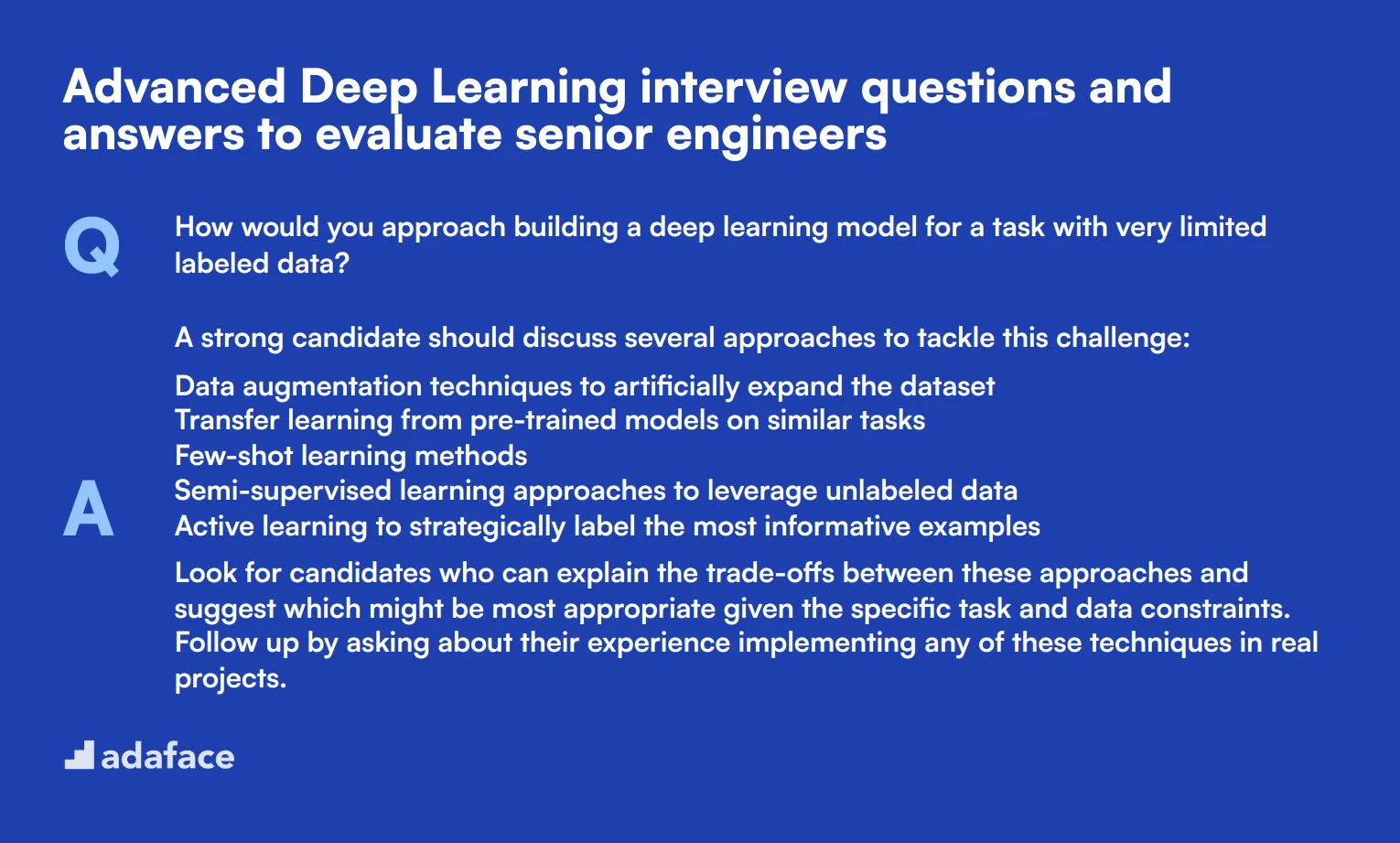

8 advanced Deep Learning interview questions and answers to evaluate senior engineers

Ready to challenge your senior deep learning candidates? These 8 advanced questions will help you evaluate their expertise and problem-solving skills. Use them to dig deeper into a candidate's understanding of complex concepts and their ability to apply theoretical knowledge to real-world scenarios. Remember, the goal is to spark meaningful discussions, not just test memorization.

1. How would you approach building a deep learning model for a task with very limited labeled data?

A strong candidate should discuss several approaches to tackle this challenge:

- Data augmentation techniques to artificially expand the dataset

- Transfer learning from pre-trained models on similar tasks

- Few-shot learning methods

- Semi-supervised learning approaches to leverage unlabeled data

- Active learning to strategically label the most informative examples

Look for candidates who can explain the trade-offs between these approaches and suggest which might be most appropriate given the specific task and data constraints. Follow up by asking about their experience implementing any of these techniques in real projects.

2. Explain the concept of adversarial examples in deep learning and how you might defend against them.

Adversarial examples are inputs to machine learning models that have been intentionally designed to cause the model to make a mistake. They're often created by adding small, carefully crafted perturbations to valid inputs.

Candidates should discuss defense strategies such as:

- Adversarial training: Including adversarial examples in the training data

- Gradient masking: Hiding or obfuscating the model's gradients to make it harder to generate adversarial examples, although adaptive attacks can often circumvent this defense

- Input preprocessing: Applying transformations to inputs to remove adversarial perturbations

- Ensemble methods: Combining predictions from multiple models to increase robustness

A strong answer will also touch on the challenges of defending against adversarial attacks, such as the trade-off between robustness and accuracy. Look for candidates who can discuss the implications of adversarial examples for deep learning model deployment in critical applications.
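To show how small such a perturbation can be, here is a minimal sketch of the fast gradient sign method (FGSM), one well-known way of crafting adversarial examples, applied to a toy logistic regression model. The weights, input, and `epsilon` are invented for illustration; attacking a real deep network would use a framework's automatic differentiation instead of the hand-written gradient.

```python
import math


def predict(weights, bias, x):
    """Probability of class 1 from a logistic regression model."""
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1.0 / (1.0 + math.exp(-z))


def sign(value):
    return (value > 0) - (value < 0)


def fgsm(weights, bias, x, y, epsilon):
    """Move each input feature by epsilon in the direction that raises the loss.

    For binary cross-entropy with a sigmoid output, the gradient of the loss
    with respect to the input is (p - y) * w.
    """
    p = predict(weights, bias, x)
    grad = [(p - y) * w for w in weights]
    return [xi + epsilon * sign(g) for xi, g in zip(x, grad)]


# Hypothetical model and an input that is correctly classified as class 1.
weights, bias = [2.0, -1.0], 0.0
x, y = [0.3, 0.2], 1
x_adv = fgsm(weights, bias, x, y, epsilon=0.2)

clean_prob = predict(weights, bias, x)
adversarial_prob = predict(weights, bias, x_adv)
```

In this toy setup, no feature changes by more than 0.2, yet the predicted probability drops from above 0.5 to below it, so the predicted class flips.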

3. How would you design a deep learning system to generate realistic human faces?

An ideal response should outline the use of Generative Adversarial Networks (GANs) or other advanced generative models. Key points to cover include:

- Architecture: Describing a generator network to create images and a discriminator network to distinguish real from fake

- Training process: Explaining the adversarial training dynamics

- Challenges: Discussing mode collapse, training instability, and evaluation metrics

- Improvements: Mentioning progressive growing, style-based generation, or other recent advancements

Look for candidates who can explain the intuition behind GANs and discuss potential ethical considerations of generating realistic human faces. Strong answers might also touch on alternative approaches like Variational Autoencoders (VAEs) or diffusion models.

4. Describe a scenario where you would choose a transformer architecture over a recurrent neural network (RNN) for a sequence modeling task.

A strong answer should highlight the advantages of transformers over RNNs for certain tasks:

- Parallel processing: Transformers can process all positions of a sequence in parallel during training, making them faster to train on long sequences

- Long-range dependencies: The self-attention mechanism allows transformers to capture long-range dependencies more effectively

- More direct gradient paths: Self-attention connects any two positions in a single step, so transformers are far less prone to the vanishing gradients that affect RNNs over long sequences

Candidates should provide examples of tasks where transformers excel, such as machine translation, text summarization, or natural language understanding. Look for answers that also acknowledge scenarios where RNNs might still be preferred, such as tasks with strict online processing requirements or very short sequences.
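The self-attention mechanism behind these advantages can be sketched compactly. The code below implements scaled dot-product attention for a single head in plain Python, assuming queries, keys, and values are given as lists of vectors; the function names and the toy inputs are illustrative, and the learned projection matrices of a real transformer are omitted.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to one."""
    highest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - highest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Every query scores every key, so any position can attend to any other.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs


# Toy sequence of three positions with two-dimensional vectors.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended = attention(tokens, tokens, tokens)
```

Each loop iteration over `queries` is independent of the others, which is why the computation parallelizes across positions, unlike the step-by-step recurrence of an RNN.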

5. How would you approach the problem of continual learning in deep neural networks?

Continual learning, also known as lifelong learning, involves training a model on a sequence of tasks without forgetting previously learned information. A good answer should cover:

- Challenges: Explaining catastrophic forgetting and the stability-plasticity dilemma

- Approaches: Discussing methods like elastic weight consolidation, progressive neural networks, or memory-based techniques

- Evaluation: Mentioning metrics for assessing forgetting and forward transfer

Look for candidates who can explain the trade-offs between different continual learning approaches and discuss potential applications in real-world scenarios. Strong answers might also touch on the relationship between continual learning and human cognitive processes.
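As an illustration of one approach named above, elastic weight consolidation adds a quadratic penalty that discourages changes to weights that mattered for earlier tasks. The sketch below shows only the penalty term, assuming the importance values (usually estimated from the Fisher information) have already been computed; all names and numbers are hypothetical.

```python
def ewc_penalty(params, old_params, importance, strength):
    """Quadratic penalty for moving away from the old task's weights.

    importance[i] says how much weight i mattered for the previous task,
    so important weights are anchored more firmly than unimportant ones.
    """
    return 0.5 * strength * sum(
        f * (p - o) ** 2
        for p, o, f in zip(params, old_params, importance)
    )


def total_loss(new_task_loss, params, old_params, importance, strength=1.0):
    """Loss on the new task plus the penalty that protects the old task."""
    return new_task_loss + ewc_penalty(params, old_params, importance, strength)


# Hypothetical two-weight model: the first weight was important for task A.
old_params = [0.0, 0.0]
importance = [10.0, 0.1]
move_important = ewc_penalty([1.0, 0.0], old_params, importance, strength=1.0)
move_unimportant = ewc_penalty([0.0, 1.0], old_params, importance, strength=1.0)
```

Moving the important weight costs far more than moving the unimportant one, which is how the method trades plasticity on the new task against stability on the old one.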

6. Explain the concept of neural architecture search and its potential impact on deep learning research and applications.

Neural Architecture Search (NAS) is an automated process for finding high-performing neural network architectures for a given task. A comprehensive answer should cover:

- Search space: Defining the set of possible architectures

- Search strategy: Methods like reinforcement learning, evolutionary algorithms, or gradient-based approaches

- Performance estimation: Techniques for efficiently evaluating candidate architectures

- Trade-offs: Discussing the balance between search time, computational resources, and model performance

Look for candidates who can critically evaluate the promises and limitations of NAS. Strong answers might discuss recent advancements like weight sharing or one-shot models, and consider the broader implications for AutoML and democratizing AI development.

7. How would you approach the problem of explainability in deep learning models, particularly for high-stakes applications?

Explainability in deep learning is crucial for building trust and understanding model decisions. A strong answer should discuss various techniques:

- Feature visualization: Methods to understand what features the model has learned

- Attribution methods: Techniques like LIME, SHAP, or Integrated Gradients to explain individual predictions

- Concept-based explanations: Approaches that align neural activations with human-understandable concepts

- Model distillation: Creating simpler, more interpretable models that mimic complex ones

Look for candidates who can discuss the trade-offs between model performance and explainability, and the importance of tailoring explanations to different stakeholders (e.g., developers, domain experts, end-users). Strong answers might also touch on the limitations of current explainability techniques and ongoing research challenges.

8. Describe how you would design a deep learning system to generate music in a specific style or genre.

A comprehensive answer should outline an approach using sequence models or generative models tailored for music. Key points to cover include:

- Data representation: Discussing how to encode musical elements (notes, rhythm, harmony) for the model

- Model architecture: Describing suitable architectures like LSTMs, Transformers, or GANs for music generation

- Training process: Explaining how to train on a corpus of music in the target style

- Evaluation: Discussing metrics and methods for assessing the quality and style-consistency of generated music

Look for candidates who can explain the challenges specific to music generation, such as maintaining long-term coherence and capturing style-specific patterns. Strong answers might also touch on techniques like conditioning on musical attributes or incorporating music theory constraints into the model.

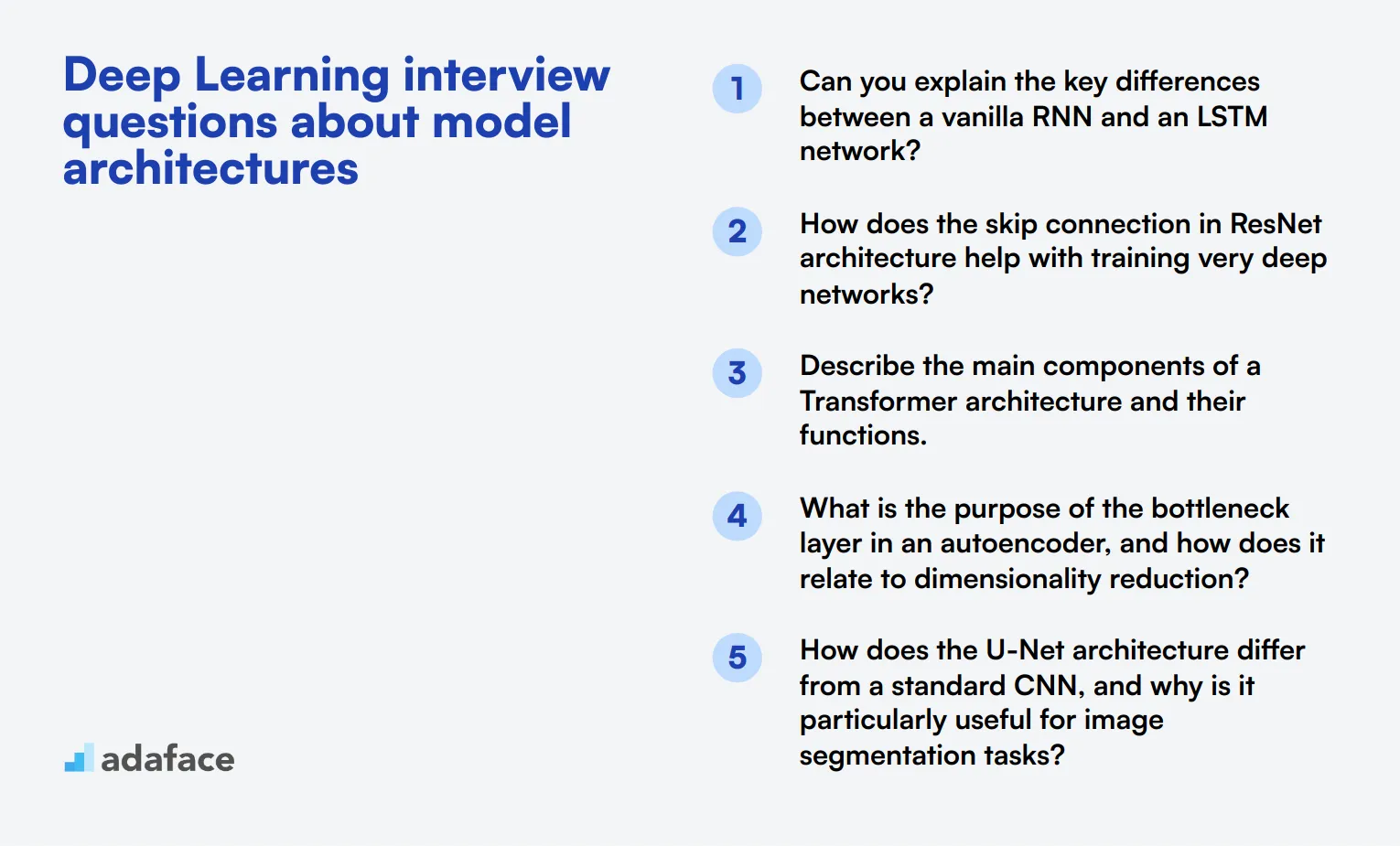

12 Deep Learning interview questions about model architectures

To assess a candidate's understanding of advanced deep learning concepts and model architectures, consider asking some of these 12 interview questions. These questions will help you gauge the applicant's knowledge of complex neural network structures and their applications in various domains.

- Can you explain the key differences between a vanilla RNN and an LSTM network?

- How do skip connections in the ResNet architecture help with training very deep networks?

- Describe the main components of a Transformer architecture and their functions.

- What is the purpose of the bottleneck layer in an autoencoder, and how does it relate to dimensionality reduction?

- How does the U-Net architecture differ from a standard CNN, and why is it particularly useful for image segmentation tasks?

- Can you explain the concept of dilated convolutions and their advantages in certain deep learning tasks?

- What is the role of the discriminator and generator in a GAN, and how do they interact during training?

- How does the YOLO (You Only Look Once) architecture achieve real-time object detection?

- Describe the key differences between InceptionNet and VGGNet architectures.

- What is the purpose of the gating mechanism in architectures like GRU (Gated Recurrent Unit)?

- How does the Transformer's self-attention mechanism differ from the attention used in seq2seq models?

- Can you explain the concept of capsule networks and how they address some limitations of CNNs?

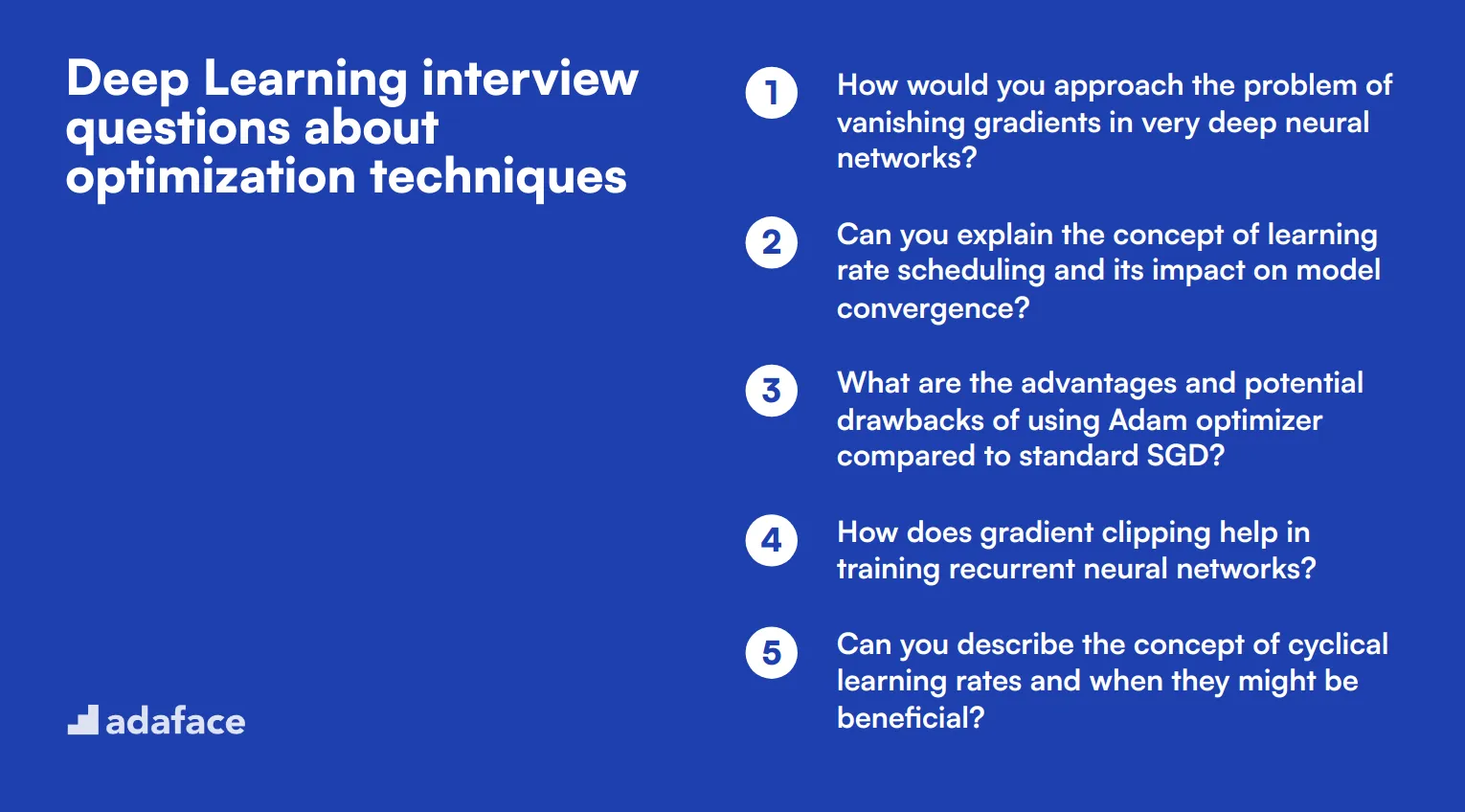

10 Deep Learning interview questions about optimization techniques

To assess a candidate's understanding of optimization techniques in deep learning, consider using these 10 interview questions. They are designed to help hiring managers evaluate a data scientist's proficiency in improving model performance and efficiency. Use these questions to gauge the applicant's practical knowledge and problem-solving skills in deep learning optimization.

- How would you approach the problem of vanishing gradients in very deep neural networks?

- Can you explain the concept of learning rate scheduling and its impact on model convergence?

- What are the advantages and potential drawbacks of using the Adam optimizer compared to standard SGD?

- How does gradient clipping help in training recurrent neural networks?

- Can you describe the concept of cyclical learning rates and when they might be beneficial?

- What is the role of momentum in optimization algorithms, and how does it affect training?

- How would you handle the exploding gradient problem in deep networks?

- Can you explain the concept of second-order optimization methods and their potential benefits?

- What strategies would you employ to optimize training time for a large-scale deep learning model?

- How does the choice of activation function impact the optimization process in deep neural networks?

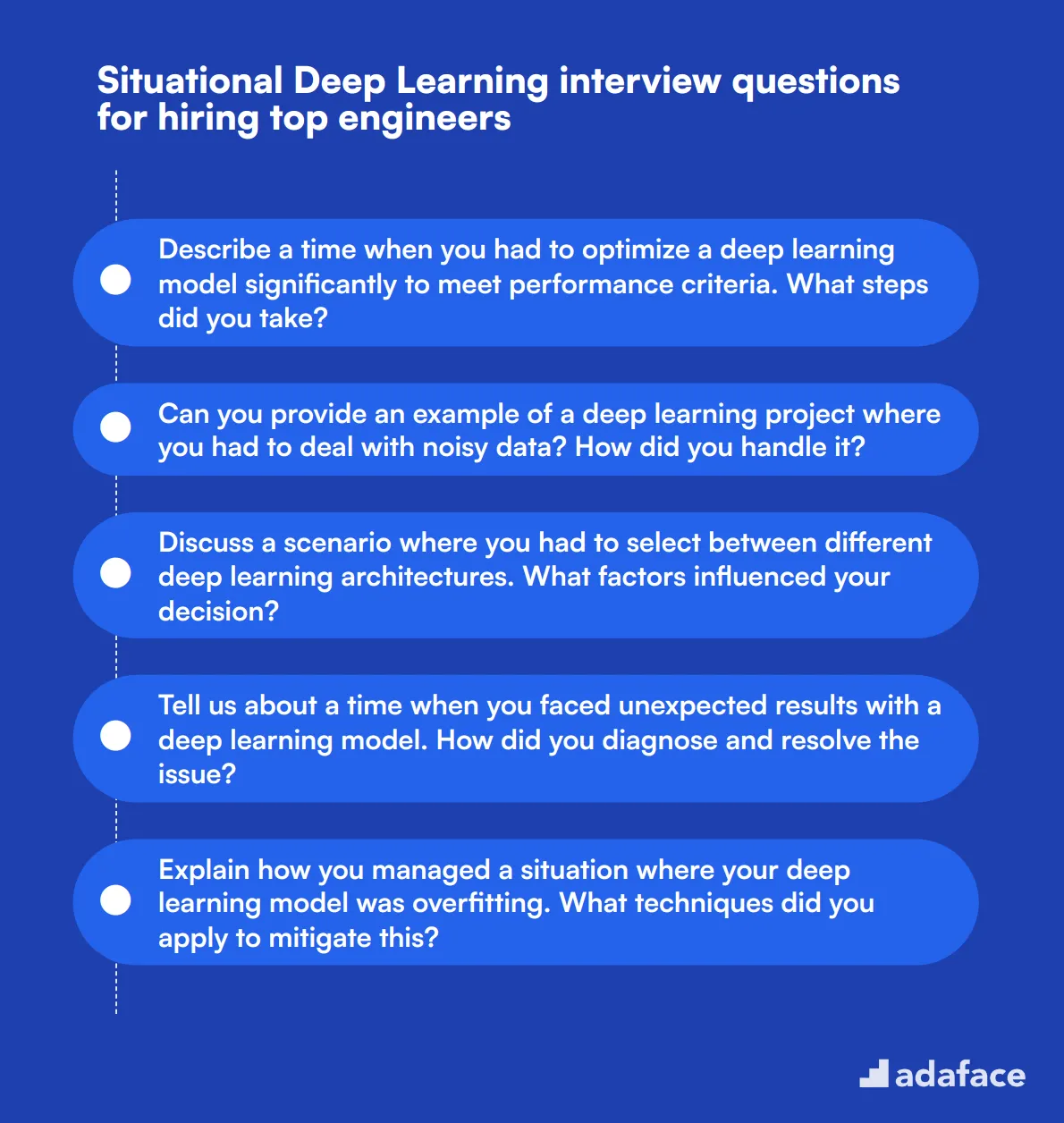

10 situational Deep Learning interview questions for hiring top engineers

To ensure you hire the best talent for deep learning roles, it's crucial to ask the right situational questions. This list of 10 situational Deep Learning interview questions will help you evaluate candidates' problem-solving skills and practical knowledge. You can use these questions to assess a candidate's ability to handle real-world challenges, ensuring they have the skills required for a data scientist or deep learning engineer.

- Describe a time when you had to optimize a deep learning model significantly to meet performance criteria. What steps did you take?

- Can you provide an example of a deep learning project where you had to deal with noisy data? How did you handle it?

- Discuss a scenario where you had to select between different deep learning architectures. What factors influenced your decision?

- Tell us about a time when you faced unexpected results with a deep learning model. How did you diagnose and resolve the issue?

- Explain how you managed a situation where your deep learning model was overfitting. What techniques did you apply to mitigate this?

- Describe a project where you had to integrate a deep learning model into a larger system. What were the key challenges, and how did you solve them?

- Can you discuss an instance where you had to balance model accuracy with computational efficiency? What trade-offs did you make?

- Talk about a time when you had to work with a highly imbalanced dataset. What methods did you employ to ensure robust model performance?

- Describe a situation where you had to implement a custom layer or operation in a deep learning framework. How did you go about it?

- Share an experience where you had to explain complex deep learning concepts to non-technical stakeholders. How did you ensure clear communication?

Which Deep Learning skills should you evaluate during the interview phase?

While a single interview might not reveal everything about a candidate's capabilities, focusing on core Deep Learning skills can help you assess whether they are the right fit for your team. Here are some key skills to evaluate during the interview phase.

Neural Networks

You can use an assessment test that includes relevant MCQs to identify candidates with strong neural network knowledge.

During the interview, you can ask targeted questions to gauge the candidate's understanding of neural networks.

Can you explain how backpropagation works in neural networks?

Look for answers that demonstrate a clear understanding of the backpropagation algorithm, its role in training neural networks, and how it adjusts weights through gradient descent.
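For reference when judging answers, the sketch below works through backpropagation by hand on a deliberately tiny network: one tanh hidden unit followed by one linear output, trained with squared error. The network shape, starting weights, and learning rate are assumptions made for this example; frameworks perform the same chain-rule bookkeeping automatically.

```python
import math


def forward(w1, w2, x):
    """Forward pass: hidden activation, then the prediction."""
    h = math.tanh(w1 * x)
    return h, w2 * h


def loss(w1, w2, x, y):
    _, y_hat = forward(w1, w2, x)
    return 0.5 * (y_hat - y) ** 2


def backward(w1, w2, x, y):
    """Backward pass: apply the chain rule from the loss back to each weight."""
    h, y_hat = forward(w1, w2, x)
    d_y_hat = y_hat - y                # dL/dy_hat
    d_w2 = d_y_hat * h                 # dL/dw2
    d_h = d_y_hat * w2                 # dL/dh
    d_w1 = d_h * (1.0 - h ** 2) * x    # dL/dw1, using tanh'(z) = 1 - tanh(z)^2
    return d_w1, d_w2


def train(w1, w2, x, y, lr=0.1, steps=200):
    """Gradient descent: repeatedly step each weight against its gradient."""
    for _ in range(steps):
        d_w1, d_w2 = backward(w1, w2, x, y)
        w1 -= lr * d_w1
        w2 -= lr * d_w2
    return w1, w2


# Hypothetical single training example and starting weights.
x, y = 1.0, 0.8
initial_loss = loss(0.5, 0.5, x, y)
w1, w2 = train(0.5, 0.5, x, y)
final_loss = loss(w1, w2, x, y)
```

A candidate who understands backpropagation should be able to explain each line of `backward` as one link in the chain rule, and why the weights are updated in the direction opposite to the gradient.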

Optimization Techniques

To assess this skill, consider using an optimization techniques test that includes questions on various optimization algorithms.

Interview questions can also help evaluate a candidate’s knowledge of optimization techniques.

What are some common pitfalls when using the Adam optimizer, and how can they be mitigated?

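Expect candidates to discuss issues such as sensitivity to the learning rate, weaker generalization than well-tuned SGD on some tasks, and the interaction between L2 regularization and adaptive updates. Potential solutions include learning rate warmup and scheduling, decoupled weight decay (AdamW), or switching optimizers for better performance.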
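To ground that discussion, here is a minimal sketch of a single Adam update for one scalar parameter, using the default hyperparameters from the original Adam formulation. The function name and the toy objective f(x) = x^2 are choices made for this example.

```python
import math


def adam_step(param, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update for a scalar parameter at step t (t starts at 1)."""
    m = beta1 * m + (1 - beta1) * grad        # running mean of gradients
    v = beta2 * v + (1 - beta2) * grad ** 2   # running mean of squared gradients
    m_hat = m / (1 - beta1 ** t)              # bias correction for the zero start
    v_hat = v / (1 - beta2 ** t)
    param -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return param, m, v


# Minimize the toy objective f(x) = x^2, whose gradient is 2x.
x, m, v = 1.0, 0.0, 0.0
for t in range(1, 201):
    grad = 2.0 * x
    x, m, v = adam_step(x, grad, m, v, t, lr=0.1)
```

Because the update divides by the gradient's own magnitude, the first step has a size of roughly `lr` regardless of how large the gradient is, which is one reason the learning rate needs careful tuning.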

Model Evaluation Metrics

You can evaluate this skill by using an assessment test that includes MCQs on various evaluation metrics.

Targeted interview questions can provide insights into the candidate’s expertise in model evaluation metrics.

How would you choose the right evaluation metric for a classification problem?

Good answers will cover metrics like accuracy, precision, recall, and F1-score, explaining when each is most appropriate and why.

Strategic Tips for Leveraging Deep Learning Interview Questions

Before you start utilizing the deep learning interview questions listed above, here are some strategic tips to enhance your recruitment process effectively.

1. Incorporate Pre-Interview Skill Tests

Implementing skill tests prior to the interview phase can significantly streamline the candidate selection process. By assessing skills upfront, you filter out less suitable candidates and focus on those who meet the technical requirements of the role.

For deep learning roles, consider using our Deep Learning Online Test or Machine Learning Online Test to evaluate fundamental competencies. Other relevant tests like the Neural Networks Test can also be useful.

These pre-interview assessments help verify candidates' abilities before you invest interview time, ensuring that only the most capable proceed to the interview stage.

2. Craft a Targeted Questionnaire for Interviews

With limited time during interviews, it's important to ask the right questions that reveal key competencies and fit with the role. Select a mixture of technical and situational questions to gauge both hard and soft skills.

Explore other skill-specific question pages to broaden your interview scope, like Python or Data Science questions, which complement deep learning roles well by assessing adjacent skills.

By carefully choosing relevant questions, you can better assess candidates on critical areas essential for success in the role.

3. Emphasize Insightful Follow-Up Questions

Using your main interview questions is just the beginning; follow-up questions are key to diving deeper into candidates' knowledge and authenticity. These questions can expose rehearsed answers and reveal the candidate's true understanding.

For a Deep Learning question about neural networks, a good follow-up might be, 'Can you explain how you've optimized network parameters in past projects?' This question probes practical experience and problem-solving skills, which are crucial for the role.

Use Deep Learning interview questions and skills tests to hire talented engineers

If you are looking to hire someone with deep learning skills, you need to ensure they possess the required expertise. The best way to assess their capabilities is through skill tests. Consider using our Deep Learning Online Test or our Neural Networks Test to evaluate your candidates accurately.

Once you've used these tests, you can shortlist the best applicants and invite them for interviews. To move forward, visit our sign-up page to create an account, or check out our online assessment platform for more information.

Deep Learning Interview Questions FAQs

The post covers questions for different experience levels, model architectures, optimization techniques, and situational scenarios.

Questions are categorized by difficulty level, specific topics, and interview stages, making it easy for interviewers to select relevant questions.

Yes, the questions cover a wide range of topics and difficulty levels, suitable for various Deep Learning positions and experience levels.

Yes, the post includes strategic tips for leveraging Deep Learning interview questions to evaluate candidates thoroughly.
